COMPSCI 714 — AI Architecture and Design: Exam Killer Book

How to Get an A in One Day (Feynman Method)

This book is reverse-engineered from every past exam (2024, 2025, Practice) with official marking schemes. Every concept is ranked by exam frequency.

Your One-Day Battle Plan (Feynman Whiteboard Method)

The Feynman Technique: Grab a blank sheet. Write the topic. Explain it out loud as if teaching a 12-year-old. When you get stuck, that's your gap. Go back, learn it, explain again.

Morning (3 hrs) — Build Understanding

| Time | Action | What to Do |

|---|---|---|

| 9:00-9:30 | Read Part 0 | Skim exam analysis + frequency map. Know what's coming. |

| 9:30-10:30 | Whiteboard Session 1 | For each MUST topic, read only the Feynman Draft. Close book. Grab paper. Talk out loud. Draw diagrams. Write what you know. Find your gaps. |

| 10:30-11:30 | Whiteboard Session 2 | Read formal sections for your gaps. Close book. Re-explain. Repeat until you can explain CNN calculations, bias-variance diagnosis, and transformer architecture from memory. |

| 11:30-12:00 | CNN Drill | Do 3 CNN dimension calculations by hand. This WILL be on the exam. |

Afternoon (3 hrs) — Practice Exam Questions

| Time | Action | What to Do |

|---|---|---|

| 13:00-13:55 | Mock Exam 1 | Time yourself. 55 minutes. No book. Simulate real conditions. |

| 13:55-14:30 | Check answers | Compare with answer key. Mark your weak spots. |

| 14:30-15:25 | Mock Exam 2 | Another timed attempt. |

| 15:25-16:00 | Review gaps | Re-read chapters for any remaining weak spots. |

Evening (2 hrs) — Cheat Sheet + Final Review

| Time | Action | What to Do |

|---|---|---|

| 19:00-20:00 | Make cheat sheet | Double-sided A4 handwritten (exam allows this!) |

| 20:00-21:00 | Final Feynman pass | Walk around. Explain each MUST topic out loud. No notes. |

What to Put on Your Cheat Sheet

Side 1 — Formulas & Calculations:

CNN CONV output: floor((n + 2p - f) / s) + 1

CNN POOL output: floor((n - f) / s) + 1

Valid padding: p = 0 Same padding: output = input size

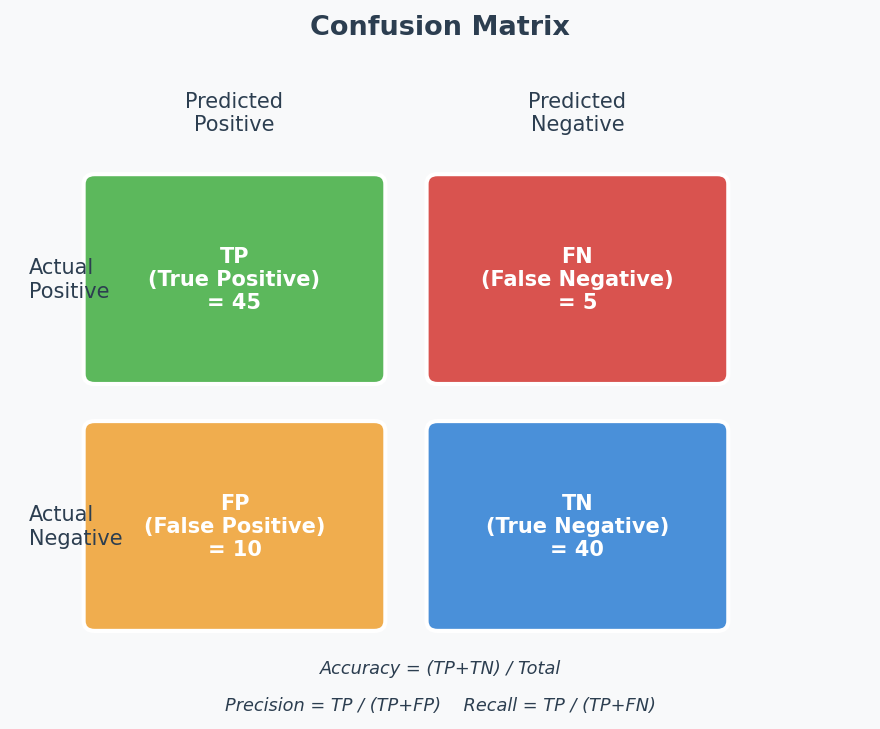

Accuracy = (TP + TN) / (TP + TN + FP + FN)

Precision = TP / (TP + FP)

Recall = TP / (TP + FN)

F1 = 2 * P * R / (P + R)

Side 2 — Decision Trees & Key Points:

DIAGNOSIS FLOWCHART:

Train HIGH, Val LOW → Overfitting (high variance)

→ Fix: regularisation, more data, data augmentation, smaller model

Train LOW, Val LOW → Underfitting (high bias)

→ Fix: bigger model, more features, train longer, remove regularisation

Train HIGH, Val HIGH → Good fit!

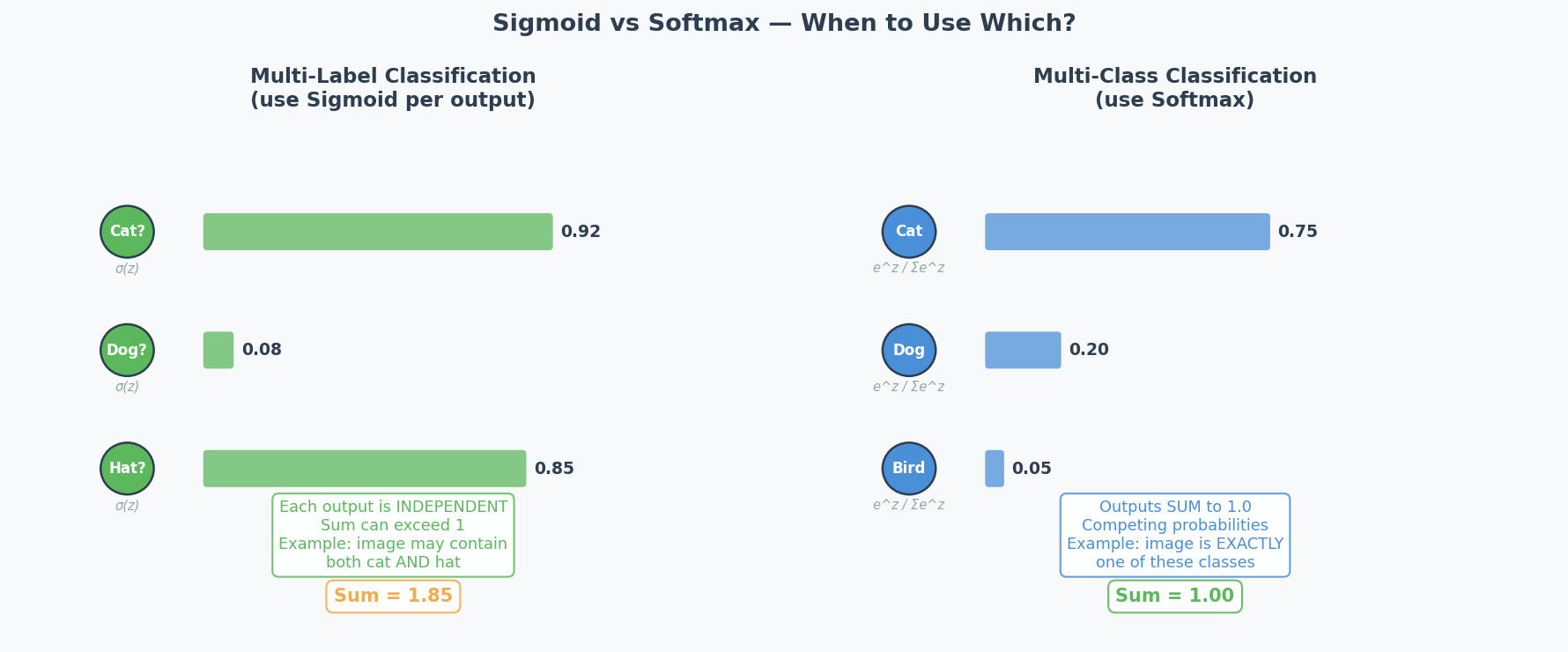

OUTPUT ACTIVATION:

Multi-class (one label) → Softmax

Multi-label (many labels) → Sigmoid

Regression → Linear (no activation)

BATCH NORM EFFECTS: faster training, reduce vanishing gradients,

regularisation effect, less sensitive to weight init

Exam Format

| Detail | 2025 | 2024 |

|---|---|---|

| Time | 60 min (5 read + 55 write) | 60 min (5 read + 55 write) |

| Marks | 20 | 30 |

| Questions | 6 short-answer | 7 short-answer |

| Allowed | Double-sided handwritten notes | Double-sided page of notes |

Golden rule: "Quality over quantity" — be concise. A 3-sentence precise answer beats a full-page ramble.

考前心理建设(Mental Preparation)

作为中国留学生,你的 ML 概念理解可能比很多本地学生都强。你唯一需要练的是:

- 先说结论(不要铺垫)

- 用题目的数字(不要泛泛而谈)

- 连接词(because, therefore, however — 让逻辑清晰)

- 不要怕犯语法错误(内容正确比语法完美重要100倍)

记住:考官打分看的是你理解不理解,不是你英语好不好。 一个有小语法错误但逻辑清晰的答案 >> 一个语法完美但空洞的答案。

Exam Question-by-Question Analysis

Source: 2025 S1 Test + 2024 S1 Test + Practice Test (with official answers)

2025 S1 Mid-Semester Test (20 marks, 6 questions)

Q1: Dataset Cleaning [2 marks]

| Field | Detail |

|---|---|

| Type | Data table analysis → justify 4 cleaning steps |

| Module | A: Data Preprocessing |

| Difficulty | ★☆☆ |

| Keywords | missing values, median imputation, attribute removal, outlier detection |

| Intent | Can you read dataset statistics and make cleaning decisions? |

The Trick: Attribute 4 has 9995/10000 missing values → remove entirely. Attribute 2 has extreme min/max relative to mean → outliers exist. No missing values in categorical/binary → no need for most-frequent imputation.

Q2: Evaluation and Design Choices [3 marks]

| Field | Detail |

|---|---|

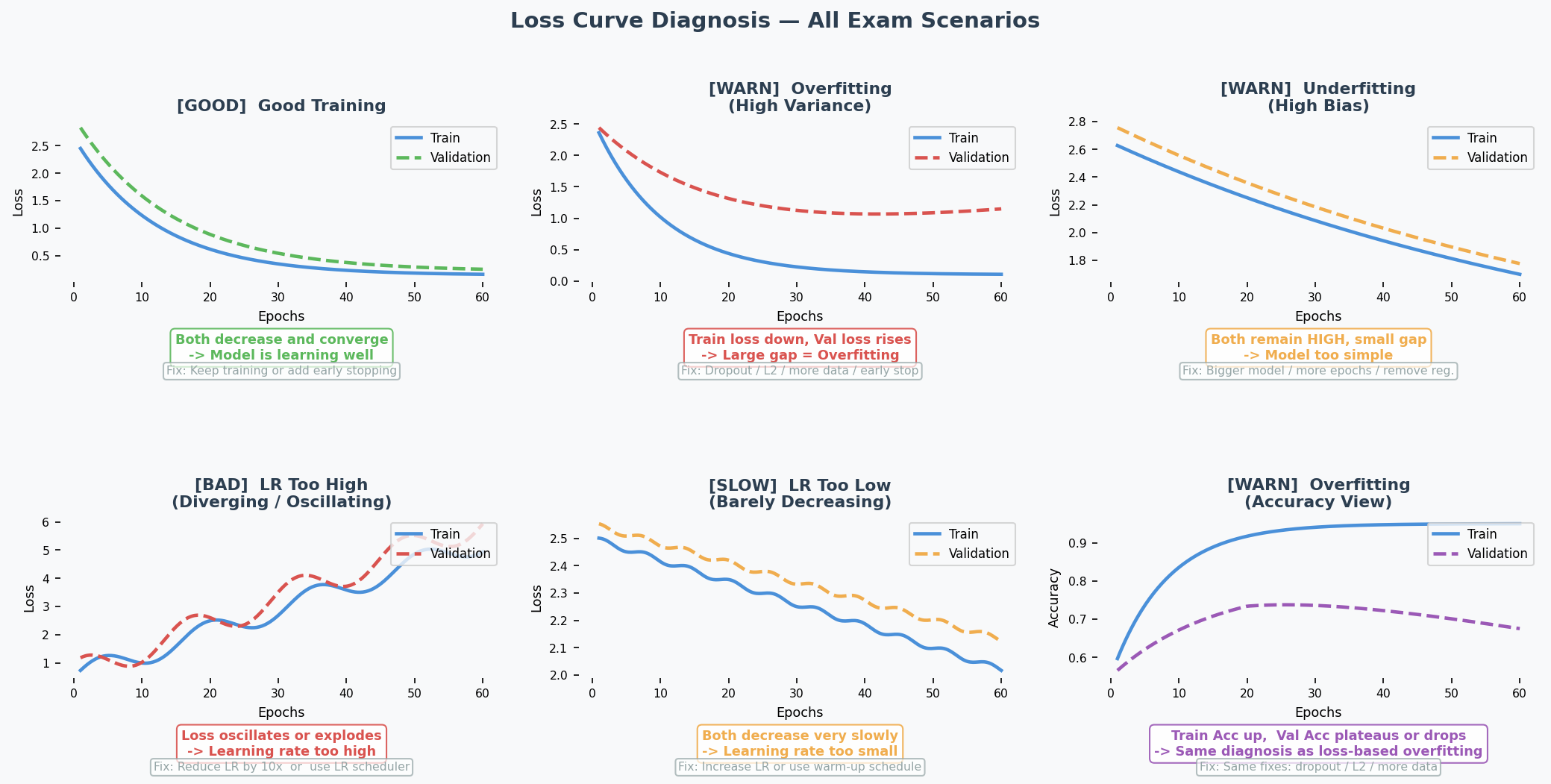

| Type | (a) Interpret loss curves → diagnose bias/variance (b) Suggest 2 improvements |

| Module | A: Bias-Variance |

| Difficulty | ★★☆ |

| Keywords | overfitting, high variance, high bias, regularisation, data augmentation |

| Intent | Can you read training curves and prescribe fixes? |

The Trick: Gap between training and validation = high variance (overfitting). Training loss still relatively high = possible high bias too. Each suggestion must target a DIFFERENT aspect.

Q3: Activation Functions [3 marks]

| Field | Detail |

|---|---|

| Type | (a) Explain dying ReLU + LeakyReLU fix (b) Choose output activation for multi-label |

| Module | B: MLP / Activation Functions |

| Difficulty | ★★☆ |

| Keywords | ReLU, LeakyReLU, dying neurons, sigmoid, multi-label vs multi-class |

| Intent | Do you understand activation function failure modes and design choices? |

The Trick: Multi-label (multiple anomalies per image) = sigmoid (independent per output). NOT softmax (which forces probabilities to sum to 1).

Q4: Learning Rate [4 marks]

| Field | Detail |

|---|---|

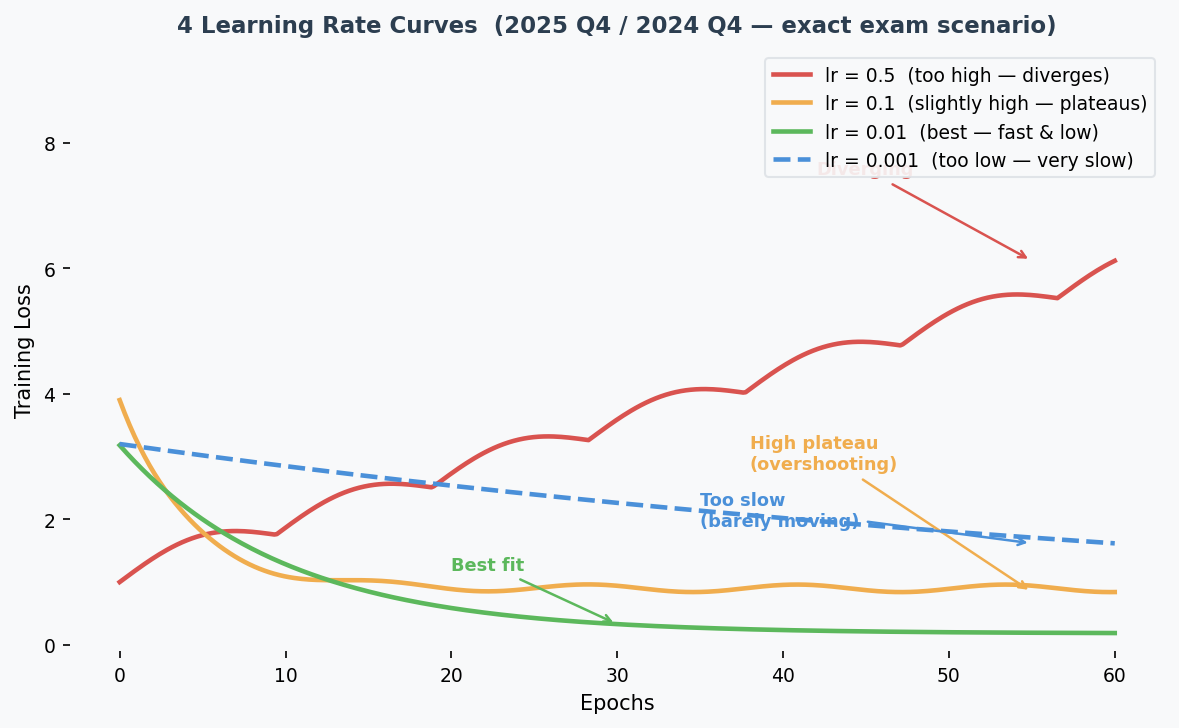

| Type | Match 4 loss curves to 4 learning rates |

| Module | A: Optimization |

| Difficulty | ★★☆ |

| Keywords | divergence, convergence, overshooting, learning rate |

| Intent | Can you visually identify learning rate effects? |

The Trick: Diverging (loss goes up) = 0.5. Slow descent = 0.001. Fast convergence to HIGH loss = 0.1 (overshoots optimum). Best convergence to LOW loss = 0.01.

Q5: Transformers [4 marks]

| Field | Detail |

|---|---|

| Type | (a) Explain masked attention in decoder (b) Explain ViT class token |

| Module | B: Transformer |

| Difficulty | ★★★ |

| Keywords | masked attention, autoregressive, ViT, [CLS] token, classification |

| Intent | Deep understanding of Transformer variants |

The Trick: (a) Mask prevents looking at future tokens → preserves autoregressive property during training. (b) [CLS] token aggregates info from all patches → efficient classification without processing all embeddings separately.

Q6: CNNs [4 marks]

| Field | Detail |

|---|---|

| Type | (a) Multiple choice: FC layer inputs (b) Show calculation |

| Module | B: CNN |

| Difficulty | ★★☆ |

| Keywords | valid padding, same padding, convolution, max pooling, flatten |

| Intent | Can you compute dimensions through a CNN pipeline? |

Answer: 180. Pipeline: [35,35,3] → Conv1(valid,k=7,s=2) → [15,15,10] → Pool1(k=2,s=2) → [7,7,10] → Conv2(same,k=3,s=1) → [7,7,20] → Pool2(k=2,s=2) → [3,3,20] → Flatten = 180.

2024 S1 Mid-Semester Test (30 marks, 7 questions)

Q1: Data Preprocessing [4 marks]

| Field | Detail |

|---|---|

| Type | Infer data characteristics from preprocessing pipeline |

| Module | A: Data Preprocessing |

| Difficulty | ★★☆ |

| Intent | Can you reverse-engineer what raw data looks like from the pipeline? |

Pipeline 1 (median imputer → standardisation → log transform): Numerical data, missing values, different scales, heavy-tailed distribution.

Pipeline 2 (most-frequent imputer → one-hot encoding): Categorical data, missing values, no ordinal relationship, not too many categories.

Q2: Design Choices [6 marks] — HIGHEST VALUE QUESTION

| Field | Detail |

|---|---|

| Type | Overfitting scenario (train=95%, val=60%), evaluate 3 fixes |

| Module | A: Bias-Variance |

| Difficulty | ★★☆ |

- More epochs: NO — worsens overfitting

- Larger dataset: YES — more diverse data helps generalise

- L2 regularisation: YES — penalises large weights, promotes simpler model

Q3: Evaluation [4 marks]

| Field | Detail |

|---|---|

| Type | Confusion matrix → calculate metrics → interpret |

| Module | E: Metrics |

Results: Accuracy=60%, Recall=100%, Precision=56%. The model predicts almost everything as positive. Looks like it catches all positives (perfect recall) but actually just labels everything positive (terrible precision).

Q4: Learning Rate and Optimisers [4 marks]

| Field | Detail |

|---|---|

| Type | (1) LR schedule example + benefit (2) Explain momentum |

Key answers: (1) Exponential decay — fast at start, fine-tune near optimum. (2) Momentum = exponentially decaying average of past gradients → smoother updates, speeds up convergence.

Q5: RNN and Transformer [4 marks]

| Field | Detail |

|---|---|

| Type | (1) Sequential processing: advantage AND drawback (2) How Transformer fixes it |

Key: (1) Advantage: naturally captures order. Drawback: can't parallelise → slow for long sequences. (2) Transformer: processes all tokens in parallel via embeddings + adds positional encoding for order.

Q6: CNN Feature Map [4 marks]

| Field | Detail |

|---|---|

| Type | Calculate dimensions after conv and pooling layers |

Answers: Conv: ((50+0-5)/3)+1 = 16 → [16,16,10]. AvgPool: ((50-5)/5)+1 = 10 → [10,10,5]. MaxPool: same dimensions (only values differ).

Q7: DNN Training [4 marks]

| Field | Detail |

|---|---|

| Type | (1) Why deep nets are hard to train (2) Two strategies to help |

Key: (1) Vanishing/exploding gradients + overfitting + longer training. (2) Batch norm, skip connections (ResNet), better optimisers (Adam), LSTM/GRU.

Practice Test (~32 marks, 7 questions)

Q1: Data Pre-processing [5 marks]

Two approaches to missing data + when to use each. Remove attribute (when mostly missing) or impute values (when reasonable amount missing).

Q2: DNN and Generalisation [5 marks]

High bias → underfitting → increase model, add data, transfer learning. High variance → overfitting → regularisation, more data, reduce model.

Q3: Design Choices [6 marks]

Underfitting scenario (train=val=50%). Increase size = YES. Zero init = NO (symmetry problem). Dropout = NO (regularisation doesn't help underfitting).

Q4: Evaluation [3 marks]

Accuracy=70%, Recall=33%. Accuracy misleading due to class imbalance — model bad at finding positives.

Q5: Batch Normalisation [5 marks]

Effects: speeds up training, reduces vanishing gradients, regularisation effect, reduces weight init sensitivity.

Q6: Attention and Transformers [4 marks]

Multi-head attention = stacks multiple attention heads with separate Q/K/V. Benefit: focuses on different aspects simultaneously.

Q7: CNNs [5 marks]

Reverse-engineer hyperparameters from diagram. Early layers = edge detectors (low-level features), deeper layers = complex features.

Exam Topic Frequency Map

The Heat Map: What WILL Be on Your Exam

| Topic | 2025 | 2024 | Practice | Count | Priority |

|---|---|---|---|---|---|

| Bias-Variance / Design Choices | Q2 (3m) | Q2 (6m) | Q2+Q3 (11m) | 4 | MUST |

| CNN Calculations | Q6 (4m) | Q6 (4m) | Q7 (5m) | 3 | MUST |

| Transformer / Attention | Q5 (4m) | Q5 (4m) | Q6 (4m) | 3 | MUST |

| Data Preprocessing | Q1 (2m) | Q1 (4m) | Q1 (5m) | 3 | MUST |

| Learning Rate / Optimizers | Q4 (4m) | Q4 (4m) | — | 2 | HIGH |

| Confusion Matrix Metrics | — | Q3 (4m) | Q4 (3m) | 2 | HIGH |

| Activation Functions | Q3 (3m) | — | — | 1 | MED |

| RNN vs Transformer | — | Q5 (4m) | — | 1 | MED |

| DNN Training Challenges | — | Q7 (4m) | — | 1 | MED |

| Batch Normalisation | — | — | Q5 (5m) | 1 | MED |

Priority Guide

| Priority | Rule | Your Action |

|---|---|---|

| MUST | Every exam, >= 3 appearances | Master completely. Can explain on whiteboard from memory. |

| HIGH | 2 out of 3 exams | Understand well. Can calculate and explain. |

| MED | 1 out of 3 exams | Know key points. Can write 3-4 sentences if asked. |

The 80/20 Rule: 4 Topics = ~65% of All Marks

1. Bias-Variance + Design Choices (~20% of all marks)

- Diagnose overfitting vs underfitting from numbers/curves

- For each fix: say YES/NO + link to the specific diagnosis

- Never confuse: regularisation fights overfitting, NOT underfitting

2. CNN Calculations (~15% of all marks)

- Two formulas: conv output + pool output

- Practice multi-layer pipeline calculations

- Know valid vs same padding

3. Transformer / Attention (~15% of all marks)

- Masked attention = prevent seeing future tokens

- Multi-head attention = multiple perspectives simultaneously

- ViT: patches → embeddings → [CLS] token → classification

4. Data Preprocessing (~15% of all marks)

- Which imputation for which data type

- When to remove attribute vs impute

- Read pipeline → infer data characteristics

+ 2 More for Safety (~20% more marks)

- Learning Rate — curve shapes, momentum, LR schedules

- Confusion Matrix — calculate accuracy/precision/recall, spot class imbalance traps

Total Marks by Topic (All Exams Combined)

Bias-Variance/DC ████████████████████ 20 marks

CNN █████████████ 13 marks

Transformer ████████████ 12 marks

Data Preprocessing ███████████ 11 marks

Learning Rate ████████ 8 marks

Eval Metrics ███████ 7 marks

Batch Norm █████ 5 marks

DNN Training ████ 4 marks

RNN ████ 4 marks

Activation Func ███ 3 marks

Teacher's Exam Style Analysis

Core Philosophy

"We privilege quality over quantity" — concise, clear, correct.

The teacher tests applied understanding, not memorisation. Every question gives a scenario and asks you to reason about it.

Question Patterns That Repeat Every Exam

Pattern 1: "Evaluate These Suggestions" (EVERY EXAM)

Format: Given model settings + results, evaluate 2-3 suggestions.

How to nail it:

- FIRST: diagnose the problem (overfitting or underfitting?)

- THEN: for each suggestion, say YES/NO

- THEN: explain WHY by connecting to your diagnosis

Scoring: 2 marks each (1 for answer, 1 for reasoning connected to scenario)

| Scenario | Diagnosis | What Helps | What Doesn't |

|---|---|---|---|

| Train HIGH, Val LOW | Overfitting | Regularisation, more data, data aug | More epochs, bigger model |

| Train LOW, Val LOW | Underfitting | Bigger model, more features | Regularisation, dropout |

Pattern 2: CNN Dimension Calculation (EVERY EXAM)

Format: Given architecture spec → compute output at each layer → find FC input size.

How to nail it: Write this for EVERY layer:

[Layer Name]

Input: [H, W, C]

Formula: ((H + 2p - f) / s) + 1

Output: [H', W', C']

Pattern 3: Transformer Two-Part Question (EVERY EXAM)

Format: (a) Explain mechanism X. (b) Why is it useful?

How to nail it: Part (a) = WHAT it does. Part (b) = WHY it matters (concrete benefit).

Pattern 4: Loss Curve / Metric Interpretation (2/3 EXAMS)

Format: Given graph or numbers → diagnose + suggest fix.

Traps the Teacher Sets (And How to Avoid Them)

| Trap | What Students Do Wrong | Correct Answer |

|---|---|---|

| Underfitting + regularisation | "Use dropout to improve!" | NO — dropout fights overfitting, this is underfitting |

| Multi-label output activation | "Use softmax" | NO — sigmoid (independent per output) |

| Zero weight initialisation | "Smaller weights = better" | NO — zero creates symmetry, neurons can't differentiate |

| More epochs when overfitting | "Train longer to learn more" | NO — worsens overfitting |

| High accuracy with imbalanced data | "70% accuracy = good model" | Check precision/recall — might just predict majority class |

| Max vs Avg pooling output size | "Different pooling = different size" | SAME size, only values differ |

Sentence Patterns in Questions → What They Want

| Question Says | They Actually Want |

|---|---|

| "Explain if it is likely to improve..." | YES/NO + reasoning linked to the specific scenario |

| "Describe performance in terms of bias and variance" | Identify overfitting/underfitting from curves |

| "Briefly justify" | 2-3 sentences MAX with the key reason |

| "Show your calculation steps" | Formula → numbers → result (at each layer) |

| "What do you think about this model?" | Go BEYOND numbers — what is the model actually doing? |

| "Explain in your own words" | Show understanding, not textbook recitation |

Concepts That Always Appear Together

Bias-Variance ←→ Regularisation ←→ Design Choices

(one question covers all three — master the connections)

CNN Dimensions ←→ Valid/Same Padding ←→ FC Layer Size

(pipeline calculation from start to end)

Transformer ←→ Masked Attention ←→ Positional Encoding ←→ ViT

(mechanism + why it exists)

Loss Curves ←→ Learning Rate ←→ Optimizers

(visual diagnosis skill)

Confusion Matrix ←→ Class Imbalance ←→ Misleading Accuracy

(numbers game — always check precision AND recall)

Module A — Data Preprocessing

Exam Importance

MUST | Every exam has a data preprocessing question (2025 Q1, 2024 Q1, Practice Q1)

Feynman Draft

Imagine you're a chef and someone delivers raw ingredients to your kitchen. Some tomatoes are rotten (outliers), some boxes are missing labels (missing values), some ingredients are measured in grams while others are in kilograms (different scales). You can't cook with this mess — you need to clean and prepare everything first. That's data preprocessing.

The 4 things you might need to do:

-

Missing Values(缺失值) — Some cells in your spreadsheet are empty

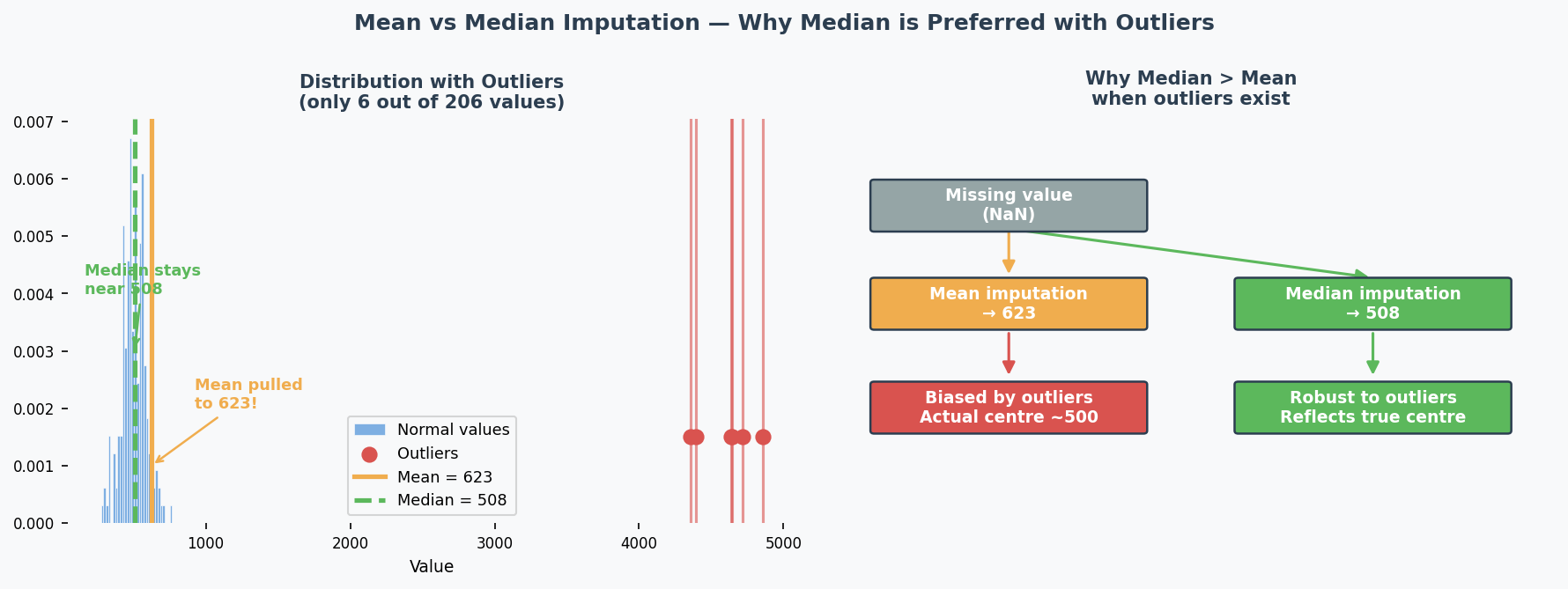

- Numerical data? → Fill with median (robust to outliers) or mean — this is called Imputation(插补/填补)

- Categorical data? → Fill with most frequent value (mode)

- Almost all missing? → Remove the entire column (attribute)

-

Outliers(异常值/离群值) — Values that are absurdly far from the rest

- Look at: is max/min way larger than mean + a few standard deviations?

- Example: mean=500, std=100, but max=50000 → definitely outliers

-

Scaling(缩放) — Features on different scales confuse the model

- Standardisation(标准化) (z-score): $ x' = (x - \mu) / \sigma $ → mean=0, std=1

- Use when features have different units/ranges

-

Encoding(编码) — Models need numbers, not text

- One-hot encoding(独热编码): turn "Red/Blue/Green" into [1,0,0], [0,1,0], [0,0,1]

- Use for categorical data with NO natural ordering

Toy Example: Dataset with 10,000 samples:

| Attribute | Type | Missing | Mean | Std | Max | Min |

|---|---|---|---|---|---|---|

| Attr 1 | Binary | 0 | / | / | / | / |

| Attr 2 | Numerical | 15 | 500 | 100 | 50000 | -1000 |

| Attr 3 | Categorical | 0 | / | / | / | / |

| Attr 4 | Numerical | 9995 | 1.2 | 0.2 | 2.0 | 0.0 |

| Attr 5 | Numerical | 23 | 25360 | 30215 | 125000 | -75000 |

Analysis:

- Most-frequent imputation? NO — binary and categorical have zero missing values

- Median imputation(中位数插补)? YES — Attr 2 (15 missing) and Attr 5 (23 missing) have some missing numerical values; median is better than mean because outliers exist

- Remove attribute(移除特征)? YES — Attr 4 has 9995/10000 missing → useless, imputation would create fake data

- Outlier removal(异常值移除)? YES — Attr 2's max (50000) is ~495 standard deviations from the mean!

Common Misconception: Students think "always impute" is the right answer. But if 99.95% of values are missing, imputation creates misleading data — just remove it.

Core Intuition: Preprocessing matches each data problem to the right cleaning tool — like choosing the right kitchen tool for each ingredient.

The Pipeline Reading Trick (2024 Exam Favourite)

The teacher loves giving you a pipeline diagram and asking "what does the raw data look like?"

Reverse-engineer the pipeline:

| Pipeline Step | What It Tells You About Raw Data |

|---|---|

| Median imputer(中位数填补器) | Numerical data with missing values; likely has outliers (median is more robust than mean) |

| Most-frequent imputer(众数填补器) | Categorical data with missing values |

| Standardisation(标准化) | Features on different scales |

| Log transformation(对数变换) | Heavy-tailed distribution(重尾分布) (some very large values) |

| One-hot encoding(独热编码) | Categorical data, not too many categories, no natural ordering |

Example from 2024 Q1:

- Pipeline 1: median imputer → standardisation → log transform

- → Numerical data, missing values, different scales, heavy-tailed

- Pipeline 2: most-frequent imputer → one-hot encoding

- → Categorical data, missing values, no ordinal relationship

Past Exam Questions

2025 Q1 [2m]: Given dataset table, justify 4 cleaning steps (yes/no + why) 2024 Q1 [4m]: Given 2 pipelines, describe characteristics of raw data Practice Q1 [5m]: Describe 2 approaches to missing data + when each is preferred

中文思维 → 英文输出

| 你脑中的中文想法 | 考试中应该写的英文 |

|---|---|

| 这个特征缺失值太多了,应该删掉 | "The attribute should be removed because [X]% of values are missing, making imputation unreliable." |

| 用中位数比均值好,因为有离群值 | "Median imputation is preferred over mean because the data contains outliers — the median is robust to extreme values." |

| 数据需要标准化因为量纲不同 | "Standardisation is necessary because features are on different scales." |

| 这个是分类数据,用独热编码 | "One-hot encoding is applied because the data is categorical with no natural ordering." |

| 从pipeline反推原始数据特征 | "The use of [step] suggests that the raw data [characteristic]." |

| 这个数据有异常值,最大值太离谱了 | "The attribute contains outliers — the maximum value is [X] standard deviations from the mean." |

| 二元数据不需要填补 | "Binary attributes with no missing values do not require imputation." |

本章 Chinglish 纠正

| Chinglish (avoid) | Correct English |

|---|---|

| "The data has a lot of missing" | "The data contains a significant proportion of missing values" |

| "We should delete this feature" | "This attribute should be removed" |

| "Use median because it is more better" | "Median is preferred because it is more robust to outliers" |

| "The data need to be standard" | "The data requires standardisation" |

| "This feature is category type" | "This is a categorical attribute" |

| "The max value is too big, it is outlier" | "The maximum value is [X] standard deviations above the mean, indicating the presence of outliers" |

Whiteboard Self-Test

- Can you list 4 data cleaning operations and when to use each?

- Given a dataset summary table, can you justify each cleaning step?

- Given a pipeline diagram, can you describe what the raw data looks like?

- Do you know why median is preferred over mean for imputation with outliers?

Bias-Variance Tradeoff & Design Choices

Exam Importance

MUST | The single most tested topic: 4 questions across all exams, ~20 marks total

Feynman Draft

Imagine you're learning to throw darts at a bullseye.

-

High bias(高偏差) = you consistently miss in the same direction. Your aim is systematically off. You're too rigid — like using only your wrist instead of your whole arm. This is underfitting(欠拟合) — your model is too simple to capture the real pattern.

-

High variance(高方差) = your throws are scattered all over the board. Sometimes you hit the bullseye, sometimes the wall. You're too sensitive to tiny movements. This is overfitting(过拟合) — your model memorises the training data noise instead of learning the real pattern.

How do you diagnose this from training curves?

| What You See | Diagnosis | Name |

|---|---|---|

| Training accuracy HIGH, Validation accuracy LOW | High variance | Overfitting |

| Training accuracy LOW, Validation accuracy LOW | High bias | Underfitting |

| Training accuracy HIGH, Validation accuracy HIGH | Good fit! | Keep it |

Toy Example with Numbers:

| Scenario | Train Acc | Val Acc | Diagnosis | What to Do |

|---|---|---|---|---|

| A | 95% | 60% | Overfitting(过拟合) | Regularisation, more data |

| B | 50% | 50% | Underfitting(欠拟合) | Bigger model, remove regularisation |

| C | 92% | 88% | Good fit | Ship it |

Common Misconception: "If validation accuracy is low, always add regularisation." WRONG! Regularisation helps overfitting (A), but makes underfitting (B) even WORSE because it constrains the model further.

Core Intuition: Bias(偏差) = model too simple for the problem. Variance(方差) = model too complex for the data amount.

The Design Choices Decision Tree (EXAM ESSENTIAL)

This is the teacher's favourite question format. Memorise this:

Step 1: DIAGNOSE

Train >> Val? → Overfitting (high variance)

Train ≈ Val ≈ low? → Underfitting (high bias)

Step 2: PRESCRIBE

If OVERFITTING(过拟合):

✅ Regularisation(正则化) (L1, L2, Dropout) → constrains model complexity

✅ More/diverse training data → helps generalise(泛化)

✅ Data augmentation(数据增强) → more variety without new data

✅ Batch normalisation(批量归一化) → regularising effect

✅ Early stopping(提前停止) → stop before overfitting

✅ Reduce model size → less capacity to memorise

❌ More epochs → makes it WORSE

❌ Bigger model → makes it WORSE

If UNDERFITTING(欠拟合):

✅ Increase model size (more layers/neurons) → more capacity(容量)

✅ Train longer → give it time to learn

✅ Remove/reduce regularisation → stop constraining

✅ Better features / more data → more signal

✅ Transfer learning(迁移学习) → start from pretrained model

❌ Regularisation → makes it WORSE

❌ Dropout → makes it WORSE

❌ Smaller model → makes it WORSE

Past Exam Questions with Answer Logic

2024 Q2 [6 marks] — Overfitting Scenario

Setup: 5 hidden layers, ReLU, 20 neurons/layer, 1000 epochs. Train=95%, Val=60%.

| Suggestion | Answer | Reasoning |

|---|---|---|

| Train for 2000 epochs | NO | Already overfitting → more training = memorise more noise |

| Larger dataset | YES | More diverse data helps learn general patterns, not noise |

| L2 regularisation | YES | Penalises large weights → simpler, more generalisable model |

Practice Q3 [6 marks] — Underfitting Scenario

Setup: 2 hidden layers, ReLU, 5 neurons/layer, 2000 epochs, L1 regularisation. Train=50%, Val=50% (achievable=95%).

| Suggestion | Answer | Reasoning |

|---|---|---|

| Increase network size | YES | Underfitting = model too small → need more capacity |

| Initialise weights to 0 | NO | Creates symmetry → all neurons learn identical things → can't differentiate features |

| Use dropout | NO | Dropout is regularisation → fights overfitting, not underfitting |

2025 Q2 [3 marks] — Curve Interpretation

Setup: Training curves after 20 epochs showing gap between train/val accuracy and diverging loss curves.

(a) Diagnose: High variance (overfitting) — clear gap between training and validation. Possibly also high bias if training loss is still high.

(b) Two changes (each targeting different aspect):

- Regularisation (e.g., L2, dropout) → reduces overfitting

- Data augmentation → more varied training data → better generalisation

- Batch normalisation → has regularising effect

- Increase model size (if bias is high) → more capacity to fit

How to Read Training Curves

Quick reference table:

| What you see on the plot | Diagnosis | Fix |

|---|---|---|

| Train loss ↓, Val loss ↑ after a point | Overfitting(过拟合) (high variance) | Dropout, L2, more data, early stop |

| Both losses stay HIGH | Underfitting(欠拟合) (high bias) | Bigger model, more epochs, less regularisation |

| Loss oscillates / explodes | LR too high | Reduce LR ×10, use scheduler |

| Both losses barely move | LR too low | Increase LR, use warm-up |

| Both losses ↓ and converge | Good fit | Keep going or early stop |

English Expression Templates

Diagnosing:

- "The model displays high variance as there is a clear gap between training and validation accuracy."

- "This indicates overfitting, where the model fits the training data too closely but fails to generalise."

Prescribing:

- "Applying regularisation can help reduce overfitting by limiting model complexity."

- "Training on a larger dataset might help the model learn more general patterns."

- "This will not help because the model is already underfitting — adding regularisation would constrain it further."

中文思维 → 英文输出

| 你脑中的中文想法 | 考试中应该写的英文 |

|---|---|

| 过拟合了,训练高验证低 | "The model is overfitting — the training accuracy (X%) is significantly higher than the validation accuracy (Y%)." |

| 欠拟合,两个都很低 | "The model is underfitting, as both training and validation accuracies are low, indicating insufficient model capacity." |

| 加正则化能改善 | "Applying regularisation is likely to improve validation accuracy by constraining model complexity." |

| 不能再多训练了,会更差 | "Training for more epochs will not help — it is likely to worsen overfitting as the model continues to memorise training noise." |

| dropout不能解决欠拟合 | "Dropout will not help because the model is underfitting. Dropout reduces effective capacity, which would worsen the problem." |

| 模型太简单了,学不到东西 | "The model lacks sufficient capacity to capture the underlying patterns in the data." |

| 需要更多数据来泛化 | "Increasing the dataset size is likely to help the model generalise better by providing more diverse examples." |

| 权重初始化为0不行 | "Initialising all weights to zero creates symmetry — all neurons learn identical features, preventing the network from differentiating." |

本章 Chinglish 纠正

| Chinglish (avoid) | Correct English |

|---|---|

| "The model is overfit" | "The model is overfitting" (use progressive form for the state) |

| "It should add regularisation" | "Applying regularisation would help" |

| "The gap is too big" | "There is a significant discrepancy between training and validation performance" |

| "More data can solve" | "Increasing the dataset size is likely to help the model generalise better" |

| "The model is not enough complex" | "The model has insufficient capacity" |

| "Train more epoch will be worse" | "Training for more epochs is likely to worsen overfitting" |

Whiteboard Self-Test

- Can you draw the bias-variance diagnosis table from memory?

- Given train=95%/val=55%, what's the diagnosis? What 3 things help?

- Given train=50%/val=50%, what's the diagnosis? Why does dropout NOT help?

- Can you explain why zero weight initialisation is bad?

- Can you explain why more epochs worsens overfitting?

Optimization: Learning Rate, Schedules & Optimizers

Exam Importance

HIGH | 2 out of 3 exams (2025 Q4, 2024 Q4) — 8 marks total

Feynman Draft

Imagine you're blindfolded on a hilly landscape, trying to find the lowest valley. You take steps downhill based on the slope you feel under your feet. That's Gradient Descent(梯度下降).

The learning rate(学习率) is your step size:

- Too big (0.5): You leap so far you jump OVER the valley and end up on the other side, maybe even higher. Your loss goes UP. This is divergence(发散).

- Too small (0.001): You take tiny baby steps. You'll eventually get there, but it takes forever. This is slow convergence(收敛缓慢).

- Just right (0.01): You stride confidently into the valley. Fast convergence(收敛) to low loss.

- Slightly too big (0.1): You get near the valley but keep overshooting(超调) back and forth, settling at a suboptimal point.

The 4 Loss Curves — This Exact Question Was on 2025 AND 2024:

| Curve | Shape | Learning Rate | Why |

|---|---|---|---|

| Red (solid) | Loss goes UP / oscillates | 0.5 (highest) | Steps too large → jumps over optimum repeatedly |

| Orange (solid) | Fast drop but plateaus HIGH | 0.1 | Overshoots, settles at suboptimal point |

| Green (solid) | Fast drop to LOWEST loss | 0.01 | Sweet spot — fast convergence to good minimum |

| Blue (dashed) | Very slow descent | 0.001 (lowest) | Tiny steps → barely moves |

Common Misconception: "Curve 2 could be lr=0.001 because it gets stuck." While small lr CAN get stuck in local minima, the teacher's intended answer is: converging to a high loss = lr slightly too high (overshooting), not too low. Match the explanation consistently to the other curves.

Core Intuition: Learning rate controls step size — too big overshoots, too small is slow, just right converges fast to a good minimum.

Learning Rate Schedules(学习率调度) (2024 Q4.1)

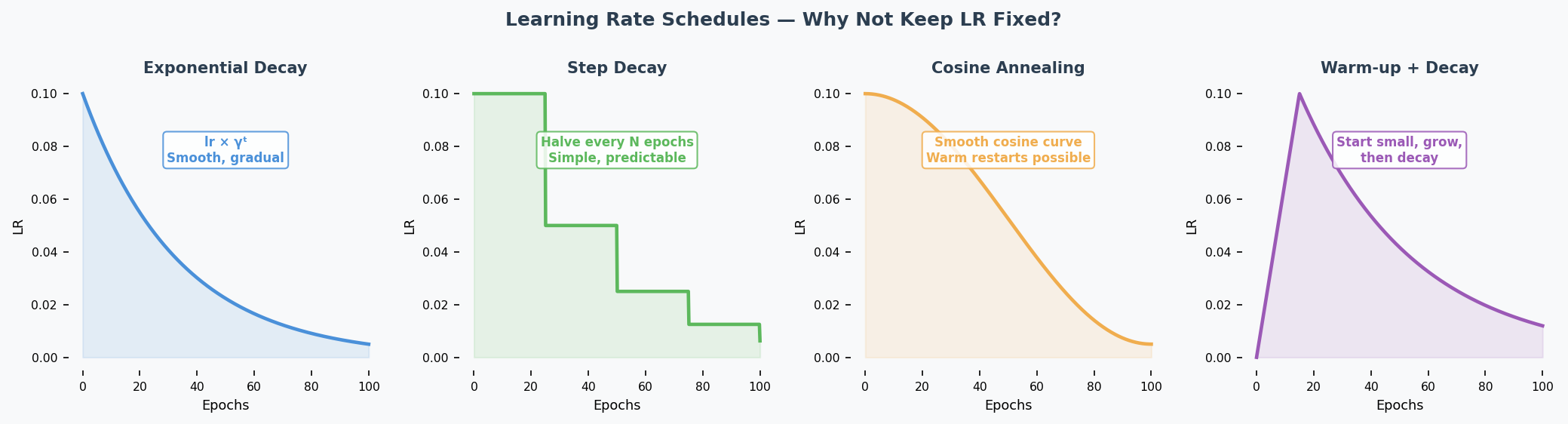

What: Change the learning rate during training instead of keeping it fixed.

Why: Start with large steps (explore quickly), then shrink steps (fine-tune near optimum(最优点)).

| Schedule | How It Works | Benefit |

|---|---|---|

| Exponential decay(指数衰减) | $lr_t = lr_0 \times \gamma^t$ | Smooth, gradual decrease |

| Step decay(阶梯衰减) | Halve lr every N epochs | Simple, predictable |

| Cosine annealing(余弦退火) | lr follows cosine curve | Warm restarts possible |

| Warmup(预热) | Start small, increase, then decrease | Avoids early instability |

Exam answer (1 example is enough): "Exponential learning rate decay reduces the lr as training progresses. This is beneficial because it allows taking large steps initially to move quickly towards an optimum, then smaller steps to avoid overshooting it."

Momentum(动量) (2024 Q4.2)

Analogy: Imagine pushing a ball downhill. Without momentum, the ball moves exactly where the current slope points — every tiny bump changes its direction. With momentum, the ball builds up speed and rolls smoothly past small bumps, heading in the general downhill direction.

Mechanism: Instead of updating weights(权重) using ONLY the current gradient(梯度), momentum keeps a running average of past gradients:

$$v_t = \beta \cdot v_{t-1} + (1-\beta) \cdot \nabla L$$ $$w = w - lr \cdot v_t$$

Where $\beta$ (typically 0.9) controls how much past gradients matter.

Effects:

- Smooths updates: Averages out noisy gradients → more stable direction

- Accelerates convergence(加速收敛): Builds up speed in consistent downhill directions

- Escapes shallow local minima(局部最小值): Momentum carries the ball through small bumps

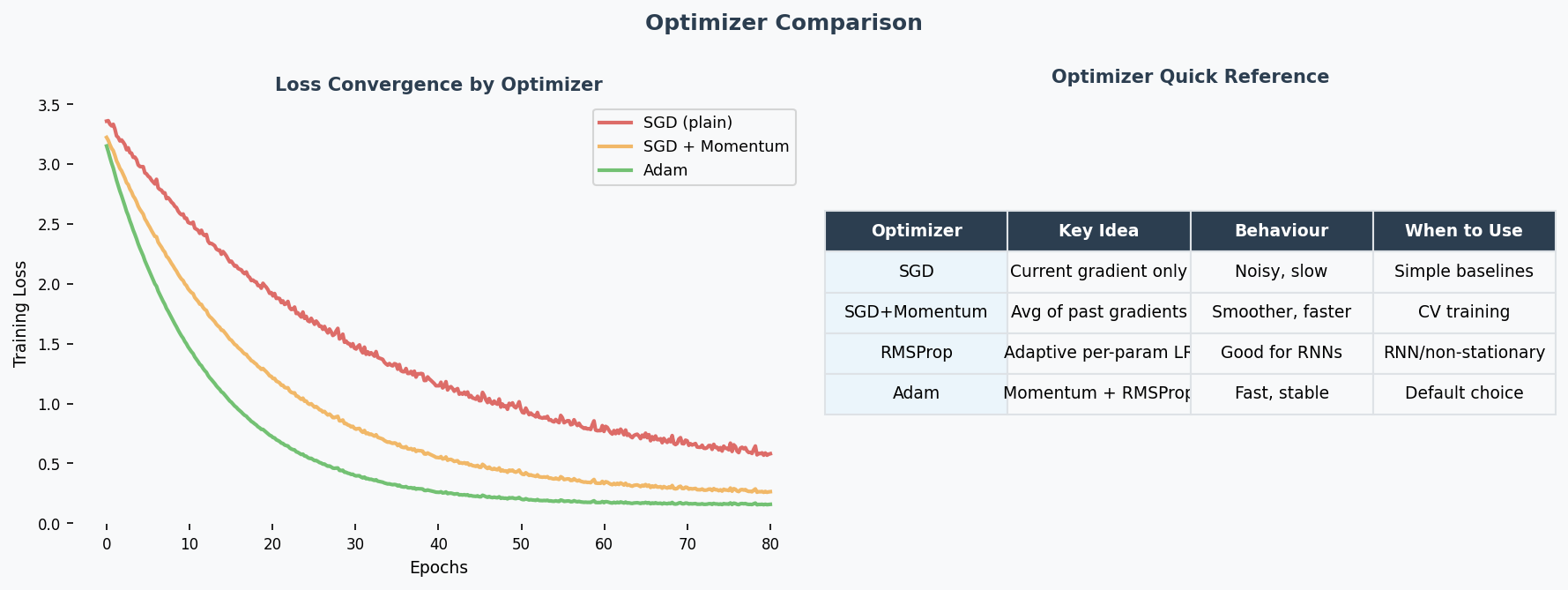

Key Optimizers Quick Reference

| Optimizer | Mechanism | When to Use |

|---|---|---|

| SGD(随机梯度下降) | Fixed learning rate, uniform for all parameters | Baseline; simple problems; when you want full control |

| SGD + Momentum(动量) | Accumulates past gradients (velocity term), smooths updates | Noisy gradients; saddle points(鞍点); most standard training |

| RMSProp | Adapts lr per-parameter using running average of squared gradients — divides by √(avg of grad²) | Non-stationary problems; RNNs; uneven gradient scales |

| Adam | Combines momentum (1st moment) + RMSProp (2nd moment) — adaptive(自适应) lr with momentum smoothing | Best default choice; fast convergence; works well out-of-the-box for most tasks |

Why Adam is the go-to optimizer (2024 Q7 — "better optimisers"): Adam adapts the learning rate for each parameter individually. Parameters with large gradients get smaller steps; parameters with small gradients get larger steps. This is especially helpful for deep networks where gradient magnitudes vary wildly across layers — it directly mitigates the vanishing/exploding gradient problem(梯度消失/梯度爆炸) at the optimiser level.

When SGD still wins: For very large-scale training (e.g., ImageNet), well-tuned SGD + momentum + lr schedule can generalise better than Adam. Adam sometimes converges to sharper minima, while SGD finds flatter (more generalisable) minima.

Past Exam Questions

2025 Q4 [4m]: Match 4 loss curves to learning rates 0.5, 0.1, 0.01, 0.001. Justify each. 2024 Q4 [4m]: (1) Give LR schedule example + why beneficial. (2) Explain momentum.

中文思维 → 英文输出

| 你脑中的中文想法 | 考试中应该写的英文 |

|---|---|

| loss在震荡上升,学习率太大 | "The loss curve diverges, indicating the learning rate is too high — the gradient updates overshoot the minimum." |

| loss下降很慢 | "The loss decreases very slowly, suggesting the learning rate is too small." |

| 学习率衰减好处 | "A learning rate schedule allows fast initial convergence while enabling fine-tuning near the optimum." |

| 动量能平滑更新 | "Momentum smooths the optimisation trajectory by maintaining an exponentially decaying average of past gradients." |

| Adam是最好的默认选择 | "Adam is an effective default optimiser as it adapts the learning rate per parameter." |

| 曲线先降后升,过拟合了 | "The validation loss initially decreases then increases, indicating the onset of overfitting." |

| 这条曲线收敛到一个比较高的值 | "The loss converges to a suboptimal value, suggesting the learning rate is slightly too high, causing the updates to overshoot." |

本章 Chinglish 纠正

| Chinglish (avoid) | Correct English |

|---|---|

| "The learning rate is too much" | "The learning rate is too high" |

| "Loss is going up means overfitting" | "A diverging loss indicates the learning rate is too high, not overfitting" |

| "Adam is the best optimizer" | "Adam is generally an effective default choice" (hedge appropriately in academic writing) |

| "The curve is vibrating" | "The loss curve oscillates" |

| "Learning rate should be decay" | "A learning rate schedule should be applied" |

| "Momentum can help the speed" | "Momentum accelerates convergence by smoothing the gradient updates" |

Whiteboard Self-Test

- Can you draw 4 loss curves for different learning rates and label each?

- Can you explain why a diverging loss curve means the lr is too high?

- Can you name one LR schedule and explain why it helps?

- Can you explain momentum in your own words (not just the formula)?

Regularisation & Batch Normalisation

Exam Importance

HIGH | Tested directly (Practice Q5) and indirectly in every Design Choices question

Feynman Draft

Imagine you're studying for an exam. Overfitting(过拟合) is like memorising the textbook word-for-word — you ace the practice test but fail the real exam because you memorised answers instead of understanding concepts.

Regularisation(正则化) is like a study technique that forces you to actually understand: someone randomly covers parts of your notes (Dropout(随机失活)), or penalises you for writing overly complicated answers (L1/L2).

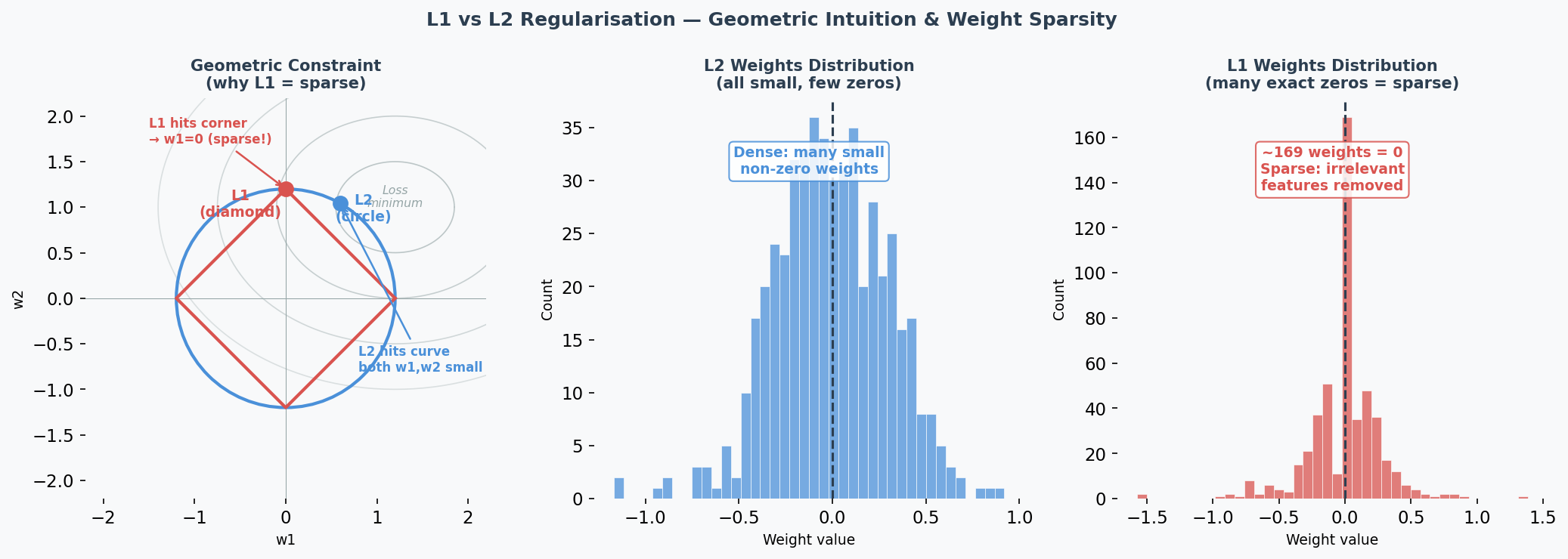

L1 and L2 Regularisation

Think of weights as "how much attention" the model pays to each feature.

-

L2 (Ridge / 岭回归): Adds penalty proportional to weight² → pushes ALL weights to be small but non-zero — this is called weight decay(权重衰减). Like telling someone "you can use all ingredients, but use them sparingly."

$$L_{total} = L_{original} + \lambda \sum w_i^2$$

-

L1 (Lasso): Adds penalty proportional to |weight| → pushes some weights to exactly 0. Like telling someone "pick only the most important ingredients and ignore the rest." Creates sparse(稀疏) models — performing automatic feature selection(特征选择).

$$L_{total} = L_{original} + \lambda \sum |w_i|$$

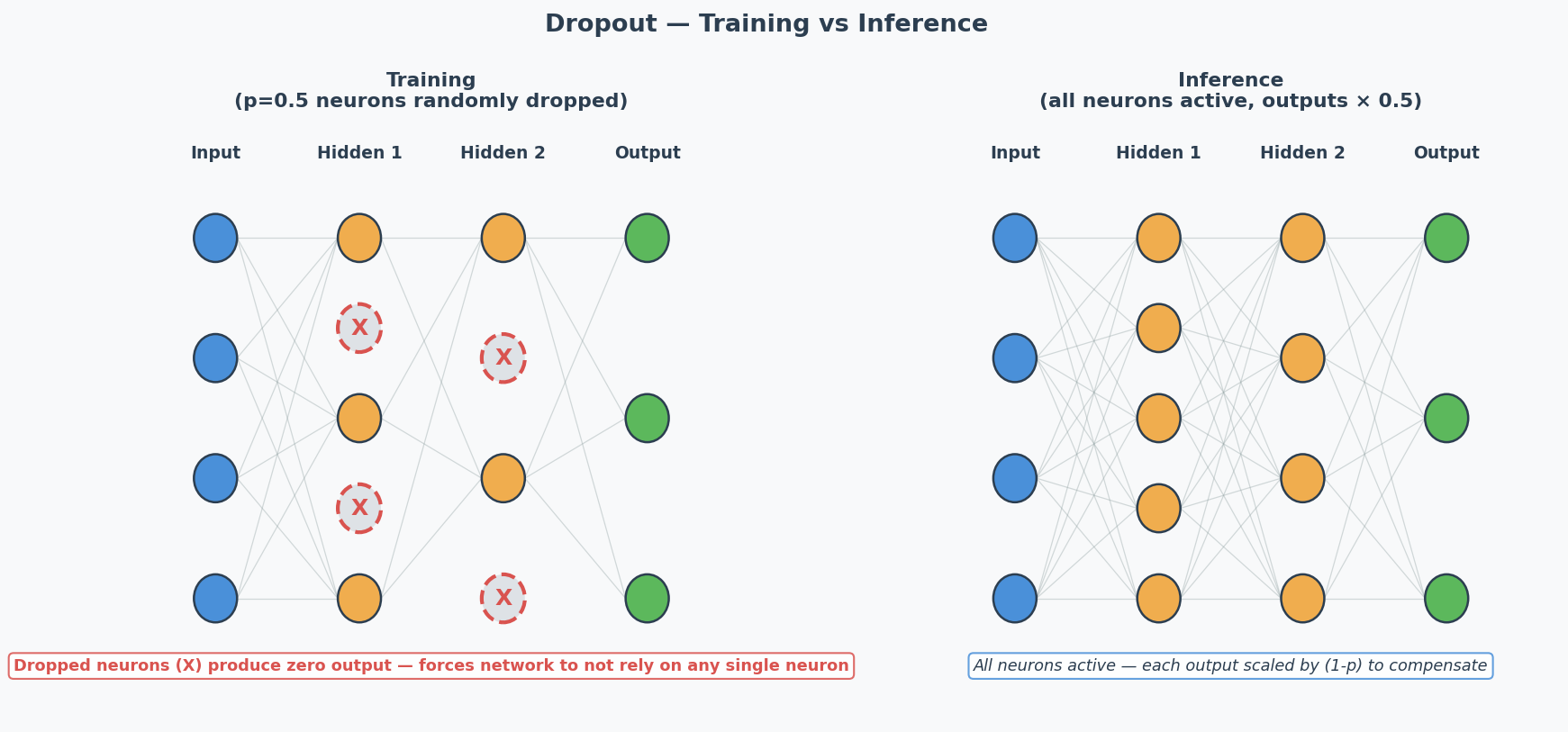

Dropout

During training, randomly "turn off" neurons with probability $p$ (typically 0.5). Forces the network to learn redundant representations — no single neuron can be relied on.

Key: Dropout is ONLY active during training. During inference, all neurons are used (but outputs are scaled by 1-p to compensate).

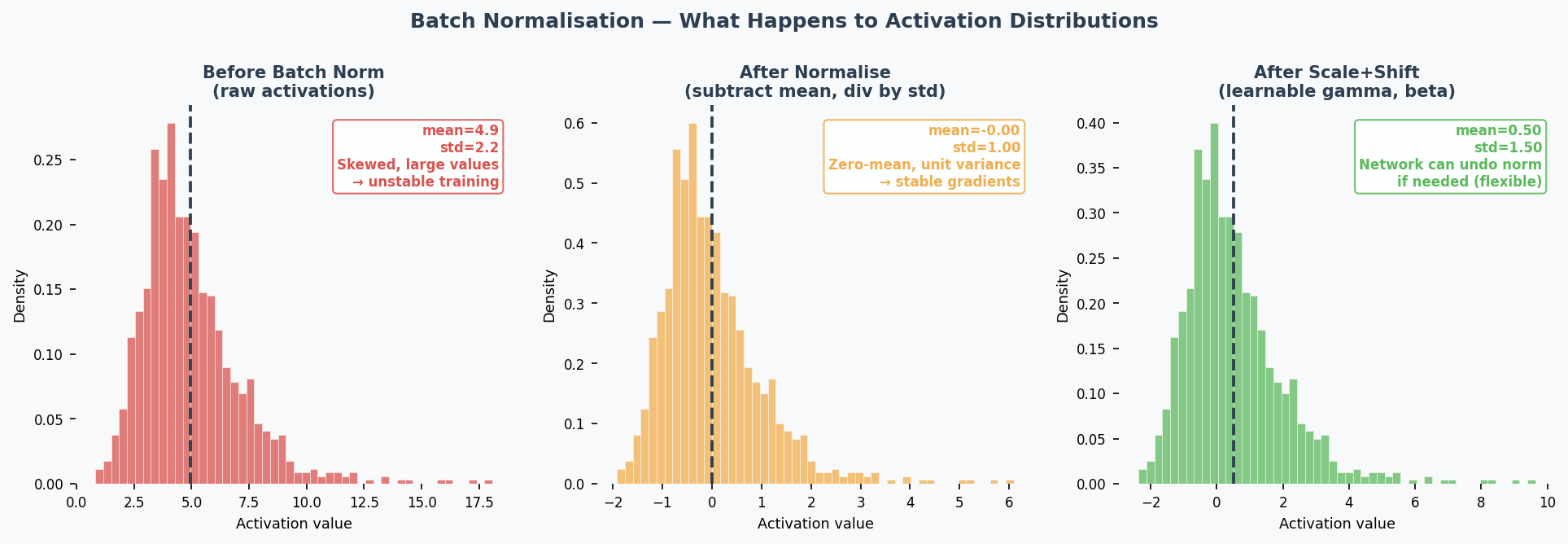

Batch Normalisation(批量归一化) (Practice Q5 — 5 marks)

What: Normalise(归一化) the inputs to each layer by subtracting mean and dividing by std of the current mini-batch(小批量).

$$\hat{x} = \frac{x - \mu_{batch}}{\sqrt{\sigma^2_{batch} + \epsilon}}$$

Then apply learnable scale ($\gamma$) and shift ($\beta$): $y = \gamma \hat{x} + \beta$

4 Effects (know at least 2 for the exam):

| Effect | Explanation |

|---|---|

| Speeds up training(加速训练) | Keeps activations(激活值) in a good range → gradients stay healthy → can use larger learning rates |

| Reduces vanishing/exploding gradients(减少梯度消失/爆炸) | Normalisation prevents activations from becoming extremely small or large |

| Regularisation effect(正则化效果) | Mini-batch statistics add noise to activations → acts like implicit regularisation → reduces overfitting |

| Reduces sensitivity to weight initialisation(降低对权重初始化的敏感性) | Bad initial weights would create extreme activations → batch norm corrects this automatically |

Common Misconception: "Batch norm is just standardisation." No — it also has learnable parameters ($\gamma$, $\beta$) that let the network undo the normalisation if that's beneficial. And the normalisation per mini-batch introduces noise that has a regularising effect.

Core Intuition: Regularisation = purposely limiting model complexity to prevent memorisation and force generalisation.

When to Use What (Design Choices Context)

| Technique | Fights | Don't Use When |

|---|---|---|

| L2 regularisation | Overfitting | Underfitting |

| L1 regularisation | Overfitting | Underfitting |

| Dropout | Overfitting | Underfitting |

| Batch normalisation | Various (speeds training, mild regularisation) | — (almost always helps) |

| Early stopping | Overfitting | Underfitting |

| Data augmentation | Overfitting | — |

Early Stopping(提前停止)

What: Monitor validation loss during training. When it stops improving for $N$ consecutive epochs (patience), stop training — even if training loss is still decreasing.

Why it works: The point where validation loss starts rising is exactly the point where the model begins memorising training noise. Stopping there gives you the best generalisation.

In practice: Save a checkpoint of model weights at each validation improvement. When patience runs out, roll back to the best checkpoint.

L1 Sparsity(稀疏性) vs L2 Shrinkage(收缩) — Why the Difference?

Geometric intuition: L1's constraint region is a diamond (corners touch axes); L2's is a circle. The optimal point is where the loss contour(损失等高线) meets the constraint boundary. The diamond's sharp corners align with axes → weights are pushed to exactly 0. The circle has no corners → weights are pushed toward 0 but never reach it.

Practical consequence:

- Use L1 when you suspect many features are irrelevant (automatic feature selection)

- Use L2 when all features are somewhat useful (just reduce their magnitudes)

- The hyperparameter(超参数) λ controls regularisation strength(正则化强度): higher λ = stronger penalty. If λ is too high → underfitting(欠拟合) (weights too constrained); too low → minimal regularisation effect.

Critical exam trap: If the model is underfitting (train=val=low), adding regularisation makes it WORSE by further constraining the model.

Past Exam Questions

Practice Q5 [5m]: Explain 2 effects of batch normalisation (2 marks each: name + explanation). 2024 Q2: L2 regularisation as a suggestion for overfitting → YES, explain why. Practice Q3: Dropout as a suggestion for underfitting → NO, explain why. 2025 Q2b: Suggest changes for overfitting → regularisation is a valid answer.

中文思维 → 英文输出

| 你脑中的中文想法 | 考试中应该写的英文 |

|---|---|

| L2让权重变小 | "L2 regularisation penalises large weights, encouraging the model to learn a simpler, more generalisable representation." |

| L1让一些权重变成0 | "L1 regularisation drives some weights to exactly zero, performing automatic feature selection." |

| Dropout让网络不依赖某个神经元 | "Dropout prevents co-adaptation by randomly deactivating neurons, forcing the network to learn distributed representations." |

| Batch norm加速训练 | "Batch normalisation speeds up training by keeping activations in a stable range, allowing higher learning rates." |

| 正则化不能解决欠拟合 | "Regularisation constrains model complexity, which helps with overfitting but worsens underfitting." |

| 正则化强度太大了 | "Excessive regularisation over-constrains the model, leading to underfitting." |

| L1能做特征选择 | "L1 regularisation induces sparsity, effectively performing feature selection by eliminating irrelevant weights." |

本章 Chinglish 纠正

| Chinglish (avoid) | Correct English |

|---|---|

| "Dropout can prevent the overfit" | "Dropout helps prevent overfitting" |

| "Batch norm makes training more faster" | "Batch normalisation accelerates training" |

| "The regularisation is too strong so the model is underfit" | "Excessive regularisation over-constrains the model, leading to underfitting" |

| "L1 makes some weight become zero" | "L1 regularisation drives certain weights to exactly zero" |

| "Batch norm is just standardisation" | "Batch normalisation normalises activations per mini-batch, with learnable parameters and an implicit regularisation effect" |

| "Early stopping is stop early" | "Early stopping halts training when validation performance stops improving" |

Whiteboard Self-Test

- Can you explain L2 regularisation in one sentence?

- Can you explain why dropout doesn't help underfitting?

- Can you list 4 effects of batch normalisation?

- Can you explain the regularisation effect of batch norm (why mini-batch noise helps)?

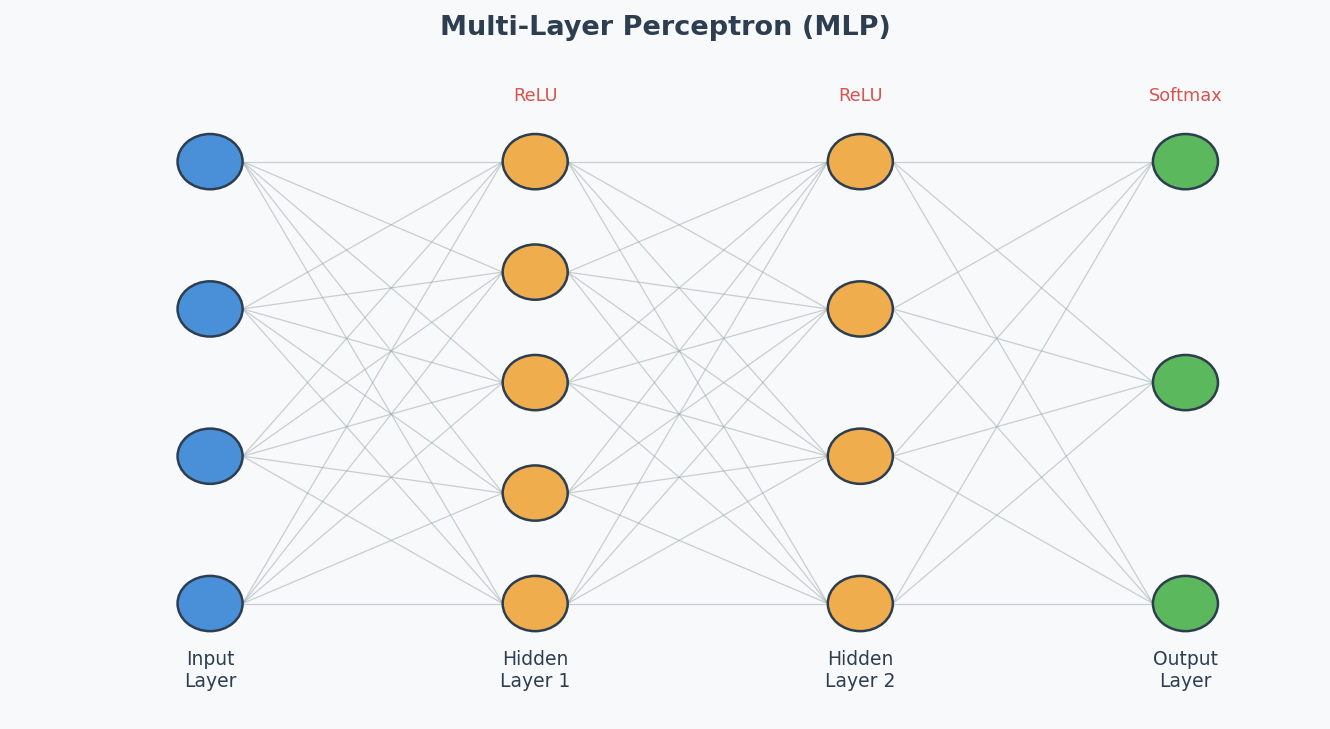

MLP, Activation Functions & DNN Training

Exam Importance

MED | 2025 Q3 (activation functions), 2024 Q7 (DNN training challenges)

Feynman Draft: Neural Networks

Imagine a team of workers in a factory assembly line. Each worker receives inputs, does a simple calculation (multiply by a weight, add a bias), and passes the result through a "decision gate" (activation function(激活函数)) to the next worker.

One worker can only draw a straight line to separate things. But stack many workers in layers, and they can draw incredibly complex boundaries — that's a Deep Neural Network (DNN)(深度神经网络).

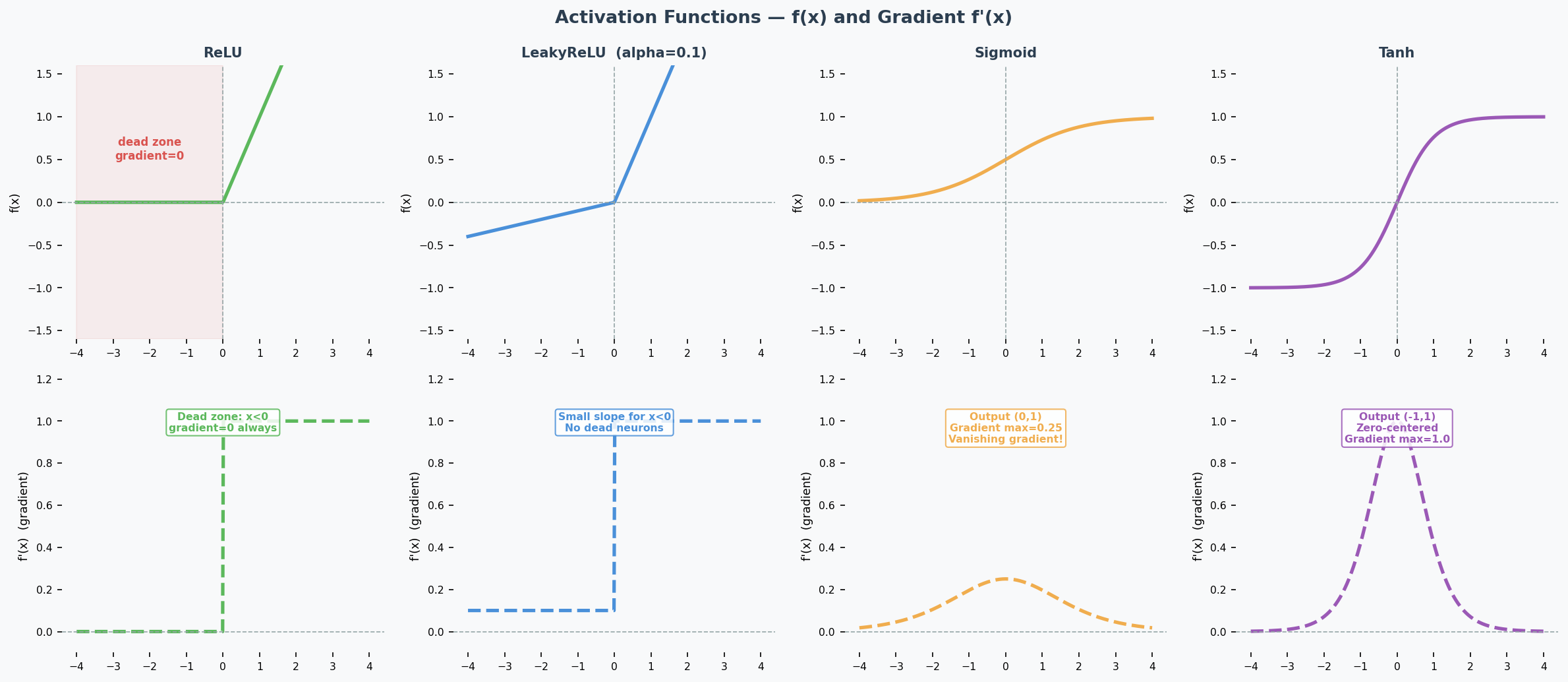

Activation Functions (2025 Q3)

ReLU and the Dying ReLU Problem

ReLU (Rectified Linear Unit): $f(x) = \max(0, x)$

The problem: If a neuron's input is always negative, ReLU outputs 0 and its gradient is also 0. The neuron stops learning permanently — this is the Dying ReLU Problem(神经元死亡问题). Look at the gradient plot above: the flat zero region on the left is the dead zone.

LeakyReLU (The Fix)

$$\text{LeakyReLU}(x) = \begin{cases} x & \text{if } x > 0 \ \alpha x & \text{if } x \leq 0 \end{cases}$$

How it helps: The small slope $\alpha$ (typically 0.01–0.1) means the gradient is never zero — dead neurons can recover.

Output Activation(输出激活函数): Sigmoid vs Softmax (2025 Q3b)

| Scenario | Activation | Why |

|---|---|---|

| Multi-class(多分类) (exactly 1 label) | Softmax | Outputs sum to 1 → probability distribution over classes |

| Multi-label(多标签) (multiple labels possible) | Sigmoid | Each output independently between 0 and 1 |

| Binary classification | Sigmoid (1 output) or Softmax (2 outputs) | Both work |

| Regression | Linear (none) | Unbounded continuous output |

2025 Q3b scenario: Manufacturing quality control — single image may contain multiple anomaly types simultaneously. This is multi-label → use sigmoid, because each anomaly is predicted independently.

Common Misconception: "Always use softmax for classification." Only when it's MULTI-CLASS (one label). For multi-label, softmax is wrong because it forces outputs to sum to 1 — detecting one anomaly would lower the probability of detecting others.

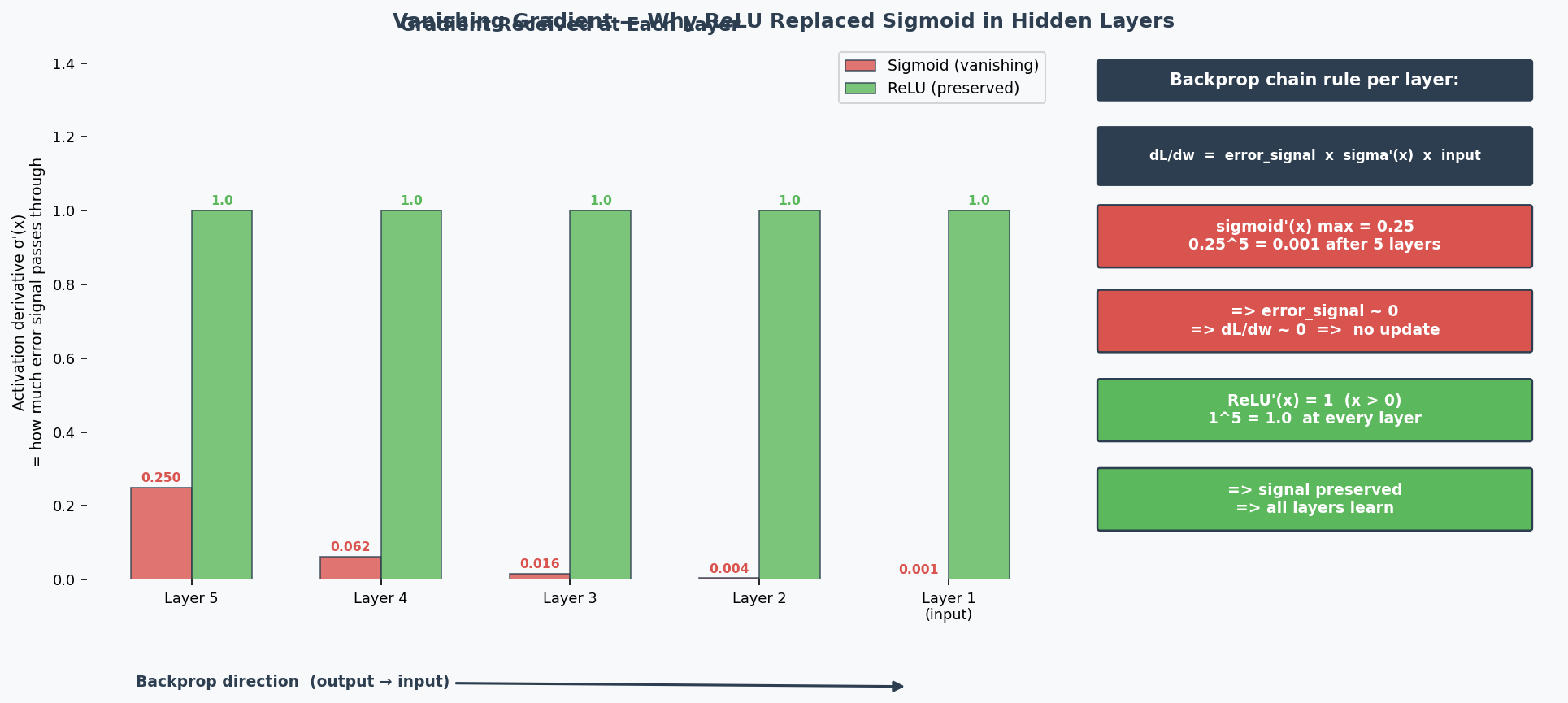

Why Deep Networks Are Hard to Train (2024 Q7)

The Problems:

-

Vanishing Gradients(梯度消失): During backpropagation(反向传播), gradients are multiplied through many layers via the chain rule. With sigmoid activation, the maximum derivative is only 0.25 — so gradients are multiplied by ≤0.25 at each layer. After 6 layers: $0.25^6 ≈ 0.0002$ — the gradient reaching early layers is nearly 0, so they can't learn. ReLU fixes this because its derivative is exactly 1 for positive inputs, preserving gradient magnitude perfectly.

-

Exploding Gradients(梯度爆炸): Same multiplication, but with values > 1 → gradient grows exponentially → training becomes unstable.

-

Overfitting(过拟合): More parameters = more capacity to memorise training data noise.

-

Longer training time: More computations per forward/backward pass.

The Solutions (name 2 for the exam):

| Solution | How It Helps |

|---|---|

| Batch normalisation(批归一化) | Keeps activations in healthy range → gradients don't vanish/explode |

| Skip connections(跳跃连接) (ResNet) | Gradient flows directly through shortcut → bypasses vanishing gradient problem |

| LSTM/GRU (for sequences) | Gating mechanisms control information flow → mitigate vanishing gradients |

| Better optimisers (Adam) | Adaptive learning rates per parameter → more stable training |

| Proper weight initialisation (He, Xavier) | Prevents activations from starting too large or small |

| Gradient clipping(梯度裁剪) | Caps gradient magnitude → prevents explosion |

Weight Initialisation(权重初始化): Why Zero = Bad (Practice Q3)

If all weights are 0, then:

- All neurons compute the same output (0)

- All gradients are the same

- All weights update by the same amount

- All neurons remain identical forever → symmetry problem(对称性问题)

The network is essentially a single neuron repeated N times. It can't learn different features.

Correct initialisation: Random values, properly scaled:

- Xavier/Glorot: For sigmoid/tanh: $\text{Var}(w) = 1/n_{in}$

- He: For ReLU: $\text{Var}(w) = 2/n_{in}$

Why two different methods? Each is designed to keep the variance of activations stable across layers for a specific activation function:

- Xavier assumes the activation is roughly linear around 0 (true for sigmoid/tanh near their centre). It balances forward and backward signal variance.

- He accounts for the fact that ReLU kills half the inputs (outputs 0 for negative), so it doubles the variance to compensate. Using Xavier with ReLU → activations shrink to 0 in deep networks. Using He with sigmoid → activations may saturate.

Rule of thumb: Match initialisation to activation — He for ReLU/LeakyReLU, Xavier for sigmoid/tanh.

Architecture Diagram

中文思维 → 英文输出

| 中文思路 | 考试英文表达 |

|---|---|

| ReLU的梯度在负数时是0,神经元就死了 | "When inputs are consistently negative, ReLU outputs zero with a zero gradient, causing the neuron to stop learning permanently — this is the dying ReLU problem." |

| LeakyReLU给负数一个小斜率来修复 | "LeakyReLU introduces a small positive slope for negative inputs, ensuring the gradient is never zero and allowing dead neurons to recover." |

| 多标签用sigmoid,多分类用softmax | "For multi-label classification, sigmoid is appropriate because each output is independent. Softmax is unsuitable as it forces outputs to sum to 1." |

| 深度网络难训练因为梯度消失 | "Deep networks are difficult to train because gradients are multiplied through many layers via the chain rule, causing them to vanish exponentially." |

| 权重全初始化为0有对称性问题 | "Zero initialisation creates a symmetry problem — all neurons compute identical outputs and receive identical gradients, making them unable to learn different features." |

本章 Chinglish 纠正

| Chinglish (避免) | 正确表达 |

|---|---|

| "The neuron is dead" | "The neuron has become inactive due to the dying ReLU problem" |

| "Softmax is for classification" | "Softmax is for multi-class classification; sigmoid is for multi-label" |

| "Deep network is hard to train" | "Deep networks present training challenges, particularly vanishing gradients" |

Whiteboard Self-Test

- Can you explain the dying ReLU problem and how LeakyReLU fixes it?

- When do you use sigmoid vs softmax for the output layer?

- Can you name 2 reasons why deep networks are hard to train?

- Can you name 2 solutions that make deep training easier?

- Why is initialising weights to 0 a bad idea?

CNN — Convolutional Neural Networks

Exam Importance

MUST | Every exam has a CNN calculation question (2025 Q6, 2024 Q6, Practice Q7)

Feynman Draft

Imagine you're looking at a photo and trying to find a cat. You don't examine every pixel individually — your eyes scan small regions looking for patterns: edges, then curves, then ears, then a face. A CNN works exactly like this.

A CNN(卷积神经网络) slides small "windows" (filters/kernels(卷积核/滤波器)) across the image. Each filter detects a specific pattern:

- Layer 1 filters: detect simple edges (horizontal, vertical, diagonal)

- Layer 2 filters: combine edges into shapes (corners, curves)

- Layer 3+ filters: combine shapes into objects (ears, eyes, faces)

After sliding filters, we shrink the image with pooling(池化) (like zooming out) to focus on "where" a pattern exists rather than its exact pixel position.

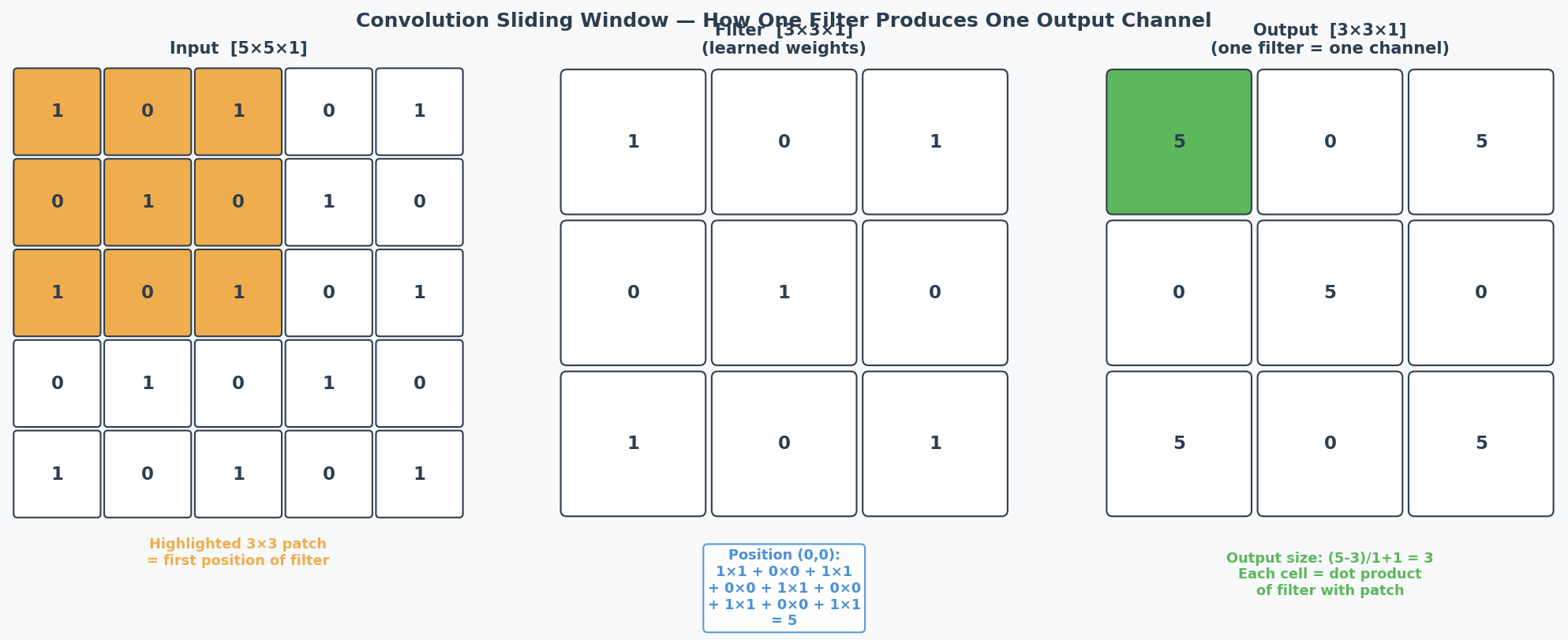

Toy Example: A 5x5 image with a 3x3 filter(特征图 = feature map)

Common Misconception: "More filters = bigger output feature map." NO — more filters increases the DEPTH (channels), not the spatial dimensions. Spatial size depends on kernel size, stride, and padding.

Core Intuition: CNN = sliding pattern detector. Shallow layers find edges, deep layers find objects.

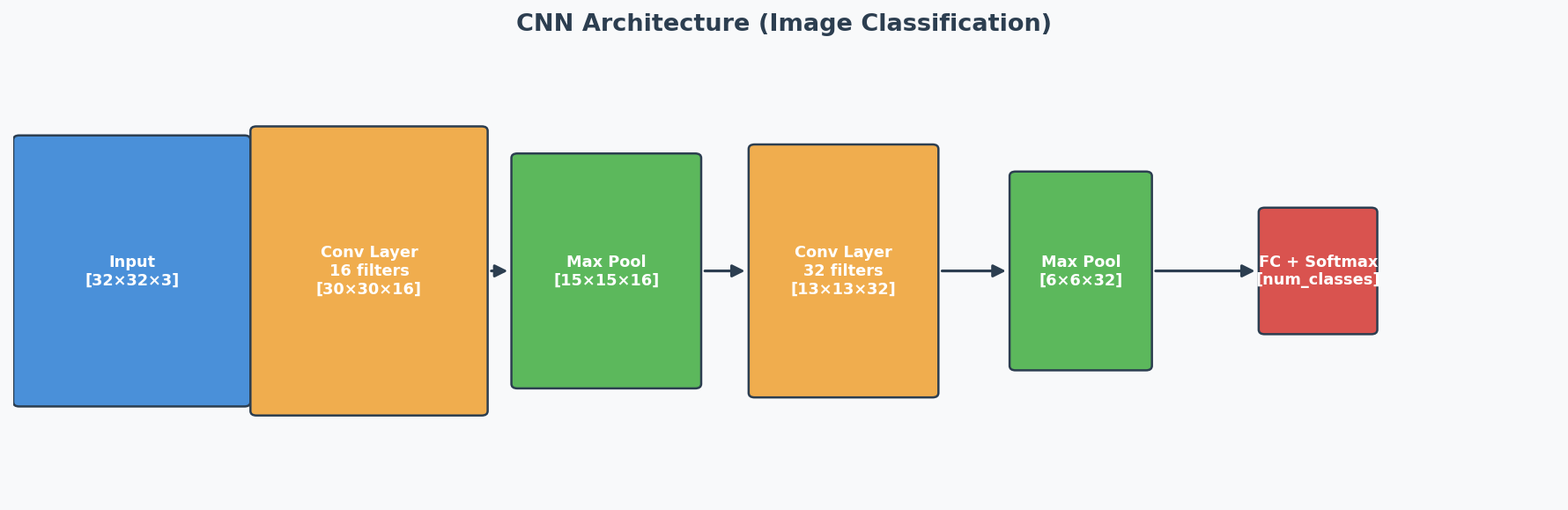

Architecture Overview

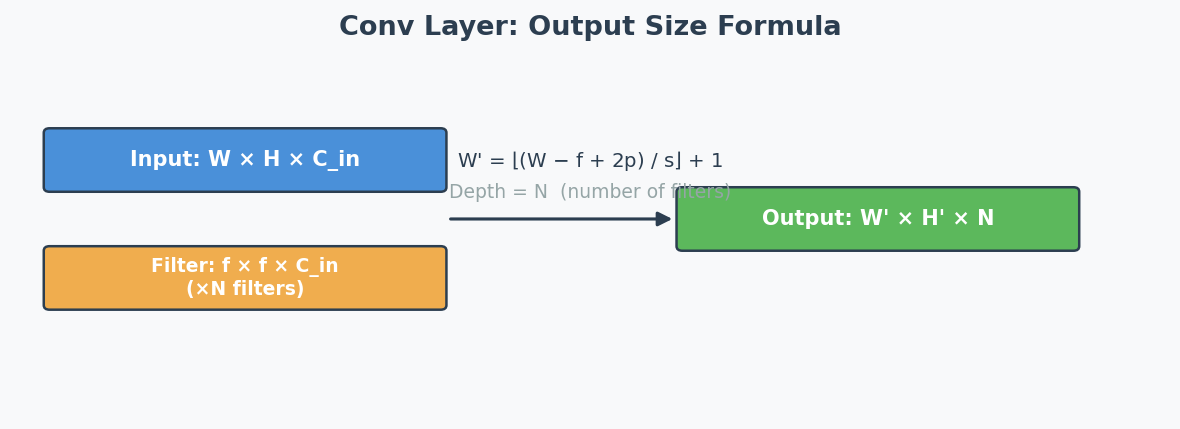

The Two Formulas You MUST Memorize

Formula 1: Convolution Output Size

$$\text{output} = \left\lfloor \frac{n + 2p - f}{s} \right\rfloor + 1$$

Where:

- $n$ = input spatial dimension (height or width)

- $p$ = padding(填充) (valid = 0, same = computed so output = input)

- $f$ = filter/kernel size

- $s$ = stride(步幅)

Output depth = number of filters $n'_C$

Formula 2: Pooling Output Size

$$\text{output} = \left\lfloor \frac{n - f}{s} \right\rfloor + 1$$

Where:

- $n$ = input spatial dimension

- $f$ = pool kernel size

- $s$ = stride (usually = f)

Output depth = same as input depth (pooling doesn't change channels!)

Key difference: Pooling has NO padding (p=0 always).

Padding Types

| Type | Meaning | Formula Effect |

|---|---|---|

| Valid padding(无填充) | No padding, p = 0 | Output shrinks |

| Same padding(等尺寸填充) | Pad so output spatial size = input spatial size | $p = (f-1)/2$ when $s=1$ |

Same padding shortcut: When stride = 1 and same padding → output spatial dimensions = input spatial dimensions. Just change the depth to the number of filters.

Worked Example: 2025 Q6 (The Exact Exam Question)

Architecture:

- Input: [35, 35, 3]

- Conv1: 10 filters, kernel=7, stride=2, valid padding

- MaxPool1: kernel=2, stride=2

- Conv2: 20 filters, kernel=3, stride=1, same padding

- MaxPool2: kernel=2, stride=2

- FC layer: ? inputs, 10 outputs

Step-by-step:

Layer: Conv1 (valid, p=0)

Input: [35, 35, 3]

Calc: (35 + 2*0 - 7) / 2 + 1 = 28/2 + 1 = 14 + 1 = 15

Output: [15, 15, 10] ← 10 from number of filters

Layer: MaxPool1

Input: [15, 15, 10]

Calc: (15 - 2) / 2 + 1 = 13/2 + 1 = 6.5 + 1 = 7.5 → floor = 7

Output: [7, 7, 10] ← depth unchanged

Layer: Conv2 (same padding, stride=1)

Input: [7, 7, 10]

Calc: same padding + stride 1 → spatial stays same

Output: [7, 7, 20] ← 20 from number of filters

Layer: MaxPool2

Input: [7, 7, 20]

Calc: (7 - 2) / 2 + 1 = 5/2 + 1 = 2.5 + 1 = 3.5 → floor = 3

Output: [3, 3, 20] ← depth unchanged

Flatten(展平): 3 × 3 × 20 = 180

Answer: (ii) 180 ✓

Worked Example: 2024 Q6

1) Conv: Input [50,50,5], ten 5×5×5 filters, stride=3, padding=0

(50 + 2*0 - 5) / 3 + 1 = 45/3 + 1 = 15 + 1 = 16

Output: [16, 16, 10]

2) AvgPool: Input [50,50,5], 5×5 filter, stride=5

(50 - 5) / 5 + 1 = 45/5 + 1 = 9 + 1 = 10

Output: [10, 10, 5] ← depth stays 5!

3) MaxPool: Same answer as AvgPool! Max vs average only changes VALUES, not dimensions.

Worked Example: Practice Q7

Given: Input [21,21,3] → Conv (no padding, s=2) → Output [9,9,100]

Find: n'C, f, nC

- n'C = 100 (depth of output = number of filters)

- f: (21 + 0 - f)/2 + 1 = 9 → (21 - f)/2 = 8 → 21 - f = 16 → f = 5

- nC = 3 (depth of input = filter width/depth)

Edge detection question: Early layers (close to input) detect edges because they see small local regions (receptive field(感受野)). Deeper layers combine these into complex features (shapes → objects).

Key Facts to Remember

| Fact | Detail |

|---|---|

| Conv changes depth | Output depth = number of filters |

| Pooling preserves depth | Output depth = input depth |

| Max vs Avg pooling | Same output SIZE, different values |

| Valid padding | p = 0, output shrinks |

| Same padding (s=1) | Output spatial size = input spatial size |

| Floor function | When division isn't exact, round DOWN |

| Filter depth | Must match input depth (filter is 3D: f × f × input_channels) |

中文思维 → 英文输出

| 中文思路 | 考试英文表达 |

|---|---|

| 先写公式再代入数字 | "Using the formula: output = floor((n + 2p - f) / s) + 1, substituting n=35, p=0, f=7, s=2: (35-7)/2 + 1 = 15." |

| 池化不改变深度 | "Pooling reduces the spatial dimensions while preserving the depth (number of channels)." |

| 最大池化和平均池化输出尺寸一样 | "Max pooling and average pooling produce outputs with the same dimensions; only the values differ." |

| Same padding时空间尺寸不变 | "With same padding and stride 1, the output spatial dimensions match the input." |

| 输出深度等于滤波器数量 | "The depth of the output equals the number of filters applied." |

本章 Chinglish 纠正

| Chinglish (避免) | 正确表达 |

|---|---|

| "The output size is 15 times 15 times 10" | "The output dimensions are [15, 15, 10]" |

| "Pooling will change the channel" | "Pooling does not change the number of channels — only spatial dimensions are reduced" |

| "The filter number decides the deep" | "The number of filters determines the output depth (channels)" |

Whiteboard Self-Test

- Can you write both formulas from memory?

- Can you compute: Input [28,28,1] → Conv(16 filters, k=5, s=1, valid) → ?

- Can you compute: [24,24,16] → MaxPool(k=2, s=2) → ?

- What's the difference between valid and same padding?

- Why does max pooling not change the depth?

- Which layers detect edges? Why?

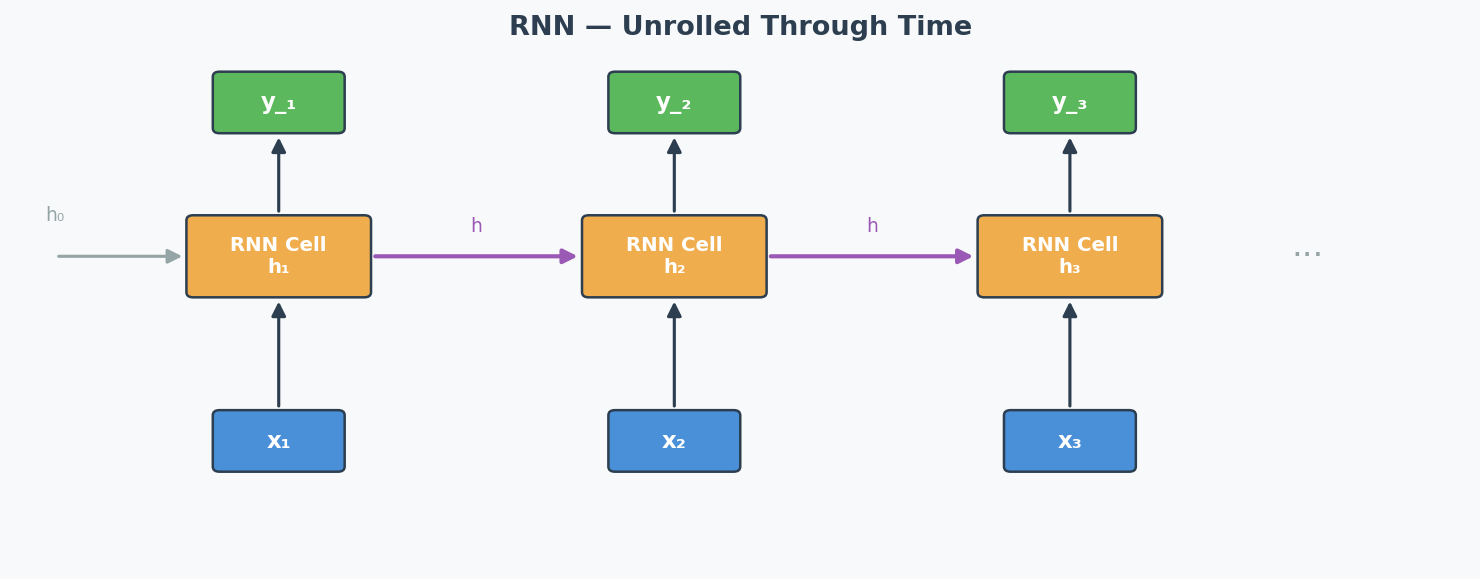

RNN / LSTM / GRU — Recurrent Neural Networks

Exam Importance

MED | Tested in 2024 Q5 (alongside Transformer comparison) — 4 marks

Feynman Draft

Imagine you're watching a movie and trying to understand the plot. You don't forget everything after each scene — you carry a running memory of what happened before. When a character says "he went back to the castle," you remember who "he" is and which castle from earlier scenes.

That's exactly what an RNN (Recurrent Neural Network)(循环神经网络) does. It processes a sequence (words, time steps, video frames) one element at a time, and passes a hidden state(隐藏状态) from one step to the next — like your running memory of the movie.

Input: x₁ x₂ x₃ x₄

↓ ↓ ↓ ↓

State: → [h₁] →→ [h₂] →→ [h₃] →→ [h₄] → output

Each box takes BOTH the current input AND the previous hidden state.

h₂ = f(W·h₁ + U·x₂ + b)

The Sequential Processing Trade-off (The Exact Exam Question — 2024 Q5):

Advantage: Because the RNN uses sequential processing(顺序处理), processing one step at a time, it naturally captures the order of the sequence. You don't need to tell it "this word comes first, that word comes second" — it inherently knows because it processes them in order. The sequential structure IS the ordering mechanism.

Drawback: Because each step MUST wait for the previous step's hidden state to finish, you cannot parallelise(并行化) the computation. For a sequence of length 1000, you need 1000 sequential operations. This makes training very slow for long sequences.

Additionally, the hidden state must carry ALL information from the past through a single vector — for very long sequences, early information gets "washed out." This is related to the vanishing gradient problem(梯度消失问题).

Why Vanilla RNNs Struggle with Long Sequences

During backpropagation through time (BPTT)(时间反向传播), gradients are multiplied by the recurrent weight matrix at each time step:

$$\frac{\partial h_t}{\partial h_1} = \prod_{i=1}^{t-1} \frac{\partial h_{i+1}}{\partial h_i}$$

If these partial derivatives are < 1 → gradients vanish (early parts of the sequence get no learning signal) If they are > 1 → gradients explode (training becomes unstable)

Practical impact: A vanilla RNN trained on a 100-word sentence might "forget" what happened in the first few words by the time it reaches the end.

LSTM — Long Short-Term Memory

LSTM solves the vanishing gradient problem by adding gating mechanisms(门控机制) that control information flow:

┌──────────── Cell State (highway of information) ──────────────┐

│ │

│ ┌─────┐ ┌─────┐ ┌─────┐ │

│ │Forget│ │Input │ │Output│ │

│ │ Gate │ │ Gate │ │ Gate │ │

│ └──┬──┘ └──┬──┘ └──┬──┘ │

│ │ │ │ │

└───────┴────────────┴────────────┴──────────────────────────────┘

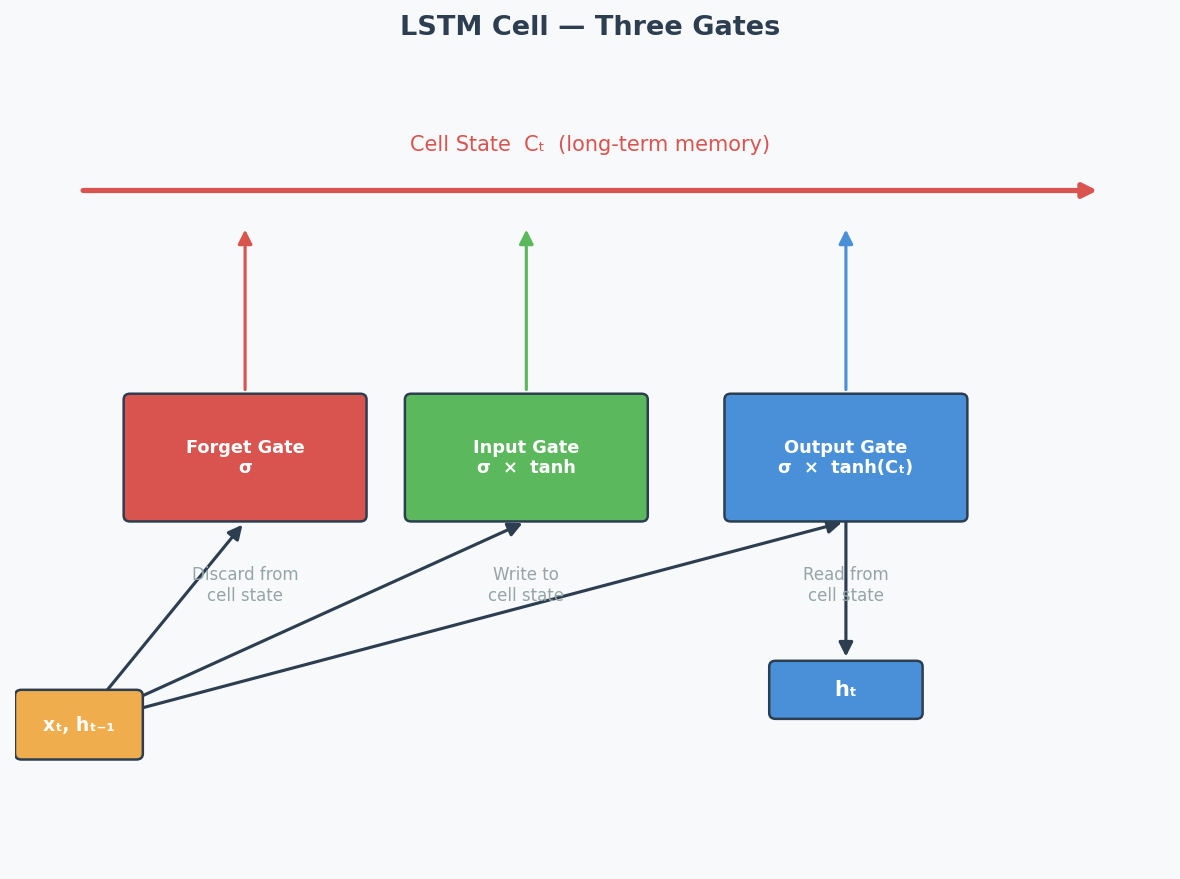

| Gate | What It Does | Analogy |

|---|---|---|

| Forget Gate(遗忘门) | Decides what old information to discard | "Should I forget the first scene?" |

| Input Gate(输入门) | Decides what new information to store | "Is this new scene important?" |

| Output Gate(输出门) | Decides what to output from the cell state | "What part of my memory is relevant now?" |

The cell state(细胞状态) acts like a conveyor belt — information can flow through unchanged if the gates allow it. This creates a direct path for gradients to flow back through time without being multiplied at each step → solves vanishing gradients.

GRU — Gated Recurrent Unit

GRU is a simplified version of LSTM with only 2 gates:

| Gate | What It Does |

|---|---|

| Reset Gate(重置门) | Controls how much past information to forget (similar to forget gate) |

| Update Gate(更新门) | Controls the balance between old state and new candidate (combines forget + input) |

GRU vs LSTM: GRU has fewer parameters → faster to train, sometimes performs just as well. LSTM is more expressive for complex long-range dependencies.

How Transformers Fix the RNN Problem (2024 Q5.2)

| Problem | RNN Approach | Transformer Solution |

|---|---|---|

| Sequence order | Implicit (process sequentially) | Explicit via positional encoding |

| Parallelisation | NOT possible (sequential dependency) | FULLY parallel (all positions at once) |

| Long-range dependencies | Difficult (vanishing gradients) | Direct connections via self-attention |

| Speed | Slow for long sequences | Fast (O(1) sequential operations, O(n²) total) |

The key answer for 2024 Q5.2:

- The Transformer processes ALL input positions simultaneously using embeddings (not sequentially) → enables parallel computation → much faster

- But this loses order information → solved by adding positional encoding to the embeddings

- Self-attention creates direct connections between any two positions → no vanishing gradient over distance

Exam Answer Template for 2024 Q5

(1) Why is sequential processing both an advantage and drawback?

"Sequential processing is an advantage because it naturally captures the order of the input sequence — each hidden state implicitly encodes position information based on the processing order. However, it is also a drawback because each step depends on the previous hidden state, making it impossible to parallelise computation. For long sequences, this leads to very slow training and inference times."

(2) How does the Transformer alleviate this?

"The Transformer architecture processes all input positions in parallel by creating embeddings for each token simultaneously, rather than processing them sequentially. This dramatically speeds up computation. However, since parallel processing loses positional information, the Transformer adds positional encoding to the embeddings to integrate information about the sequence order."

Architecture Diagrams

RNN Unrolled Through Time:

LSTM Cell — Three Gates:

中文思维 → 英文输出

| 中文思路 | 考试英文表达 |

|---|---|

| RNN按顺序处理是优点也是缺点 | "Sequential processing is both an advantage and a drawback: it naturally captures temporal order, but prevents parallelisation." |

| LSTM用门控解决梯度消失 | "LSTM mitigates vanishing gradients by introducing gating mechanisms that control information flow through a dedicated cell state." |

| Transformer用位置编码补回顺序信息 | "The Transformer compensates for the loss of order information by adding positional encoding to embeddings." |

| GRU比LSTM简单,参数少 | "GRU simplifies LSTM by combining the forget and input gates into a single update gate, reducing the number of parameters." |

| RNN不能并行所以慢 | "Sequential processing prevents parallelisation, making RNN training slow for long sequences." |

本章 Chinglish 纠正

| Chinglish (避免) | 正确表达 |

|---|---|

| "RNN can remember the before information" | "RNNs maintain a hidden state that carries information from previous time steps" |

| "LSTM has three gates to control the memory" | "LSTM uses three gates (forget, input, output) to regulate information flow through the cell state" |

| "Transformer is better than RNN in all ways" | "Transformers excel in most scenarios, but RNNs may be preferred for resource-constrained or streaming applications" |

Whiteboard Self-Test

- Can you draw the basic RNN unrolled diagram (input → hidden state → next step)?

- Can you explain sequential processing as BOTH advantage and drawback?

- Can you name the 3 gates in LSTM and what each does?

- Can you explain how the Transformer solves the parallelisation problem?

- Why do vanilla RNNs have trouble with long sequences?

Transformer & Attention Mechanism

Exam Importance

MUST | Every exam has a Transformer question (2025 Q5, 2024 Q5, Practice Q6)

Feynman Draft

Imagine you're reading a long book and someone asks: "What did the main character feel about the letter?"

You don't re-read every word. You skim for relevant parts — you pay more attention to sentences about the character and the letter, and less attention to descriptions of the weather. That's Attention(注意力机制).

Now imagine you have 8 friends, and each one reads the book looking for something different: one tracks emotions, one tracks characters, one tracks locations, one tracks time. Then they share notes. That's Multi-Head Attention(多头注意力)— multiple "perspectives" on the same input.

The Transformer's Big Idea:

RNNs read words one by one (like reading a book left to right, can't skip ahead). This is slow. The Transformer reads ALL words at once (like seeing the whole page), then uses Attention to figure out which words relate to which. Much faster.

But wait — if you see all words at once, you lose the order! "Dog bites man" ≠ "Man bites dog". Solution: add Positional Encoding(位置编码)— a signal that tells the model "this word is in position 1, this one is position 2..."

Toy Example: "The cat sat on the mat"

With attention, when processing "sat", the model assigns weights:

"The" → 0.05 (not very relevant)

"cat" → 0.60 (WHO sat? very relevant!)

"sat" → 0.10 (itself)

"on" → 0.05 (grammar word)

"the" → 0.05 (not very relevant)

"mat" → 0.15 (WHERE sat? somewhat relevant)

Common Misconception: "Transformers are just faster RNNs." No — they work fundamentally differently. RNNs process sequentially (maintaining hidden state). Transformers process all positions in parallel (using attention to find relationships).

Core Intuition: Attention = learned "relevance weighting" between all pairs of inputs, processed in parallel.

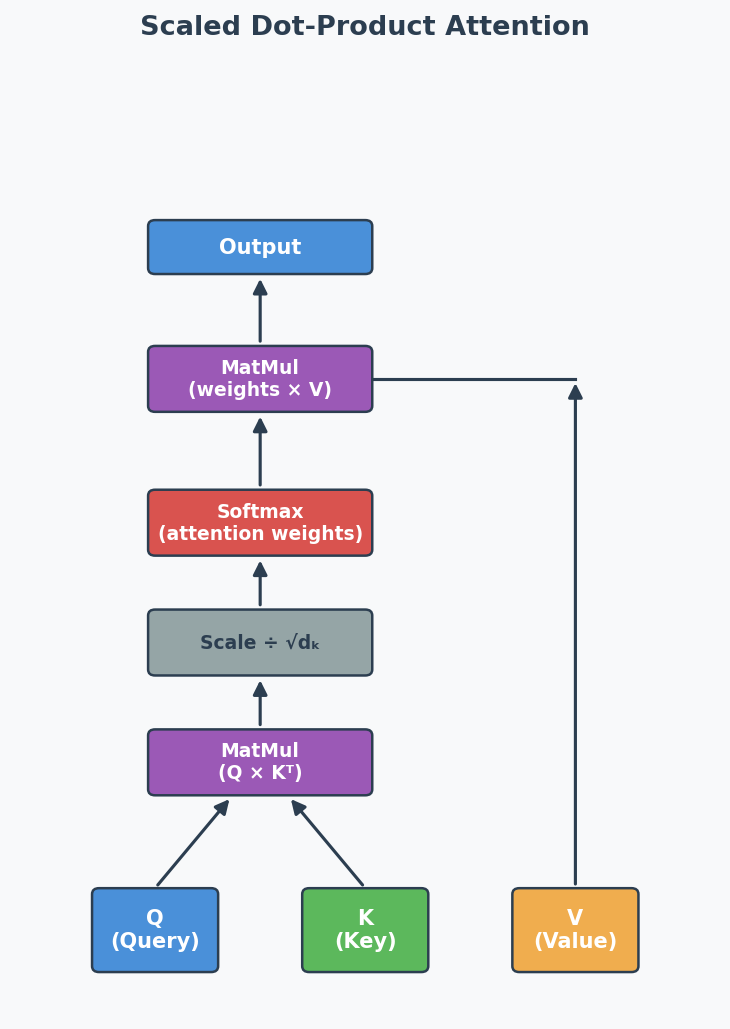

Formal Definition: Scaled Dot-Product Attention

Scaled Dot-Product Attention(缩放点积注意力):

$$\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right) V$$

Where:

- Q (Query): "What am I looking for?"

- K (Key): "What do I contain?"

- V (Value): "What information do I provide?"

- $d_k$: dimension of keys (scaling factor to prevent huge dot products)

The softmax creates attention weights (sum to 1) → multiply by V to get weighted combination.

Multi-Head Attention (考试高频)

Instead of one attention function, run h attention heads in parallel, each with its own learned Q, K, V weight matrices:

$$\text{MultiHead}(Q, K, V) = \text{Concat}(\text{head}_1, ..., \text{head}_h) W^O$$

Why multiple heads?

- Each head learns to focus on different aspects (syntax, semantics, position)

- Single head would have an averaging effect — blurs different types of relationships

- Multiple heads capture richer, more diverse patterns

Masked Attention in Decoder (2025 Q5a, 2024 Q5a)

What: In the decoder, when predicting token at position $t$, the attention is masked to prevent looking at positions $t+1, t+2, ...$

Why: During training, all tokens are available (teacher forcing(教师强迫)), but the model must learn to predict WITHOUT seeing the future. The mask sets future positions to $-\infty$ before softmax → attention weights become 0 for future tokens.

In plain English: It's like covering the right half of the answer sheet during an exam — you can only see what you've already written, not what comes next. This preserves the autoregressive(自回归) property: each prediction depends only on previous predictions.

Without mask: With mask:

"I love cats" "I love cats"

↕ ↕ ↕ → → → (can only look LEFT)

All attend to all Each token only attends to previous tokens

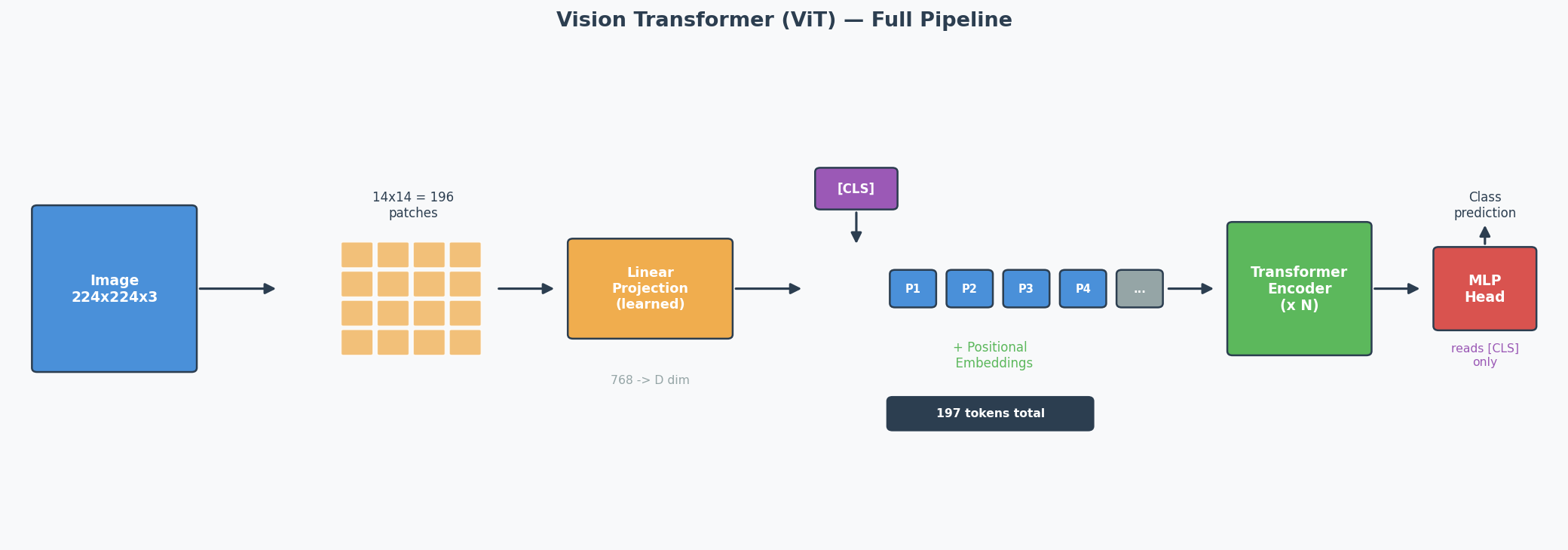

Vision Transformer (ViT) — Full Pipeline (2025 Q5b)

The Core Idea

CNNs use sliding filters to process images. ViT asks: what if we just cut the image into patches(图像块) and feed them into a standard Transformer? It turns out this works — and for large datasets, ViT matches or beats CNNs.

The ViT Pipeline (Step by Step)

Concrete Example: 224 × 224 image, patch size = 16 × 16

Step 1: Split into patches

224 / 16 = 14 patches per side → 14 × 14 = 196 patches total

Each patch is 16 × 16 × 3 (RGB) = 768 values

Step 2: Linear projection (patch embedding)

Each patch (768 values) → linearly projected to a D-dimensional vector

This is NOT just flattening — it's a learned linear layer

Output: 196 vectors of dimension D

Step 3: Prepend [CLS] token

Add one learnable vector at position 0

Sequence is now: [CLS], patch_1, patch_2, ..., patch_196

Total: 197 tokens

Step 4: Add positional embeddings

Each of the 197 positions gets a learnable positional embedding (added, not concatenated)

Without this: the model can't distinguish top-left patch from bottom-right

Step 5: Pass through Transformer encoder

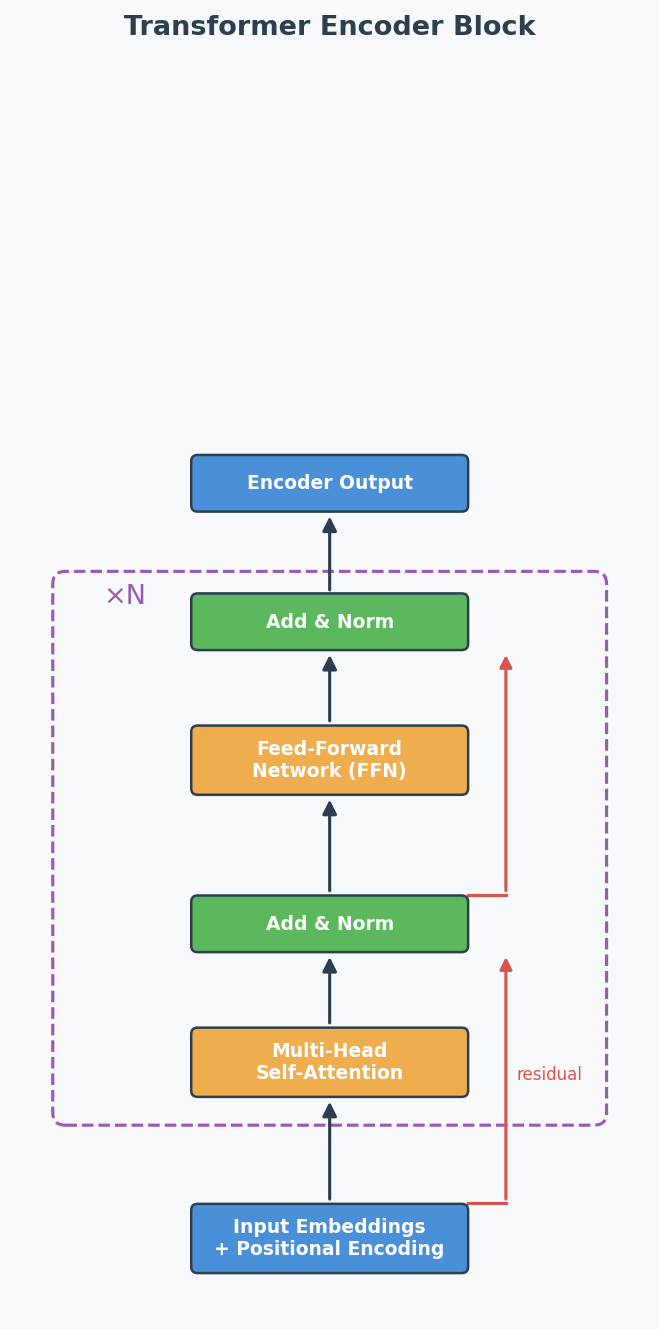

Standard encoder: Multi-Head Self-Attention → Add & Norm → FFN → Add & Norm

Repeated N times (ViT-Base uses N=12)

"Add & Norm" explained:

- ADD = residual/skip connection: output = sublayer(x) + x

Why: gradient flows directly through the '+' → prevents vanishing gradients in deep models

- NORM = Layer Normalisation: normalise across features for each token

Why: keeps activations stable → faster, more stable training

Step 6: Classification

Take ONLY the [CLS] token's output → pass through MLP head → class prediction

Why Patches Instead of Pixels?

Self-attention complexity is O(n²) where n = number of tokens.

| Approach | n (tokens) | Attention operations |

|---|---|---|

| Pixel-level (224×224) | 50,176 | ~2.5 billion — impossible |

| Patch-level (16×16 patches) | 196 | ~38,000 — feasible |

Patches reduce the sequence length by a factor of 256, making attention computationally tractable.

The [CLS] Token — What and Why

What: A special learnable embedding prepended to the patch sequence. It has no image content initially — it starts as random values and is learned during training.

How it works: Through self-attention across all encoder layers, the [CLS] token gradually aggregates information from ALL patches into a single global representation — like a "summary" token.

Why not just use all patch outputs? You could (some variants use Global Average Pooling over all patch embeddings instead). But [CLS] is more efficient: the MLP classification head only needs to read one vector instead of processing 196 vectors.

ViT vs CNN — Key Differences (Likely Exam Comparison)

| Aspect | CNN | ViT |

|---|---|---|

| Basic operation | Sliding filters (convolution) | Self-attention over patches |

| Inductive bias(归纳偏置) | Strong: locality + translation invariance built in | Weak: no assumptions about spatial structure |

| Small datasets | Better — inductive bias compensates for limited data | Worse — needs pre-training on large data |

| Large datasets | Good | Better — fewer assumptions → more flexible |

| Computation pattern | Local (each filter sees a small region) | Global (each patch attends to ALL other patches) |

| Long-range dependencies | Only in deep layers (receptive field grows with depth) | From layer 1 (full attention is global) |

Common Misconception: "ViT is always better than CNN." Wrong — ViT only beats CNN when trained on large datasets (e.g., ImageNet-21k, JFT-300M). On small datasets, CNN's inductive bias gives it a significant advantage. This is why ViT models are typically pre-trained on large data then fine-tuned on smaller target datasets.

Core Intuition: ViT trades CNN's built-in assumptions (locality, translation invariance) for the Transformer's flexibility — this pays off only when you have enough data to learn those patterns from scratch.

RNN vs Transformer (2024 Q5)

| Aspect | RNN | Transformer |

|---|---|---|

| Processing | Sequential (one token at a time) | Parallel (all tokens at once) |

| Order info | Implicit (from sequential processing) | Explicit (positional encoding needed) |

| Speed | Slow for long sequences | Fast (parallelisable) |

| Long-range deps | Struggles (vanishing gradients) | Good (direct attention connections) |

| Advantage | Natural order capture | Parallelisation + long-range attention |

| Drawback | Can't parallelise | Needs positional encoding, O(n²) attention |

Exam answer structure for 2024 Q5:

- Advantage of sequential: RNNs naturally capture sequence order through their step-by-step processing — no extra mechanism needed.

- Drawback of sequential: Can't parallelise → slow for long sequences. Each step must wait for the previous one.

- How Transformer fixes it: Uses embeddings to represent all positions at once (parallel), then adds positional encoding to restore order information that would otherwise be lost.

Past Exam Questions Summary

| Exam | Question | What They Asked |

|---|---|---|

| 2025 Q5a | Masked attention in decoder | Why mask? (autoregressive property) |

| 2025 Q5b | ViT [CLS] token | What is it? Why useful? (aggregation + efficiency) |

| 2024 Q5 | RNN advantage/drawback + how Transformer fixes | Sequential processing trade-off |

| Practice Q6a | What is multi-head attention? | Multiple attention heads with separate Q/K/V |

| Practice Q6b | Why is multi-head attention useful? | Different aspects, avoids averaging |

English Expression Templates

Explaining attention:

- "The attention mechanism allows the model to focus on the most relevant parts of the input sequence when making predictions."

- "Attention computes a weighted sum of values, where weights reflect the relevance of each input position."

Explaining masked attention:

- "Masking prevents each position from attending to future tokens, ensuring predictions depend only on known outputs."

- "This preserves the autoregressive property during training."

Explaining multi-head:

- "Multi-head attention runs several attention functions in parallel, each focusing on different aspects of the input."

- "This is beneficial because a single head would have an averaging effect over all types of relationships."

Architecture Diagrams

Transformer Encoder Block:

Scaled Dot-Product Attention:

中文思维 → 英文输出

| 中文思路 | 考试英文表达 |

|---|---|

| 注意力就是给每个位置加权 | "The attention mechanism computes a weighted sum of values, where the weights reflect the relevance of each input position to the current query." |

| 多头是为了关注不同方面 | "Multi-head attention runs several attention functions in parallel, each with its own learned projections, allowing the model to focus on different aspects simultaneously." |

| 遮蔽是为了不看未来的token | "Masking prevents each position from attending to future tokens, preserving the autoregressive property during training." |

| CLS token聚合所有patch的信息 | "The [CLS] token aggregates information from all image patches through self-attention, providing a global representation for classification." |

| ViT需要大数据才比CNN好 | "ViT outperforms CNN only when trained on large-scale datasets; on small datasets, CNN's stronger inductive bias is advantageous." |

本章 Chinglish 纠正

| Chinglish (避免) | 正确表达 |

|---|---|

| "Attention can let model focus on important part" | "The attention mechanism enables the model to dynamically focus on the most relevant parts of the input" |

| "Mask is for preventing cheat" | "Masking prevents information leakage from future tokens during training" |

| "ViT is cut picture to small pieces" | "ViT splits the image into non-overlapping patches and processes them as a sequence of tokens" |

Whiteboard Self-Test

- Can you draw the Transformer encoder block (self-attention → add&norm → FFN → add&norm)?

- Can you explain Q, K, V in one sentence each?

- Can you explain masked attention and WHY it's needed?