COMPSCI 713 — AI Fundamentals: Exam Preparation

University of Auckland | Semester 1, 2026 | Instructor: Xinyu Zhang

About This Book

This knowledge base is built to help you learn and prepare for the COMPSCI 713 in-course test (Week 7, 60 minutes, 20 marks).

Every concept is explained using the Feynman method: first in plain language with analogies, then formally with math, then applied to real exam questions. The goal is not just memorisation — it’s understanding.

How to Use This Book

- Start with Part 0 — read the exam analysis to understand what’s tested and with what weight

- Work through modules in priority order — 🔴 modules first (A, B, G, F), then 🟠 (D, H), then 🟡 (C, E)

- For each chapter: read the Feynman Draft first to build intuition, then study the formal definitions, then try the practice problems

- Use the English Expression Guide before the test — practise the sentence templates

- Attempt all 3 mock exams under timed conditions (55 min answering)

- Check your cheat sheet — the frequency map chapter has recommendations for what to write on your handwritten A4 page

Exam Format (Sample Test S1 2026)

| Item | Detail |

|---|---|

| Duration | 60 min (5 min reading + 55 min answering) |

| Total marks | 20 |

| Questions | 6 short-answer questions |

| Notes allowed | Double-sided handwritten A4 page |

| Calculators | Not permitted |

| Style | Quality over quantity — concise, clear answers |

Coverage Map (Weeks 2-5)

| Week | Lecture | Topic | Module |

|---|---|---|---|

| W2 | L1 | Symbolic Logic (Propositional + FOL) | A 🔴 |

| W2 | L2 | Logic Neural Networks (LNN) | B 🔴 |

| W3 | L1 | Knowledge Representation (Expert Systems, Ontologies, KG) | C 🟡 |

| W3 | L2 | Knowledge Graphs for AI (TransE, Embeddings, RAG) | D 🟠 |

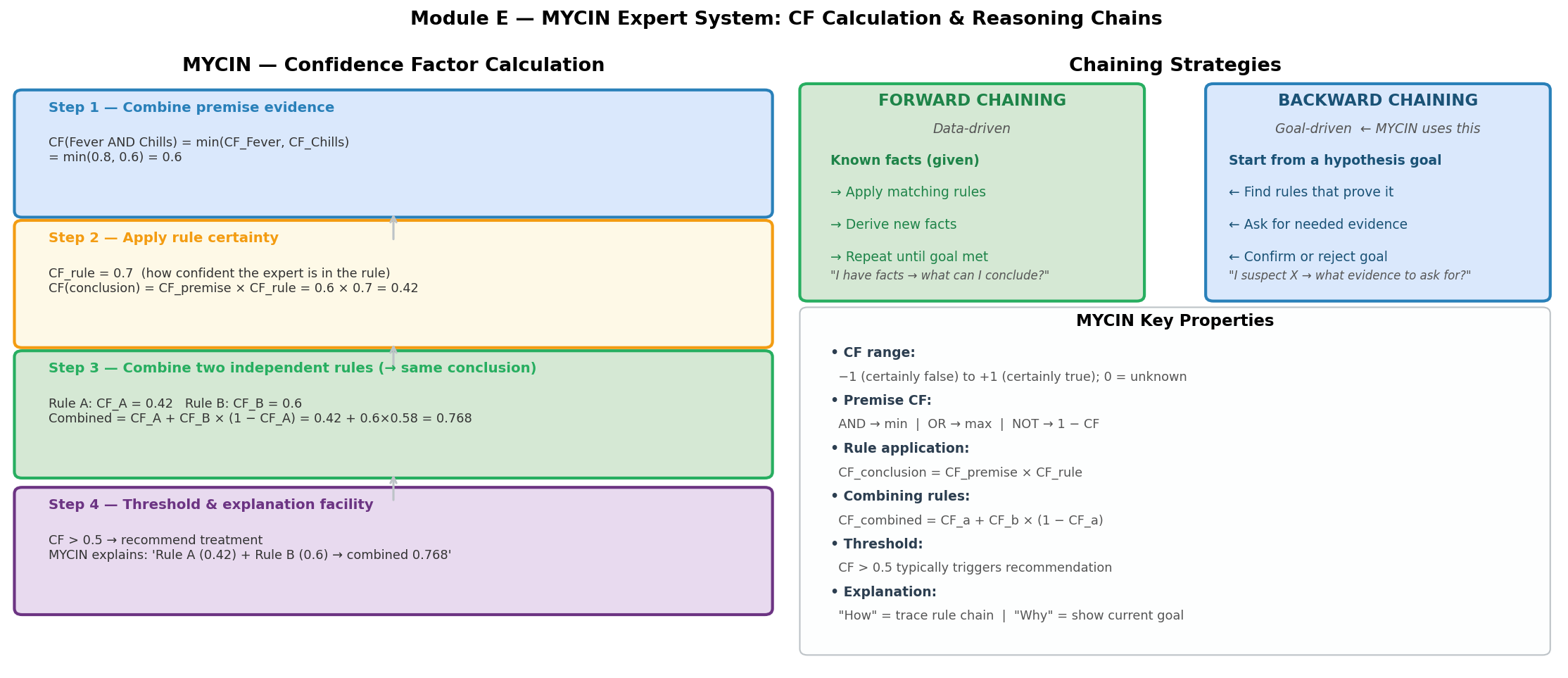

| W4 | L1 | MYCIN Expert System (Confidence Factors) | E 🟡 |

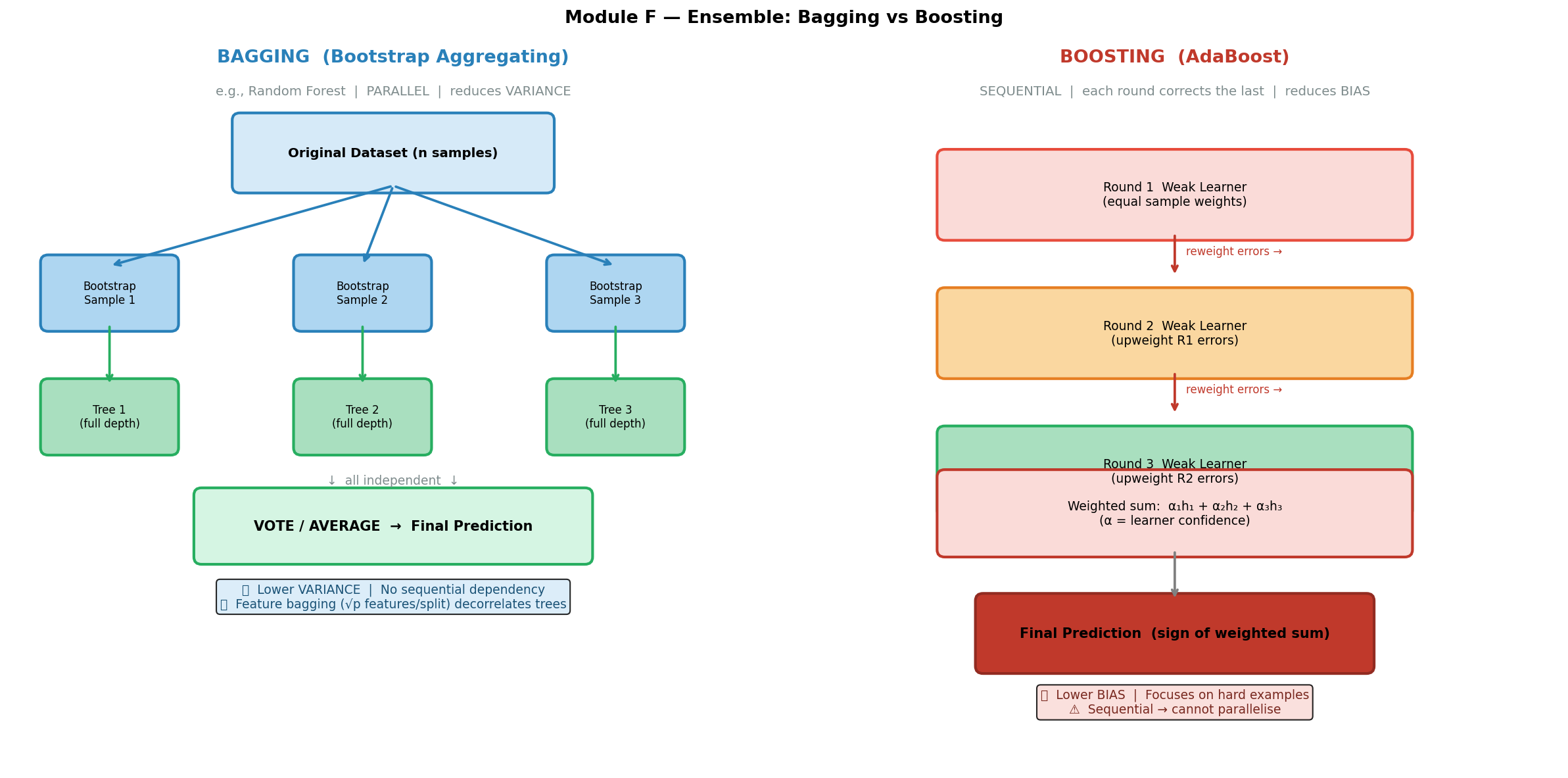

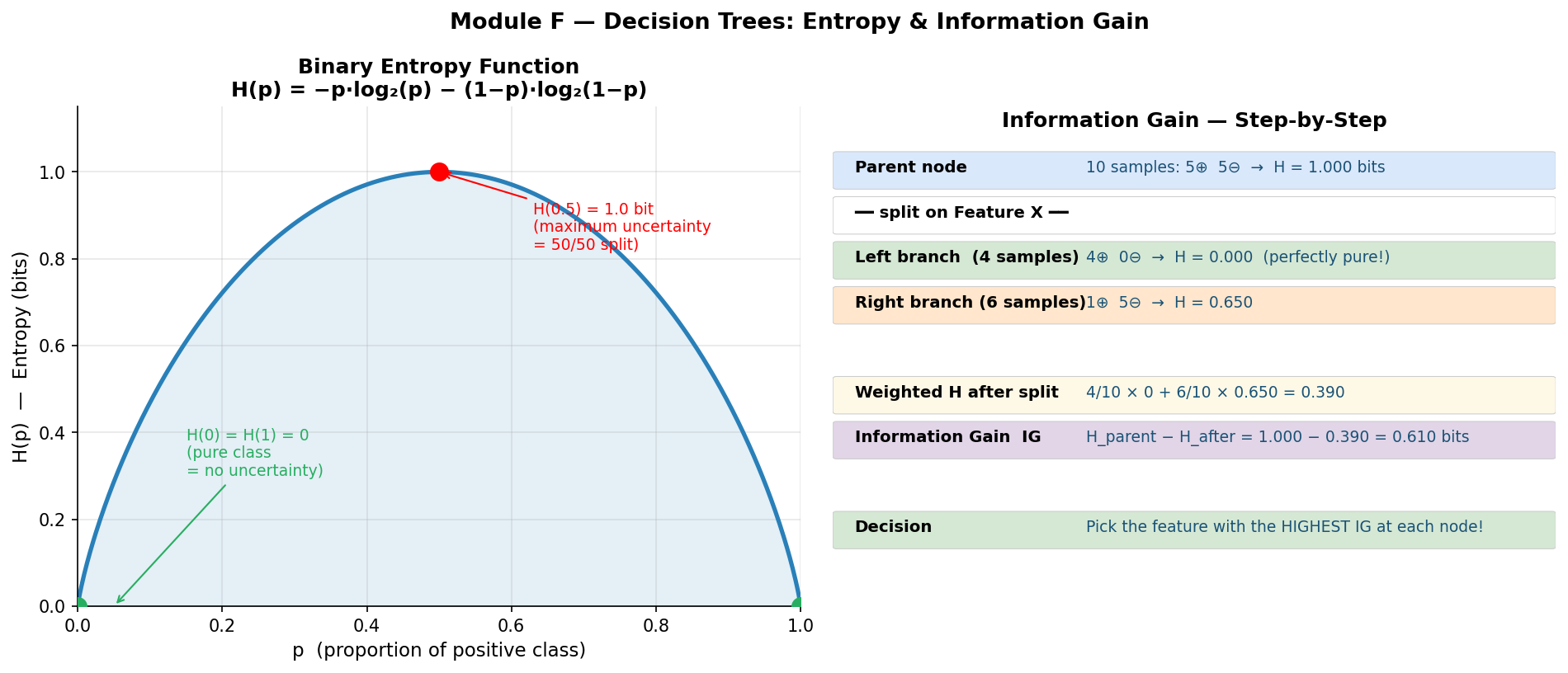

| W4 | L2 | Decision Trees & Ensembles (Bagging, Boosting) | F 🔴 |

| W5 | L1 | Soft Computing (Fuzzy Logic, Bayesian, Vagueness vs Uncertainty) | G 🔴 |

| — | — | Multi-Agent Systems (Robot Soccer) | H 🟠 |

Priority Legend

- 🔴 必考 (Must-Know): Appeared in sample test with high mark weight

- 🟠 高频 (High Frequency): Appeared in sample test with moderate weight

- 🟡 中频 (Medium): Full lecture topic, not in sample but likely in actual test

真题逐题分析 — Complete Exam Analysis

Course: COMPSCI 713: AI Fundamentals, University of Auckland Instructor: Xinyu Zhang (mid-semester) / Thomas (final exam, partial) Scope: ALL available exam papers — S1 2025 Sample, S1 2025 Actual, S1 2026 Sample, S1 2024 Final Purpose: Question-by-question breakdown for exam preparation

How to Use This Document(使用指南)

- First pass: Skim the tables at the end of each exam section to see topic/mark distribution

- Second pass: Read the Learning Points for your weakest topics

- Third pass: Use the Common Mistakes as a self-check before the exam

- Final review: Jump to Cross-Exam Patterns at the bottom

💡 核心发现: 每一份试卷都考了 Symbolic Logic, LNN, Knowledge Graphs, 和 Decision Trees/Ensembles。这四个是绝对必考项。

Exam Paper 1: S1 2025 Sample Test

Format: 15 marks, 6 questions, 60 minutes (5 reading + 55 answering) Allowed aids: One double-sided handwritten A4 page

Q1 — Symbolic Logic [3 marks]

Question Summary

(a) Propositional Logic — Modus Tollens [~1.5m]

Scenario: A secure facility grants entry only if a person has a valid ID ($I$) AND fingerprint matches ($F$). Rule: $(I \wedge F) \rightarrow E$. Observed: person was NOT granted entry ($\neg E$).

Task: Deduce what must be true about $I$ and $F$.

(b) First-Order Logic [~1.5m]

Task: Write “Not all birds can fly” in FOL using $\text{Fly}(x)$. Give a realistic example.

Expected Answer

(a):

- By Modus Tollens: $(I \wedge F) \rightarrow E$ and $\neg E$ implies $\neg(I \wedge F)$

- By De Morgan: $\neg I \vee \neg F$

- Conclusion: Either the person lacked a valid ID, or the fingerprint didn’t match (or both)

(b):

- FOL: $\neg \forall x , \text{Fly}(x)$, equivalently $\exists x , \neg \text{Fly}(x)$

- Example: “Penguins are birds but cannot fly”

Analysis

| Item | Detail |

|---|---|

| Topic | Symbolic Logic(符号逻辑) |

| Lecture | W2L1 |

| Type | 推理 + 形式化 (Deduction + Formalisation) |

| Difficulty | ★★☆ |

| Keywords | propositional logic, modus tollens, FOL, universal quantifier, negation |

| Exam intent | Can student apply basic inference rules AND translate English → FOL? |

Learning Points(学习要点)

- Modus Tollens 是本课程考试的第一推理模式: $(P \rightarrow Q), \neg Q \vdash \neg P$。务必熟练到条件反射

- “Not all” = $\neg \forall x$: 注意不是 $\forall x , \neg$(后者意为“所有都不“,语义完全不同)

- De Morgan 定律: $\neg(A \wedge B) = \neg A \vee \neg B$,推导结论时经常用到

⚠️ Common Mistake: Writing $\forall x , \neg \text{Fly}(x)$ which means “NO bird can fly” — much stronger than “not all birds can fly.”

Q2 — Logic Neural Networks (LNN) [2 marks]

Question Summary

Scenario: Smart home LNN rule: HeatingOn $\leftarrow$ Cold $\otimes$ AtHome (differentiable AND).

(a) Interpret in natural language. How does it differ from Boolean? [1m]

(b) Compute with Cold = 0.9, AtHome = 0.4. Discuss whether heating activates. [1m]

Expected Answer

(a):

- Natural language: “If it is cold AND someone is at home, turn on the heating.”

- Difference: Boolean AND requires both inputs strictly TRUE (1). LNN’s $\otimes$ works with continuous truth values in $[0, 1]$, producing intermediate results that capture partial truth and enable gradient-based learning.

(b):

- Product t-norm: $0.9 \times 0.4 = 0.36$

- Lukasiewicz: $\max(0, 0.9 + 0.4 - 1) = 0.3$

- Whether heating activates depends on threshold: if $\alpha = 0.3$, yes; if $\alpha = 0.7$, no.

Analysis

| Item | Detail |

|---|---|

| Topic | Logic Neural Networks(逻辑神经网络) |

| Lecture | W2L2 |

| Type | 概念解释 + 计算 (Explain + Compute) |

| Difficulty | ★★☆ |

| Keywords | LNN, soft conjunction, t-norm, product, Lukasiewicz, threshold |

| Exam intent | Why do we need differentiable logic? Can student compute with t-norms? |

Learning Points

- 必背三个 t-norm:

- Product: $a \times b$

- Lukasiewicz: $\max(0, a + b - 1)$

- Godel/min: $\min(a, b)$

- Boolean vs LNN 的关键差异: Boolean 是离散的 {0,1};LNN 是连续的 [0,1],支持梯度下降

⚠️ Common Mistake: Forgetting to discuss the threshold. Computing 0.36 is not enough — you must state what activation decision follows.

Q3 — Knowledge Graph Embeddings [2 marks]

Question Summary

Explain the role of entity/relation embeddings in KG completion. Introduce a common KG inference task with an example.

Expected Answer

- Embeddings: Map entities and relations to dense vectors in continuous space, enabling mathematical operations for reasoning

- Inference task: Link prediction — given $(h, r, ?)$, predict tail entity

- Example: $(Einstein, bornIn, ?) \rightarrow Germany$

Analysis

| Item | Detail |

|---|---|

| Topic | Knowledge Graphs(知识图谱) |

| Lecture | W3L2 |

| Type | 概念解释 + 举例 (Explain + Example) |

| Difficulty | ★☆☆ |

| Keywords | KG embedding, TransE, link prediction, knowledge completion |

Learning Points

- TransE 核心公式: $h + r \approx t$(头实体向量 + 关系向量 ≈ 尾实体向量)

- 三种推理任务: tail prediction $(h, r, ?)$, head prediction $(?, r, t)$, relation prediction $(h, ?, t)$

⚠️ Common Mistake: Confusing “embedding” with “one-hot encoding.” Embeddings are dense, low-dimensional, learned vectors — not sparse indicator vectors.

Exam tip(答题技巧): 永远给具体例子。“(Einstein, bornIn, ?) → Germany” 远比 “it predicts missing links” 好。

Q4 — Embodied AI / Robot Soccer [2 marks]

Question Summary

Robot soccer league: overhead camera, 225 features per frame, team of 5 robots, no inter-robot communication. Describe strategies/collective behaviours.

Expected Answer

Any of (1 mark each, max 2):

- Collective behaviours: passing strategy, interception prediction, passing point value assessment

- Positioning strategies: formations for attack/defense

- Role-based strategies: dynamic role assignment based on game situation

- Centralized control: overhead camera acts as single controller for all 5 robots

Analysis

| Item | Detail |

|---|---|

| Topic | Embodied AI / Multi-Agent Systems(具身AI/多智能体) |

| Lecture | Multi-Agent lecture |

| Type | 知识回忆 (Recall) |

| Difficulty | ★☆☆ |

| Exam intent | Tests lecture attendance — can you name concrete strategies? |

Learning Points

- 三大策略类别: (1) 集体行为 — passing, (2) 位置策略 — formation, (3) 角色分配 — dynamic assignment

- 关键细节: No communication → centralized control via overhead camera → single decision-maker

⚠️ Common Mistake: Being too vague. “They work together” = 0 marks. Name specific strategies.

Q5 — Random Forest / Bagging [3 marks]

Question Summary

Dataset with 225 features.

(a) How are features selected per tree? How many? [2m]

(b) Why is feature bagging a good idea? [1m]

Expected Answer

(a):

- Random subset of features sampled per tree (not all 225)

- Typical: $\sqrt{p} = \sqrt{225} = 15$ features per tree

- Different trees see different feature subsets

(b):

- Prevents trees from being highly correlated (e.g., same dominant feature always at root)

- Decorrelated trees → ensemble averaging reduces variance more effectively

Analysis

| Item | Detail |

|---|---|

| Topic | Decision Trees & Ensembles(决策树与集成方法) |

| Lecture | W4L2 |

| Type | 解释 + 计算 (Explain + Calculate) |

| Difficulty | ★★☆ |

| Exam intent | Tests “why” not just “what” — understanding the purpose of feature bagging |

Learning Points

- $\sqrt{p}$ rule: For $p$ features, sample $\sqrt{p}$ per tree. For 225 features → 15.

- Bagging vs Boosting:

- Bagging → parallel trees → reduces variance

- Boosting → sequential trees → reduces bias

- 两层随机化: (1) Bootstrap sampling of data rows, (2) Random sampling of features. Both reduce correlation.

⚠️ Common Mistake: Confusing bootstrap sampling (random data points) with feature bagging (random features). Both happen in Random Forest; they serve different purposes.

Q6 — MYCIN / Expert Systems [3 marks]

Question Summary

Medical diagnosis scenario using backward chaining. Patient has a runny nose. Possible diagnoses: common cold, allergies, measles. Demonstrate backward chaining reasoning.

Expected Answer

- Backward chaining: Start from hypothesis, work backward to check conditions

- Hypothesis: Common Cold → needs runny nose ✓, fever ?, cough ?

- Hypothesis: Allergies → needs runny nose ✓, sneezing ?, itchy eyes ?

- Hypothesis: Measles → needs runny nose ✓, rash ?, high fever ?

- Ask additional questions to discriminate between hypotheses

- Contrast with forward chaining: start from facts, derive conclusions

Analysis

| Item | Detail |

|---|---|

| Topic | Expert Systems / MYCIN(专家系统) |

| Lecture | W3L1 (Knowledge Representation) |

| Type | 推理过程演示 (Demonstrate reasoning process) |

| Difficulty | ★★☆ |

| Keywords | backward chaining, hypothesis, rule-based reasoning, MYCIN |

| Exam intent | Can student trace backward chaining step by step? |

Learning Points

- Backward chaining 三步法: (1) Start with hypothesis, (2) Check conditions, (3) Ask for missing info

- Forward vs Backward: Forward = data-driven (fact → conclusion); Backward = goal-driven (hypothesis → verify)

- MYCIN 特色: Uses certainty factors (CF) instead of probabilities; backward chaining for diagnosis

⚠️ Common Mistake: Describing forward chaining when asked for backward chaining. Direction matters!

S1 2025 Sample Test — Summary Table

| Q | Topic | Marks | % | Cognitive Level |

|---|---|---|---|---|

| Q1 | Symbolic Logic | 3 | 20% | Apply + Formalise |

| Q2 | LNN | 2 | 13% | Explain + Compute |

| Q3 | KG Embeddings | 2 | 13% | Explain + Exemplify |

| Q4 | Robot Soccer | 2 | 13% | Recall |

| Q5 | Random Forest | 3 | 20% | Explain + Calculate |

| Q6 | MYCIN / Expert Systems | 3 | 20% | Demonstrate reasoning |

| Total | 15 | 100% |

Exam Paper 2: S1 2025 Actual Test

Format: 15 marks, 6 questions, 60 minutes This is the REAL exam that was sat

Q1 — Symbolic Logic [2 marks]

Question Summary

(a) Given $(P \vee Q) \rightarrow R$ and $\neg R$. Apply Modus Tollens. [1m]

(b) Given $\forall x(\text{Cheat}(x) \rightarrow \text{Disqualified}(x))$ and Alice is not disqualified. Conclude about Alice. [1m]

Expected Answer

(a):

- Modus Tollens: $(P \vee Q) \rightarrow R$ and $\neg R$ implies $\neg(P \vee Q)$

- By De Morgan: $\neg P \wedge \neg Q$

- Both P and Q must be false

(b):

- Universal instantiation: $\text{Cheat}(\text{Alice}) \rightarrow \text{Disqualified}(\text{Alice})$

- Given $\neg \text{Disqualified}(\text{Alice})$, by Modus Tollens: $\neg \text{Cheat}(\text{Alice})$

- Conclusion: Alice did not cheat

Analysis

| Item | Detail |

|---|---|

| Topic | Symbolic Logic(符号逻辑) |

| Lecture | W2L1 |

| Type | 推理 (Pure deduction) |

| Difficulty | ★★☆ |

| Keywords | modus tollens, disjunction, De Morgan, universal instantiation, FOL |

| Exam intent | Modus Tollens again! Plus combining FOL with propositional reasoning |

Learning Points

- 这道题和 Sample 的区别: Sample 用 $(I \wedge F) \rightarrow E$,Actual 用 $(P \vee Q) \rightarrow R$。结论不同!

- $\neg(A \wedge B) = \neg A \vee \neg B$(至少一个为假)

- $\neg(A \vee B) = \neg A \wedge \neg B$(两个都假)

- FOL + Modus Tollens 组合拳: Universal instantiation 先把 $\forall x$ 具体化为 Alice,再用 Modus Tollens

⚠️ Common Mistake: For $\neg(P \vee Q)$, some students write “$P$ or $Q$ is false” — this is WRONG. BOTH must be false. De Morgan on disjunction gives conjunction of negations.

🔑 关键对比: AND 的否定 → 至少一个假 (disjunction); OR 的否定 → 全部假 (conjunction). 这是必须刻在脑子里的。

Q2 — LNN with Truth Bounds [3 marks]

Question Summary

Scenario: Autonomous vehicle collision alert system. Two sensors:

- Pedestrian detector: $P$ with bounds $[L_P, U_P] = [0.8, 0.9]$

- Obstacle detector: $Q$ with bounds $[L_Q, U_Q] = [0.3, 0.6]$

Rule: Alert $\leftarrow P \vee Q$ (disjunction, not conjunction!)

Alert threshold: $\alpha = 0.7$

(a) Determine alert status [2m]

(b) Why are bounds (instead of point estimates) useful in safety-critical applications? [1m]

Expected Answer

(a):

-

Co-norm for OR (using Lukasiewicz):

- Lower bound: $\min(1, L_P + L_Q) = \min(1, 0.8 + 0.3) = 1.0$

- Upper bound: $\min(1, U_P + U_Q) = \min(1, 0.9 + 0.6) = 1.0$

-

OR result bounds: $[1.0, 1.0]$

-

Since lower bound $1.0 \geq \alpha = 0.7$: Alert ACTIVATES

Alternative (product-based co-norm):

- $P \vee Q = P + Q - P \cdot Q$

- Lower: $0.8 + 0.3 - 0.8 \times 0.3 = 0.86$

- Upper: $0.9 + 0.6 - 0.9 \times 0.6 = 0.96$

- Bounds: $[0.86, 0.96]$, both $\geq 0.7$: Alert ACTIVATES

(b):

- Bounds capture epistemic uncertainty — we know the truth value lies somewhere in the interval

- In safety-critical systems, we can make conservative decisions: if even the lower bound exceeds threshold, we act

- Point estimates hide uncertainty; bounds let us reason about worst-case scenarios

Analysis

| Item | Detail |

|---|---|

| Topic | LNN with Truth Bounds(带真值边界的 LNN) |

| Lecture | W2L2 |

| Type | 计算 + 论述 (Compute + Argue) |

| Difficulty | ★★★ |

| Keywords | LNN, truth bounds, co-norm, disjunction, safety-critical, epistemic uncertainty |

| Exam intent | Can student compute with bounds (not just point values)? Understands safety implications? |

Learning Points

- 这是 LNN 的升级版考法: Sample 考 AND 的点值计算,Actual 考 OR 的区间计算

- AND vs OR t-norm/co-norm:

- AND (t-norm): Product → $a \times b$; Lukasiewicz → $\max(0, a + b - 1)$

- OR (co-norm): Product → $a + b - a \times b$; Lukasiewicz → $\min(1, a + b)$

- Safety-critical reasoning: 用 lower bound 做决策 = 最保守策略

⚠️ Common Mistake: Using AND formula when the question says OR! Read the operator carefully: $\otimes$ = AND, $\oplus$ = OR, $\vee$ = OR.

⚠️ 另一个常见错误: 忘记 bounds 是区间运算。不能只算一个值,要算 [lower, upper]。

Q3 — Knowledge Graphs / TransE [2 marks]

Question Summary

(a) Explain the TransE embedding model [1m]

(b) Write the TransE scoring function [1m]

Expected Answer

(a):

- TransE represents entities and relations as vectors in the same space

- Core idea: for a true triple $(h, r, t)$, the head plus relation should approximate the tail: $h + r \approx t$

(b):

- Scoring function: $f(h, r, t) = |h + r - t|$ (L1 or L2 norm)

- Lower score = more likely to be true

- Training: minimize score for true triples, maximize for false (negative sampling)

Analysis

| Item | Detail |

|---|---|

| Topic | Knowledge Graphs / TransE |

| Lecture | W3L2 |

| Type | 概念 + 公式 (Concept + Formula) |

| Difficulty | ★☆☆ |

| Exam intent | TransE is the simplest and most testable KG model — can you state the formula? |

Learning Points

- TransE 必背: $f(h,r,t) = |h + r - t|$,越小越可能是真三元组

- 与 Sample 的区别: Sample 考概念层面(什么是 embedding),Actual 考公式层面(TransE 具体怎么算)

- 局限性: TransE 无法建模 1-to-N 关系(如一个国家有多个城市)

⚠️ Common Mistake: Writing $h + r = t$ (equality) instead of $h + r \approx t$ (approximation). The model learns to minimize the distance, not enforce exact equality.

Q4 — Decision Trees / CART [2 marks]

Question Summary

What does “greedy” mean in the context of CART (Classification and Regression Trees)?

Expected Answer

- Greedy = at each node, CART picks the locally optimal split (maximum information gain or minimum Gini impurity) without considering future splits

- It does not evaluate all possible tree structures to find the global optimum

- This makes it computationally efficient but potentially suboptimal

- Why greedy? Finding the optimal tree is NP-hard

Analysis

| Item | Detail |

|---|---|

| Topic | Decision Trees(决策树) |

| Lecture | W4L1-L2 |

| Type | 概念解释 (Concept explanation) |

| Difficulty | ★☆☆ |

| Keywords | CART, greedy algorithm, local optimum, information gain, Gini impurity |

| Exam intent | Tests understanding of algorithm design philosophy, not just mechanics |

Learning Points

- “Greedy“三要素: (1) 每步选当前最优 (2) 不回溯 (3) 不保证全局最优

- 为什么接受 greedy?: 找最优树是 NP-hard;greedy 在实践中效果够好

- Ensemble 弥补 greedy: Random Forest 通过多棵 greedy tree 的聚合来逼近更好的解

⚠️ Common Mistake: Saying greedy means “fast.” Greedy is about the optimization strategy (local vs global), not speed.

Q5 — Fuzzy Logic [3 marks]

Question Summary

Contrast traditional (Boolean) logic vs fuzzy logic for the rule: IF athlete is STRONG AND athlete is HEAVY THEN athlete is HAMMER_THROWER

Expected Answer

Traditional Logic:

- STRONG = {yes, no}, HEAVY = {yes, no} → HAMMER_THROWER = {yes, no}

- Sharp boundaries: an athlete is either strong or not

- AND = Boolean AND: both must be true for conclusion to hold

Fuzzy Logic:

- STRONG(x) ∈ [0, 1], HEAVY(x) ∈ [0, 1] → HAMMER_THROWER(x) ∈ [0, 1]

- Gradual membership: “somewhat strong” = 0.6, “very heavy” = 0.9

- AND = t-norm (e.g., min): HAMMER_THROWER ≥ min(0.6, 0.9) = 0.6

- Captures vagueness — no sharp cutoff between “strong” and “not strong”

Analysis

| Item | Detail |

|---|---|

| Topic | Fuzzy Logic / Soft Computing |

| Lecture | W5L1 |

| Type | 对比分析 (Compare & Contrast) |

| Difficulty | ★★☆ |

| Exam intent | Core theme: why do we need fuzzy logic? What problem does it solve? |

Learning Points

- 对比答题模板: 分三行写 — (1) Traditional: binary, (2) Fuzzy: continuous, (3) WHY fuzzy is better for this case

- Fuzzy logic 解决 vagueness: “Strong” 没有明确边界 → 需要 membership function

- 给具体数字: 说 “STRONG(athlete) = 0.6” 比抽象描述好得多

⚠️ Common Mistake: Confusing fuzzy logic with probability. Fuzzy = degree of membership (to what extent is this athlete “strong”?). Probability = likelihood of an event (what’s the chance this athlete wins?).

Q6 — GA / Embodied AI [3 marks]

Question Summary

Design a fitness function for a BigDog walking robot using Genetic Algorithm optimization.

Expected Answer

Fitness function components:

- Distance traveled (primary): $f_1 = d / d_{max}$ — further is better

- Stability (constraint): $f_2 = 1 - \text{angular_deviation} / \text{max_deviation}$ — less wobble is better

- Energy efficiency (secondary): $f_3 = 1 - E_{used} / E_{max}$ — less energy is better

- Penalty: $f_{penalty} = -C$ if robot falls

Combined: $F = w_1 f_1 + w_2 f_2 + w_3 f_3 + f_{penalty}$

Key design considerations:

- Must balance multiple objectives

- Weights reflect priority (distance > stability > efficiency typically)

- Penalties for catastrophic failure (falling) should be large

Analysis

| Item | Detail |

|---|---|

| Topic | Genetic Algorithms / Fitness Function Design(遗传算法/适应度函数设计) |

| Lecture | GA/NEAT lectures |

| Type | 设计题 (Design) |

| Difficulty | ★★★ |

| Keywords | fitness function, multi-objective, GA, embodied AI, BigDog |

| Exam intent | Can student translate a real-world goal into a mathematical optimization objective? |

Learning Points

- Fitness function 设计万能框架: (1) 定义主目标, (2) 加约束, (3) 加惩罚项, (4) 用加权求和合并

- 开放题没有唯一答案: 关键是逻辑自洽 + 覆盖关键方面

- 必须提到权衡: 速度 vs 稳定性 vs 能耗

⚠️ Common Mistake: Only considering one objective (e.g., just distance). Real fitness functions must balance multiple competing goals.

S1 2025 Actual Test — Summary Table

| Q | Topic | Marks | % | Cognitive Level |

|---|---|---|---|---|

| Q1 | Symbolic Logic (Modus Tollens + FOL) | 2 | 13% | Apply + Deduce |

| Q2 | LNN (Truth Bounds + OR) | 3 | 20% | Compute + Argue |

| Q3 | KG / TransE | 2 | 13% | Explain + Formula |

| Q4 | Decision Trees (CART greedy) | 2 | 13% | Explain concept |

| Q5 | Fuzzy Logic | 3 | 20% | Compare & Contrast |

| Q6 | GA / Fitness Function Design | 3 | 20% | Design |

| Total | 15 | 100% |

Exam Paper 3: S1 2026 Sample Test

Format: 20 marks, 6 questions, 60 minutes (5 reading + 55 answering) Note: Marks increased from 15 → 20. Same topics, more depth required.

Q1 — Symbolic Logic [5 marks]

Question Summary

(a) Propositional Logic — with Truth Table [3m]

Same scenario as S1 2025 Sample: $(I \wedge F) \rightarrow E$, given $\neg E$. But now explicitly requires a truth table for full marks.

(b) FOL — Birds [2m]

Same “not all birds can fly” question.

Expected Answer

(a):

Step 1: Truth table for $X \rightarrow E$ where $X = I \wedge F$:

| $X$ | $E$ | $X \rightarrow E$ |

|---|---|---|

| 0 | 0 | 1 |

| 0 | 1 | 1 |

| 1 | 0 | 0 |

| 1 | 1 | 1 |

When $E = 0$ and implication is TRUE: $X$ must be 0. [1 mark]

Step 2: Truth table for $I \wedge F$:

| $I$ | $F$ | $I \wedge F$ |

|---|---|---|

| 0 | 0 | 0 |

| 0 | 1 | 0 |

| 1 | 0 | 0 |

| 1 | 1 | 1 |

$I \wedge F = 0$ when at least one is 0. [1 mark]

Step 3: Conclusion — person either lacked valid ID, or fingerprint didn’t match, or both. [1 mark]

(b):

- $\neg \forall x , \text{Fly}(x)$ [1 mark]

- Example: penguins, ostriches, kiwi (kiwi 特别适合 UoA 的语境!) [1 mark]

Analysis

| Item | Detail |

|---|---|

| Topic | Symbolic Logic |

| Lecture | W2L1 |

| Difficulty | ★★☆ |

| Compared to 2025 | Same scenario, more marks → must show truth table explicitly |

Learning Points

- 2026 版本更重视过程: 3 marks for truth table vs 2025’s ~1.5 marks. Show ALL steps.

- 真值表是得分保障: 即使你能直接用 Modus Tollens 推出结论,画真值表拿更稳的分

💡 策略提示: 5 marks = 25% of total. Spend proportional time (~14 minutes). Don’t rush the truth table.

Q2 — LNN [4 marks]

Question Summary

Same HeatingOn scenario as S1 2025 Sample, but 4 marks (was 2).

(a) Interpret rule + compare with Boolean [2m]

(b) Compute with Cold = 0.9, AtHome = 0.4 [2m]

Expected Answer

Same as S1 2025 Sample Q2 but more detail expected for the extra marks:

- (a): Need deeper comparison — mention gradient-based learning, continuous optimization, partial truth

- (b): Show at least two t-norms, discuss threshold selection, explain practical implications

Analysis

| Item | Detail |

|---|---|

| Topic | LNN |

| Compared to 2025 | Same question, doubled marks → expects more thorough answer |

| Difficulty | ★★☆ |

Learning Points

- More marks = more depth expected:

- 2m version: basic computation + brief threshold mention

- 4m version: multiple t-norms + threshold discussion + why LNN matters for AI

- 安全策略: 写出所有你知道的 t-norm 计算结果,对比它们

Q3 — Knowledge Graph Embeddings [2 marks]

Question Summary

Same as S1 2025 Sample Q3: explain entity/relation embeddings + KG inference task + example.

Expected Answer

Identical to S1 2025 Sample Q3. (See above.)

Learning Points

- 三年不变: 这道题完全一样。说明 KG embedding 是必考的固定题型。

Q4 — Robot Soccer [2 marks]

Question Summary

Same as S1 2025 Sample Q4: overhead camera, 225 features, describe strategies.

Expected Answer

Identical to S1 2025 Sample Q4. (See above.)

Learning Points

- 同样三年不变: Robot Soccer 策略也是固定考点。

Q5 — Random Forest [3 marks]

Question Summary

Same as S1 2025 Sample Q5: feature selection + why feature bagging.

Expected Answer

Identical to S1 2025 Sample Q5. (See above.)

Q6 — Vagueness vs Uncertainty [4 marks]

Question Summary

New question type (not in S1 2025 Sample):

Classify 4 scenarios:

- Patient described as “high risk” → Vagueness

- Security system estimates burglary → Uncertainty

- Student rated “almost excellent” → Vagueness

- Spam filter classifies email → Uncertainty

Expected Answer

| Scenario | Classification | Reasoning |

|---|---|---|

| “high risk” patient | Vagueness | Blurry boundary — what counts as “high”? Degree, not yes/no |

| Alarm/burglary estimate | Uncertainty | Unknown ground truth — did burglary actually happen? |

| “almost excellent” student | Vagueness | Gradual concept — no sharp boundary between “good” and “excellent” |

| Spam filter | Uncertainty | Probabilistic inference — inferring unknown class from features |

Analysis

| Item | Detail |

|---|---|

| Topic | Soft Computing — Vagueness vs Uncertainty |

| Lecture | W5L1 |

| Difficulty | ★☆☆ (if you know the distinction) |

| Exam intent | THE central philosophical distinction of soft computing |

Learning Points

- 万能判断法则:

- Vagueness → “To what degree?” → Fuzzy Logic (membership functions)

- Uncertainty → “How likely?” → Bayesian Reasoning (probabilities)

- 快速测试: 概念边界模糊 → vagueness; 世界状态未知 → uncertainty

- 语言线索: “high”, “almost”, “kind of” → vagueness; “estimate”, “predict”, “classify” → uncertainty

⚠️ Common Mistake: Thinking fuzzy logic handles uncertainty. NO — fuzzy handles vagueness; Bayes handles uncertainty. This is THE most important distinction in W5.

S1 2026 Sample Test — Summary Table

| Q | Topic | Marks | % | Cognitive Level |

|---|---|---|---|---|

| Q1 | Symbolic Logic (truth table + FOL) | 5 | 25% | Apply + Formalise |

| Q2 | LNN (soft AND computation) | 4 | 20% | Explain + Compute |

| Q3 | KG Embeddings | 2 | 10% | Explain + Exemplify |

| Q4 | Robot Soccer | 2 | 10% | Recall |

| Q5 | Random Forest / Bagging | 3 | 15% | Explain + Calculate |

| Q6 | Vagueness vs Uncertainty | 4 | 20% | Classify scenarios |

| Total | 20 | 100% |

Exam Paper 4: S1 2024 Final Exam (Thomas’s Section)

Note: This is the final exam (not mid-semester), with questions from a different instructor (Thomas). These topics may or may not appear in 2026’s mid-semester, but they are useful for final exam preparation and general knowledge.

Q1 — Continual Learning [4 marks]

Question Summary

Concept drift, replay methods, Gaussian Mixture Models in continual learning.

Expected Answer

- Concept drift: Data distribution changes over time; model must adapt

- Replay methods: Store subset of old data; replay during training on new data to prevent catastrophic forgetting

- GMM: Can be used to model data distributions; detect drift by comparing distributions

- Stability-plasticity tradeoff: Too much stability → can’t learn new; too much plasticity → forgets old

Analysis

| Item | Detail |

|---|---|

| Topic | Continual Learning(持续学习) |

| Difficulty | ★★☆ |

| Priority for 2026 mid-sem | 🟢 LOW — Thomas’s topic, unlikely in mid-semester |

Q2 — BFS vs UCS [3 marks]

Question Summary

Compare Breadth-First Search and Uniform-Cost Search.

Expected Answer

- BFS: Expands shallowest node first; optimal when all edge costs equal; uses FIFO queue

- UCS: Expands lowest-cost node first; optimal for any non-negative costs; uses priority queue

- Key difference: BFS = optimal for unweighted; UCS = optimal for weighted graphs

Analysis

| Item | Detail |

|---|---|

| Topic | Search Algorithms |

| Priority for 2026 mid-sem | 🟢 LOW — not in Xinyu’s question pattern |

Q3 — MCTS / UCB1 [3 marks]

Question Summary

Explain the components of the UCB1 formula used in Monte Carlo Tree Search.

Expected Answer

$$UCB1 = \bar{X}_j + C \sqrt{\frac{\ln N}{n_j}}$$

- $\bar{X}_j$: average reward of node $j$ (exploitation term)

- $N$: total visits to parent

- $n_j$: visits to node $j$

- $C$: exploration constant

- $\sqrt{\ln N / n_j}$: exploration term — favors less-visited nodes

- Balances exploration vs exploitation

Analysis

| Item | Detail |

|---|---|

| Topic | MCTS / UCB1 |

| Priority for 2026 mid-sem | 🟡 MEDIUM — exploration-exploitation could appear in GA context |

Q4 — RL for Pac-Man [1 mark]

Question Summary

Define state, action, policy, reward for RL applied to Pac-Man.

Expected Answer

- State: Current game board configuration (ghost positions, pellet locations, Pac-Man position)

- Action: Move direction (up, down, left, right)

- Policy: Mapping from state to action (which direction to move in each situation)

- Reward: +10 eating pellet, +200 eating ghost, -500 dying, -1 per time step

Analysis

| Item | Detail |

|---|---|

| Topic | Reinforcement Learning(强化学习) |

| Priority for 2026 mid-sem | 🟢 LOW |

Q5 — GNN [2 marks]

Question Summary

Explain permutation invariance and permutation equivariance in Graph Neural Networks.

Expected Answer

- Permutation invariance: Output doesn’t change when node ordering changes (graph-level prediction)

- Permutation equivariance: Output permutes consistently with input permutation (node-level embeddings)

Analysis

| Item | Detail |

|---|---|

| Topic | Graph Neural Networks |

| Priority for 2026 mid-sem | 🟢 LOW — not in Xinyu’s observed pattern |

Q6 — Self-Supervised Learning [2 marks]

Question Summary

Distinguish pretext tasks from downstream tasks in self-supervised learning.

Expected Answer

- Pretext task: Artificial task designed to learn representations without labels (e.g., predict rotation, fill masked words)

- Downstream task: Actual target task the representations are used for (e.g., classification, NER)

- Relationship: Pretext → learn general features; fine-tune on downstream task with few labels

Analysis

| Item | Detail |

|---|---|

| Topic | Self-Supervised Learning |

| Priority for 2026 mid-sem | 🟢 LOW |

Additional Topics from S1 2024 Final (Answer Key)

The following topics appeared in the S1 2024 final exam answer key:

| Topic | Content | Priority for Mid-Sem |

|---|---|---|

| DQN | Online vs target network, bootstrapping | 🟢 LOW |

| Self-Attention | Q/K/V vectors, advantage over traditional attention | 🟡 MEDIUM |

| LLM System Design | Technical route for LLM-based system | 🟡 MEDIUM |

| Decision Tree vs Forest | Interpretability/efficiency trade-off | 🟠 HIGH (DT is core) |

| Naive Bayes | Conditional independence, feature relevance assumptions | 🟡 MEDIUM |

| NEAT | Mobile robot application, fitness function design | 🟠 HIGH (GA/NEAT is core) |

| Self-Supervised Learning | Pretext/downstream tasks | 🟢 LOW |

| Replay in Continual Learning | Stability-plasticity tradeoff | 🟢 LOW |

| CNN in Self-Driving | CNN application in autonomous vehicles | 🟡 MEDIUM |

Cross-Exam Patterns(跨卷规律总结)

Pattern 1: Repeated Questions(原题重复出现)

以下题目在多份试卷中几乎一模一样地出现:

| Question | S1 2025 Sample | S1 2025 Actual | S1 2026 Sample |

|---|---|---|---|

| $(I \wedge F) \rightarrow E$, $\neg E$ → Modus Tollens | ✅ | variant: $(P \vee Q) \rightarrow R$ | ✅ (with truth table) |

| FOL: “Not all birds can fly” | ✅ | variant: Cheat/Disqualified | ✅ |

| LNN HeatingOn ← Cold ⊗ AtHome | ✅ | variant: Bounds + OR | ✅ |

| KG embeddings + inference task | ✅ | variant: TransE formula | ✅ |

| Robot Soccer strategies | ✅ | — | ✅ |

| Random Forest feature bagging | ✅ | variant: CART greedy | ✅ |

| MYCIN backward chaining | ✅ | — | — |

| Fuzzy logic contrast | — | ✅ | — |

| Vagueness vs Uncertainty | — | — | ✅ |

| GA fitness function | — | ✅ | — |

💡 核心发现: Xinyu 喜欢在 Sample Test 和 Actual Test 之间做微调而非大改。Sample 就是 Actual 的预告片!

Pattern 2: Question Evolution(题目进化路径)

每个核心考点在不同年份有“升级版“:

Symbolic Logic 进化链:

S1 2025 Sample: (I∧F)→E, ¬E → 推理(无真值表要求)

S1 2025 Actual: (P∨Q)→R, ¬R → 推理 + FOL 组合

S1 2026 Sample: 同 2025 Sample 但要求画真值表,5 marks

→ 趋势: 从 “能推理” → “能推理 + 证明过程” → “能推理 + 证明 + 变体”

LNN 进化链:

S1 2025 Sample: 点值计算 (AND), 2 marks

S1 2025 Actual: 区间计算 (OR) + safety reasoning, 3 marks

S1 2026 Sample: 点值计算 (AND), 4 marks (deeper explanation)

→ 趋势: AND 和 OR 交替考,区间 vs 点值交替考

KG 进化链:

S1 2025 Sample: "Explain embeddings" (概念)

S1 2025 Actual: "Write TransE formula" (公式)

S1 2026 Sample: "Explain embeddings" (概念, 同2025 Sample)

→ 趋势: 概念和公式交替考。两手都要准备。

Pattern 3: Mark Allocation Trends

| Topic | 2025 Sample (15m) | 2025 Actual (15m) | 2026 Sample (20m) |

|---|---|---|---|

| Symbolic Logic | 3m (20%) | 2m (13%) | 5m (25%) |

| LNN | 2m (13%) | 3m (20%) | 4m (20%) |

| KG | 2m (13%) | 2m (13%) | 2m (10%) |

| Decision Trees/RF | 3m (20%) | 2m (13%) | 3m (15%) |

| Soft Computing/Fuzzy | — | 3m (20%) | 4m (20%) |

| Embodied AI/GA | 2m (13%) | 3m (20%) | 2m (10%) |

| Expert Systems | 3m (20%) | — | — |

Key insight: Symbolic Logic + LNN consistently take 35-45% of total marks. These two topics alone are worth nearly half the exam.

Pattern 4: Cognitive Level Distribution

| Level | Description | Typical % |

|---|---|---|

| Recall | Name strategies, list features | ~15% |

| Explain | Describe how/why something works | ~30% |

| Compute | Calculate t-norm, truth table, $\sqrt{p}$ | ~25% |

| Compare | Fuzzy vs Boolean, vagueness vs uncertainty | ~15% |

| Design | Fitness function, system strategy | ~15% |

Topic Priority Matrix for 2026 Mid-Semester(2026 期中复习优先级)

Based on cross-exam analysis, here is the definitive priority ranking:

| Priority | Topic | Expected Marks | Study Time |

|---|---|---|---|

| 🔴 MUST | Symbolic Logic (Modus Tollens + truth table + FOL) | 4-5m | 20% |

| 🔴 MUST | LNN (AND/OR, point/bounds, t-norm/co-norm) | 3-4m | 20% |

| 🔴 MUST | Knowledge Graphs (TransE, embeddings, inference) | 2m | 10% |

| 🔴 MUST | Decision Trees & Random Forest (greedy, bagging, $\sqrt{p}$) | 2-3m | 10% |

| 🔴 MUST | Soft Computing (vagueness vs uncertainty, fuzzy vs Boolean) | 3-4m | 15% |

| 🟠 HIGH | Embodied AI / Robot Soccer (strategies, centralized control) | 2m | 8% |

| 🟠 HIGH | GA / NEAT (fitness function design) | 2-3m | 10% |

| 🟠 HIGH | Expert Systems / MYCIN (backward chaining) | 0-3m | 5% |

| 🟡 MEDIUM | Naive Bayes (conditional independence) | 0-2m | 2% |

Exam Strategy Recommendations(应试策略建议)

Time Management(时间分配)

For a 20-mark, 55-minute exam:

- ~2.75 minutes per mark

- Q1 (5m): ~14 minutes

- Q2 (4m): ~11 minutes

- Q3 (2m): ~5.5 minutes

- Q4 (2m): ~5.5 minutes

- Q5 (3m): ~8 minutes

- Q6 (4m): ~11 minutes

Cheatsheet Priorities(A4 速查表优先写什么)

Your double-sided A4 page should include (in order of priority):

- Truth table templates — implication, AND, OR truth tables pre-drawn

- Modus Tollens + De Morgan — write the formulas

- T-norm / Co-norm formulas — all 3 variants for AND and OR

- LNN bounds computation — interval arithmetic rules

- TransE formula — $f(h,r,t) = |h + r - t|$

- $\sqrt{p}$ formula — for Random Forest feature bagging

- Vagueness vs Uncertainty — decision table with examples

- Backward vs Forward chaining — one-line definitions

- Fitness function template — multi-objective weighted sum

- Key FOL patterns — $\neg \forall x = \exists x \neg$

Answer Writing Tips(答题技巧)

- Show your work: 2026 version gives more marks for process (truth tables, step-by-step computation)

- Give concrete examples: “(Einstein, bornIn, ?) → Germany” > “it predicts missing links”

- Use the scenario: Refer back to the specific context (smart home, autonomous vehicle, etc.)

- Label your steps: “Step 1: … Step 2: … Therefore: …”

- Quality over quantity: The exam explicitly states this. Be concise and precise.

- When asked “why”: Give the mechanism, not just the outcome. “Feature bagging decorrelates trees, making ensemble averaging more effective at reducing variance.”

Appendix: Complete Question Index(完整题目索引)

For quick reference, every question across all papers:

| Paper | Q# | Marks | Topic | Key Task |

|---|---|---|---|---|

| 2025 Sample | Q1 | 3 | Symbolic Logic | Modus Tollens + FOL |

| 2025 Sample | Q2 | 2 | LNN | AND computation |

| 2025 Sample | Q3 | 2 | KG | Embeddings + inference |

| 2025 Sample | Q4 | 2 | Robot Soccer | List strategies |

| 2025 Sample | Q5 | 3 | Random Forest | Feature bagging |

| 2025 Sample | Q6 | 3 | MYCIN | Backward chaining |

| 2025 Actual | Q1 | 2 | Symbolic Logic | Modus Tollens (OR variant) + FOL |

| 2025 Actual | Q2 | 3 | LNN | Bounds + OR + safety |

| 2025 Actual | Q3 | 2 | KG / TransE | TransE formula |

| 2025 Actual | Q4 | 2 | Decision Trees | CART greedy |

| 2025 Actual | Q5 | 3 | Fuzzy Logic | Boolean vs Fuzzy |

| 2025 Actual | Q6 | 3 | GA / BigDog | Fitness function design |

| 2026 Sample | Q1 | 5 | Symbolic Logic | Truth table + FOL |

| 2026 Sample | Q2 | 4 | LNN | AND computation (deeper) |

| 2026 Sample | Q3 | 2 | KG | Embeddings + inference |

| 2026 Sample | Q4 | 2 | Robot Soccer | List strategies |

| 2026 Sample | Q5 | 3 | Random Forest | Feature bagging |

| 2026 Sample | Q6 | 4 | Vagueness vs Uncertainty | Classify 4 scenarios |

| 2024 Final | Q1 | 4 | Continual Learning | Concept drift + replay |

| 2024 Final | Q2 | 3 | Search | BFS vs UCS |

| 2024 Final | Q3 | 3 | MCTS | UCB1 formula |

| 2024 Final | Q4 | 1 | RL | State/action/policy/reward |

| 2024 Final | Q5 | 2 | GNN | Permutation invariance |

| 2024 Final | Q6 | 2 | Self-Supervised | Pretext vs downstream |

考点频率分布 — Topic Frequency Heat Map

基于全部可用考试卷的统计分析(S1 2025 Sample, S1 2025 Actual, S1 2026 Sample, S1 2024 Final)

📊 考点频率总览

| 知识模块 | 出现次数 | 总分占比 | 优先级 | 考查形式 |

|---|---|---|---|---|

| Symbolic Logic (PL + FOL + Modus Tollens) | 4/4 卷 | 13-25% | 🔴 必考 | 推理推导 + FOL 翻译 |

| Logic Neural Networks (Soft Logic + Truth Bounds) | 3/3 mid-tests | 13-20% | 🔴 必考 | 计算 + 概念对比 |

| Knowledge Graphs (TransE + Embeddings + Inference) | 3/3 mid-tests | 10-13% | 🔴 必考 | 概念解释 + 公式 |

| Decision Trees & Ensembles (CART + RF + Bagging) | 3/4 卷 | 10-20% | 🔴 必考 | 概念理解 + 应用 |

| Soft Computing (Fuzzy Logic + Vagueness vs Uncertainty) | 3/4 卷 | 15-20% | 🔴 必考 | 对比分析 + 场景分类 |

| NEAT & Genetic Algorithms | 2/4 卷 | 13-20% | 🟠 高频 | 适应度函数设计 |

| Embodied AI & Robot Soccer | 2/3 mid-tests | 10-13% | 🟠 高频 | 策略描述 |

| MYCIN / Expert Systems (Backward Chaining) | 1/3 mid-tests | 13-20% | 🟠 高频 | 推理过程描述 |

| Naïve Bayes | 1/4 卷 (final) | ~10% | 🟡 中频 | 假设解释 |

| Knowledge Representation (Frames, Semantic Nets, RBS) | 0 (直接考题) | — | 🟢 低频 | 间接考查 |

🎯 按考题编号统计(Mid-term Test 模式)

每份试卷固定 6 道简答题,主题分布如下:

| 题号 | S1 2025 Sample (15m) | S1 2025 Actual (15m) | S1 2026 Sample (20m) |

|---|---|---|---|

| Q1 | Symbolic Logic (3m) | Symbolic Logic (2m) | Symbolic Logic (5m) |

| Q2 | LNN (2m) | LNN (3m) | LNN (4m) |

| Q3 | Knowledge Graphs (2m) | Knowledge Graphs (2m) | Knowledge Graphs (2m) |

| Q4 | Robot Soccer (2m) | Decision Trees (2m) | Robot Soccer (2m) |

| Q5 | Random Forest (3m) | Fuzzy Logic (3m) | Random Forest (3m) |

| Q6 | MYCIN/Backward Chaining (3m) | GA/Fitness Function (3m) | Vagueness vs Uncertainty (4m) |

规律总结:

- Q1 永远是 Symbolic Logic(分值从 2→5 递增!)

- Q2 永远是 LNN(分值从 2→4 递增!)

- Q3 永远是 Knowledge Graphs / TransE

- Q4-Q6 在以下主题中轮换:Decision Trees/RF、Soft Computing/Fuzzy、NEAT/GA、Robot Soccer、MYCIN

📈 分值趋势分析

2025 Sample (15m): Logic(3) + LNN(2) + KG(2) + Robot(2) + RF(3) + MYCIN(3)

2025 Actual (15m): Logic(2) + LNN(3) + KG(2) + DT(2) + Fuzzy(3) + GA(3)

2026 Sample (20m): Logic(5) + LNN(4) + KG(2) + Robot(2) + RF(3) + V/U(4)

关键发现:

- 2026 总分从 15→20,额外的 5 分主要加在了 Logic (+2) 和 LNN (+2) 上

- Symbolic Logic 要求从“代数推导“升级为“完整真值表“

- Vagueness vs Uncertainty 是新增的高分考点(4分)

⏰ 建议复习时间分配(基于频率和分值)

| 模块 | 建议时间占比 | 理由 |

|---|---|---|

| Symbolic Logic | 20% | 每卷必考,分值最高(最多 5m),需要熟练掌握真值表 + Modus Tollens |

| LNN | 15% | 每卷必考,分值递增,计算和概念并重 |

| Soft Computing (Fuzzy + Bayes + V/U) | 15% | 高频,vagueness vs uncertainty 新增为高分考点 |

| Decision Trees & Ensembles | 15% | 高频,重点理解“为什么“ (greedy, feature bagging) |

| Knowledge Graphs & TransE | 10% | 每卷必考但分值稳定在 2m,概念性强 |

| NEAT & GA | 10% | 高频,重点是 fitness function 设计 |

| Embodied AI & Robot Soccer | 10% | 中频,概念性强,答题灵活 |

| MYCIN & Expert Systems | 5% | 低频但重要概念(backward chaining) |

🧩 考点共现分析

以下概念经常在同一道题或同一考卷中一起出现:

| 概念组合 | 出现模式 | 意义 |

|---|---|---|

| Modus Tollens + De Morgan’s | 每道 Q1 | 先否定后件,再展开否定前件 |

| LNN + Soft Logic AND | 每道 Q2 | LNN 的核心计算依赖 Product-Sum AND |

| LNN Bounds + Safety-Critical | 2025 Actual | bounds 在自动驾驶中的应用 |

| TransE + Link Prediction | 每道 Q3 | h+r≈t 用于预测缺失链接 |

| Fuzzy vs Traditional Logic | 2025 Actual | 同一规则在两种体系下的对比 |

| GA + Embodied AI | 2025 Actual | 用 GA 训练 BigDog 机器人控制器 |

| Vagueness vs Uncertainty | 2026 Sample | 四个场景分类 |

| Bagging + Feature Bagging | 每道 RF 题 | 两者缺一不可 |

🎯 Cheatsheet 优先级排序

根据出题频率和分值,你的双面 A4 手写笔记应该包含以下内容(按重要性排序):

必须写(占据 60% 空间)

- Modus Tollens 公式 + De Morgan’s Laws + 两种 premise 结构的展开方式

- 完整的 implication 真值表 (4 行)

- LNN Soft Logic (Product-Sum): AND = A×B, OR = A+B-AB, NOT = 1-A

- LNN Truth Bounds 分类规则 (L≥α → TRUE, U≤α → FALSE, etc.)

- OR bounds: L=max(L₁,L₂), U=max(U₁,U₂)

- TransE: h+r≈t, f(h,r,t) = ||h+r-t||

- Entropy: H(X) = -Σp(x)log₂p(x), IG = H(Y) - H(Y|X)

- Gini: G(D) = 1 - Σpᵢ²

- Fuzzy: AND=min, OR=max, NOT=1-μ

- Vagueness vs Uncertainty 判断流程图

建议写(占据 30% 空间)

- Bayes’ Theorem: P(H|e) = P(e|H)P(H)/P(e)

- Naïve Bayes: P(C|x) ∝ P(C)ΠP(xᵢ|C)

- CART = greedy (no look-ahead)

- RF = Bagging + Feature Bagging (√features)

- CF(conclusion) = CF(premise) × CF(rule)

- Forward vs Backward Chaining 对比

- NEAT: speciation distance δ = c₁E/N + c₂D/N + c₃W̄

- Flocking 3 rules: separation, cohesion, alignment

如果有空间(占据 10%)

- FOL 量词否定: ¬∀x P(x) ≡ ∃x ¬P(x)

- STEAM: Joint Persistent Goal (A/U/I)

- Ontology vs KG 区别

- RDF triple 格式

命题风格分析 — Teacher Style Analysis

Instructor: Xinyu Zhang | Course: COMPSCI 713 AI Fundamentals | S1 2026 基于全部可用考试卷分析(S1 2025 Sample, S1 2025 Actual, S1 2026 Sample, S1 2024 Final)

👤 教师信息

- Instructor: Xinyu Zhang (School of Computer Science, University of Auckland)

- Course: COMPSCI 713: AI Fundamentals, S1 2026

- Website: zhangxinyu-xyz.github.io

- 另一位出题者: Thomas (负责 Part 2 — 深度学习/RL/LLM 相关,与 Xinyu 的 Part 1 分开考)

🎯 出题风格总结

1. 偏好应用场景题(Application-Based Scenarios)

Xinyu 的题目几乎从不直接问定义。每道题都嵌入在一个具体场景中:

| 场景类型 | 出现频率 | 具体例子 |

|---|---|---|

| 安全/门禁系统 | 3 次 | Secure facility (I∧F→E), smart office alarm (P∨Q→R) |

| 智能家居 | 2 次 | Smart home heating (LNN HeatingOn) |

| 自动驾驶 | 1 次 | Autonomous vehicle collision alert (LNN bounds) |

| 医疗诊断 | 1 次 | Runny nose backward chaining |

| 体育/健身 | 1 次 | Hammer thrower (fuzzy logic) |

| 机器人 | 2 次 | Robot soccer, BigDog walking |

| 金融/商业 | 1 次 | Stock prediction (random forest) |

应对策略:不要死记硬背定义,要练习在新场景中应用概念。

2. 偏好“为什么“而非“是什么“

典型问法:

- “Why is feature bagging considered a good idea?” (不是 “What is feature bagging?”)

- “What exactly is meant by saying CART is ‘greedy’?” (不是 “Define CART”)

- “Why is using bounds beneficial in safety-critical applications?” (不是 “What are bounds?”)

应对策略:准备好每个概念的原因和动机,不仅仅是定义。

3. 重视对比分析(Contrast & Compare)

高频出题模式:

- Boolean logic vs LNN soft logic(每卷 Q2)

- Traditional logic vs Fuzzy logic(2025 Actual Q5)

- Vagueness vs Uncertainty(2026 Sample Q6)

- Decision tree vs Decision forest(2024 Final)

- Forward chaining vs Backward chaining(隐含在 MYCIN 题中)

应对策略:准备好两栏对比表格,考试时直接画表回答。

4. 计算题轻量但必须准确

- LNN: 0.9 × 0.4 = 0.36 这种简单乘法

- Entropy/Gini: 不会给太复杂的数据,但公式必须正确

- TransE: 概念性理解 h+r≈t 即可,不需要实际向量计算

应对策略:把公式写在 cheatsheet 上,考试时代入数字即可。

5. 评分标准:Quality over Quantity

考试说明明确写道:

“We privilege quality over quantity, i.e., you do not need to write very long answers. Be concise and clear.”

| 答题长度建议 | 分值 | 建议写法 |

|---|---|---|

| 1 mark | 1-2 句话 | 一个关键点,直接命中 |

| 2 marks | 3-4 句话或一个小段落 | 两个关键点 + 简短解释 |

| 3 marks | 一个段落或结构化回答 | 三个关键点 + 各自解释 |

| 4-5 marks | 结构化回答 + 例子/表格 | 多个关键点 + 具体例子 + 对比 |

6. 常用句式模式

Xinyu 的题目经常使用以下句式:

- “Use propositional logic to deduce what must be true about X and Y.” → 用 Modus Tollens + De Morgan’s

- “What does this rule represent in natural language, and how is it different from…” → 翻译 + 对比

- “Explain how the LNN would likely compute…” → 写出计算步骤

- “Contrast how the above rule might work using traditional logic as compared to…” → 画对比表

- “For each of the following situations, state whether it is mainly…” → 分类 + 简短理由

- “Describe one strategy or collective behaviour…” → 从课堂内容中选一个,解释清楚

- “Name the elements that should be part of the fitness function…” → 列出 3-5 个关键要素

🔄 题目进化趋势(2025 → 2026)

| 变化维度 | 2025 | 2026 预测 |

|---|---|---|

| 总分 | 15 marks | 20 marks |

| Logic 分值 | 2-3 marks | 5 marks(要求真值表) |

| LNN 分值 | 2-3 marks | 4 marks(更深入) |

| 新增考点 | — | Vagueness vs Uncertainty (4m) |

| 难度 | 中等 | 稍有提升(需要更完整的推导过程) |

| 时间压力 | 55min/15m ≈ 3.7min/mark | 55min/20m = 2.75min/mark |

关键发现:2026 时间更紧了!每分只有 2.75 分钟,比 2025 的 3.7 分钟少了约 25%。必须更加简洁高效。

⚠️ 常见陷阱与扣分点

| 陷阱 | 说明 | 正确做法 |

|---|---|---|

| 混淆 ¬(A∧B) 和 ¬(A∨B) | De Morgan’s 展开方向不同 | ¬(A∧B)=¬A∨¬B, ¬(A∨B)=¬A∧¬B |

| 混淆 vagueness 和 uncertainty | “high risk” 是 vagueness,“is there a burglary?” 是 uncertainty | 问“概念本身有没有模糊边界“ |

| 忘记说 CART 是 “no look-ahead” | 只说 “maximizes impurity reduction” 只能拿一半分 | 必须强调 greedy = no look-ahead |

| LNN 中混淆 Product-Sum 和 min/max | 考题用 Product-Sum AND (A×B),fuzzy 用 min | 看题目指定的是哪种运算 |

| Feature bagging 只说 “random features” | 需要说明目的是 decorrelate trees | 解释为什么:防止 dominant feature 总是做 root |

| Backward chaining 不说 “start from goal” | 关键是从假设出发 | 明确说 “start with hypothesis, find support” |

| TransE 忘记说 “smaller score = more likely” | 这是 distance-based score | f(h,r,t) = ||h+r-t||, 越小越好 |

📝 高分答题策略

1. 结构化回答

对于 2-3 分的题,使用:

[1句总结] + [关键点1 + 解释] + [关键点2 + 解释]

2. 对比题用表格

| Aspect | Method A | Method B |

|--------|---------|---------|

| ... | ... | ... |

3. 计算题写出每一步

Given: Cold = 0.9, AtHome = 0.4

AND (Product-Sum) = 0.9 × 0.4 = 0.36

If threshold α = 0.5, then 0.36 < 0.5 → heating NOT activated

4. 推理题用链式推导

Given: ¬E

Rule: (I ∧ F) → E

By Modus Tollens: ¬E → ¬(I ∧ F)

By De Morgan's: ¬(I ∧ F) = ¬I ∨ ¬F

∴ Either ¬I or ¬F (or both)

5. 时间管理(2026 格式,20 marks / 55 min)

| 题号 | 预期分值 | 建议时间 | 策略 |

|---|---|---|---|

| Q1 (Logic) | 5m | 12 min | 先写代数推导,再补真值表 |

| Q2 (LNN) | 4m | 10 min | 先翻译,再计算,最后对比 |

| Q3 (KG) | 2m | 5 min | TransE 公式 + 一个例子 |

| Q4 (轮换) | 2m | 5 min | 从课堂内容选一个策略展开 |

| Q5 (轮换) | 3m | 8 min | 结构化回答,每个分点一段 |

| Q6 (轮换) | 4m | 10 min | 每个子题 2-3 句,确保覆盖评分点 |

| 检查 | — | 5 min | 检查计算和 De Morgan’s 方向 |

Symbolic Logic – Propositional & First-Order Logic

🎯 Exam Importance

🔴 GUARANTEED TO APPEAR | Every single test paper has a logic question as Q1

| Test Paper | Question | Marks | Sub-topics |

|---|---|---|---|

| S1 2025 Sample Test | Q1 (3 marks / 15 total = 20%) | 1(a) Modus Tollens + De Morgan’s on $(I \wedge F) \rightarrow E$; 1(b) FOL translation $\neg\forall x, \text{Fly}(x)$ + example | |

| S1 2025 Actual Test | Q1 (2 marks / 15 total = 13%) | 1(a) Modus Tollens + De Morgan’s on $(P \vee Q) \rightarrow R$; 1(b) FOL Modus Tollens with $\forall x(\text{Cheat}(x) \rightarrow \text{Disqualified}(x))$ | |

| S1 2026 Sample Test | Q1 (5 marks / 20 total = 25%) | 1(a) Same $(I \wedge F) \rightarrow E$ but requires full truth table (3 marks); 1(b) Same FOL $\neg\forall x, \text{Fly}(x)$ |

Key observation: The question has been worth 2–5 marks across papers, and the 2026 sample tripled the propositional logic marks by requiring a full truth table. Prepare for both approaches (algebraic deduction AND truth table verification).

📖 Core Concepts (Quick Reference Table)

| English Term | 中文 | One-line Definition |

|---|---|---|

| Propositional Logic(命题逻辑) | 命题逻辑 | Deals with statements that are TRUE or FALSE, combined with logical connectives |

| First-Order Logic / FOL(一阶逻辑) | 一阶逻辑 | Extends propositional logic with variables, quantifiers ($\forall$, $\exists$), predicates, and functions |

| Atomic Proposition(原子命题) | 原子命题 | A basic statement with binary value: true or false (e.g., “It is raining”) |

| Connective(逻辑联结词) | 联结词 | Operators: $\neg$ (NOT), $\wedge$ (AND), $\vee$ (OR), $\rightarrow$ (IMPLIES), $\leftrightarrow$ (IFF) |

| Interpretation(解释/赋值) | 解释 | A function $\pi$ that assigns true/false to every atomic proposition |

| Tautology(重言式) | 重言式 | A formula that is true under every possible interpretation |

| Logical Implication(逻辑蕴含) | 逻辑蕴含 | $A \Rightarrow B$: for every interpretation where A is true, B must also be true |

| Logical Equivalence(逻辑等值) | 逻辑等值 | $A \Leftrightarrow B$: A and B have the same truth value under every interpretation |

| Modus Ponens(肯定前件) | 肯定前件 | From $P$ and $P \rightarrow Q$, conclude $Q$ |

| Modus Tollens(否定后件) | 否定后件 | From $P \rightarrow Q$ and $\neg Q$, conclude $\neg P$ |

| Syllogism(三段论) | 三段论 | From $(A \rightarrow B)$ and $(B \rightarrow C)$, conclude $(A \rightarrow C)$ |

| Material Implication(实质蕴含) | 实质蕴含 | $A \rightarrow B$ is false ONLY when A is true and B is false |

| Vacuous Truth(空真) | 空真 | When the premise is false, implication is always true |

| De Morgan’s Laws(德摩根定律) | 德摩根律 | $\neg(A \wedge B) \equiv \neg A \vee \neg B$ and $\neg(A \vee B) \equiv \neg A \wedge \neg B$ |

| Universal Quantifier(全称量词) | 全称量词 | $\forall x$: “for all x in the domain” |

| Existential Quantifier(存在量词) | 存在量词 | $\exists x$: “there exists at least one x” |

| Bound Variable(约束变量) | 约束变量 | A variable within the scope of a quantifier ($\forall x$ or $\exists x$) |

| Free Variable(自由变量) | 自由变量 | A variable NOT within any quantifier’s scope |

| Sentence(语句) | 语句 | A formula with NO free variables |

| Signature(签名) | 签名 | The vocabulary of a FOL language: its relation and function symbols |

| Domain(论域) | 论域 | The set of objects that variables range over in a FOL interpretation |

🧠 Feynman Draft – Learning From Scratch

Part 1: Propositional Logic

Imagine you are a security guard at a building entrance. Your job manual has simple rules written as “if… then…” statements. Each fact is either TRUE or FALSE – no grey areas, no “maybe.” Your entire job is to follow the rules and figure out what must be true.

For example, your manual says:

“If the person has a valid ID and their fingerprint matches, then grant entry.”

In symbols: $(I \wedge F) \rightarrow E$

Now, suppose today the person was denied entry ($\neg E$). What can you figure out?

Think of it this way: the rule promises that having both ID and fingerprint match guarantees entry. The person was NOT granted entry. So the guarantee must not have kicked in – meaning they did NOT have both. Either no valid ID, or no fingerprint match, or both were missing.

This reasoning is called Modus Tollens(否定后件): if the consequence didn’t happen, the premise couldn’t have been fully satisfied.

$$P \rightarrow Q, \quad \neg Q \quad \Longrightarrow \quad \neg P$$

But wait – what does “not both” mean precisely?

$\neg(I \wedge F)$ means “it’s not the case that BOTH are true.” By De Morgan’s Law, this equals $\neg I \vee \neg F$ – “at least one of them is false.”

This is exactly how every exam question on this topic works. Every. Single. One.

Part 2: The Implication Trap

Here is the single most confusing thing in propositional logic, and the lecture opens with it:

“If it rains today, I will bring an umbrella.” ($P \rightarrow Q$)

You see the person carrying an umbrella ($Q$ is true). Can you conclude it is raining ($P$)?

NO! $Q \rightarrow P$ is NOT the same as $P \rightarrow Q$. The person might just like carrying umbrellas. This mistake is called Affirming the Consequent(肯定后件谬误) – the lecture slide 4-5 opens with exactly this example.

Here is the full truth table for implication:

| $P$ | $Q$ | $P \rightarrow Q$ |

|---|---|---|

| T | T | T |

| T | F | F $\leftarrow$ the ONLY row where it’s false |

| F | T | T $\leftarrow$ vacuous truth |

| F | F | T $\leftarrow$ vacuous truth |

The key insight: $P \rightarrow Q$ is false ONLY when P is true and Q is false.

Why is $\text{false} \rightarrow \text{anything}$ true? Think of it as a promise: “If it rains, I’ll bring an umbrella.” If it doesn’t rain, I haven’t broken my promise regardless of whether I carry an umbrella. The promise is only broken when rain happens and no umbrella appears.

⚠️ Common Misconception: Students think $P \rightarrow Q$ means “P causes Q” or “P and Q are related.” It does NOT. Material implication is purely about truth values. “If pigs fly, then I am the Queen of England” is technically TRUE because the premise is false. This is called vacuous truth(空真).

Part 3: First-Order Logic

Propositional logic treats facts as indivisible boxes – “it is raining” is one atomic unit. But what if you need to say something about many things at once?

Imagine you are a biologist studying birds. You want to express: “Not all birds in this region can fly.” In propositional logic, you would need a separate proposition for each bird – $\text{Fly}(\text{robin})$, $\text{Fly}(\text{kiwi})$, $\text{Fly}(\text{penguin})$, etc. If you have 1000 birds, you need 1000 propositions. This is the verbosity problem(冗余问题).

First-order logic fixes this by introducing:

- Objects: things in your world (birds, people, squares in Wumpus World)

- Predicates (relations): properties of objects ($\text{Fly}(x)$, $\text{Pit}(x,y)$)

- Functions: mappings from objects to objects ($\text{left}(x,y)$, $\text{fatherOf}(x)$)

- Quantifiers: $\forall$ (“for all”) and $\exists$ (“there exists”)

So “Not all birds can fly” becomes simply: $\neg \forall x, \text{Fly}(x)$

Which is equivalent to: $\exists x, \neg\text{Fly}(x)$ – “there exists a bird that cannot fly.”

⚠️ Common Misconception: Students write $\forall x, \neg\text{Fly}(x)$ for “not all birds fly.” This is WRONG – it means “NO bird can fly” (way too strong). The negation must go OUTSIDE the quantifier: $\neg\forall x, \text{Fly}(x)$.

⚠️ Common Misconception: With $\forall$, use $\rightarrow$ (implication) not $\wedge$. “Every student cheats” is $\forall x (\text{Student}(x) \rightarrow \text{Cheat}(x))$, NOT $\forall x (\text{Student}(x) \wedge \text{Cheat}(x))$. The latter says “everything is both a student AND a cheater” – it claims your dog is a cheating student!

💡 Core Intuition: Propositional logic is about combining true/false statements with connectives; FOL adds the power to talk about “all” and “some” objects in a domain using quantifiers and predicates.

📐 Formal Definitions

Propositional Logic – Complete Syntax and Semantics

Syntax (from lecture slide 15):

- Atomic propositions (atoms): $\text{Atom} = {X_1, \ldots, X_k}$, each with domain ${\text{true}, \text{false}}$ (or ${0, 1}$).

- Compound propositions are built using connectives: $\neg A$, $(A \vee B)$, $(A \wedge B)$, $(A \rightarrow B)$, $(A \leftarrow B)$, $(A \leftrightarrow B)$

Semantics:

An interpretation $\pi : \text{Atom} \rightarrow {\text{true}, \text{false}}$ assigns truth values to all atoms. The truth value of any compound proposition is determined by the following table:

Master Truth Table (MEMORIZE THIS)

| $A$ | $B$ | $\neg A$ | $A \wedge B$ | $A \vee B$ | $A \rightarrow B$ | $A \leftarrow B$ | $A \leftrightarrow B$ |

|---|---|---|---|---|---|---|---|

| T | T | F | T | T | T | T | T |

| T | F | F | F | T | F | T | F |

| F | T | T | F | T | T | F | F |

| F | F | T | F | F | T | T | T |

Key observations:

- $A \rightarrow B$ is false ONLY in row 2 (A true, B false)

- $A \leftrightarrow B$ is true when A and B have the SAME value

- $A \leftarrow B$ is the “reverse implication” ($B \rightarrow A$)

Complete Logical Equivalence Laws (from lecture slide 22)

These are your tools for algebraic manipulation. The exam requires you to cite which law you use.

| Law Name | Equivalence |

|---|---|

| Double Negation | $\neg\neg A \Leftrightarrow A$ |

| Commutative | $(A \wedge B) \Leftrightarrow (B \wedge A)$; $(A \vee B) \Leftrightarrow (B \vee A)$ |

| Associative | $(A \wedge (B \wedge C)) \Leftrightarrow ((A \wedge B) \wedge C)$; same for $\vee$ |

| Distributive | $(A \wedge (B \vee C)) \Leftrightarrow ((A \wedge B) \vee (A \wedge C))$; $(A \vee (B \wedge C)) \Leftrightarrow ((A \vee B) \wedge (A \vee C))$ |

| Idempotent | $(A \wedge A) \Leftrightarrow A$; $(A \vee A) \Leftrightarrow A$ |

| De Morgan’s | $\neg(A \wedge B) \Leftrightarrow (\neg A \vee \neg B)$; $\neg(A \vee B) \Leftrightarrow (\neg A \wedge \neg B)$ |

| Implication | $(A \rightarrow B) \Leftrightarrow (\neg A \vee B)$; $(A \rightarrow B) \Leftrightarrow (\neg A \wedge \neg B) \vee B$ … simplified: $\neg A \vee B$ |

| Contrapositive | $(A \rightarrow B) \Leftrightarrow (\neg B \rightarrow \neg A)$ |

| Contradiction | $(A \vee (B \wedge \neg B)) \Leftrightarrow A$ |

| Absorption | $A \Leftrightarrow (A \wedge (A \vee B))$; $A \Leftrightarrow (A \vee (A \wedge B))$ |

| Equivalence | $(A \leftrightarrow B) \Leftrightarrow ((A \rightarrow B) \wedge (B \rightarrow A))$; $(A \leftrightarrow B) \Leftrightarrow ((A \wedge B) \vee (\neg A \wedge \neg B))$ |

Logical Implication vs. Material Implication

This distinction is subtle and important (lecture slide 21):

- Material implication ($A \rightarrow B$): a connective inside a formula. It has a truth value.

- Logical implication ($A \Rightarrow B$): a meta-statement about formulas. It means: for EVERY interpretation $\pi$, if $\pi(A) = \text{true}$ then $\pi(B) = \text{true}$.

Verification methods:

- Truth table: check that every row where A is true also has B true

- Equivalent test: $A \Rightarrow B$ if and only if $A \rightarrow B$ is a tautology

Key Inference Rules (from lecture slide 21)

$$\text{Modus Ponens: } ((A \rightarrow B) \wedge A) \Rightarrow B$$

$$\text{Modus Tollens: } ((A \rightarrow B) \wedge \neg B) \Rightarrow \neg A$$

$$\text{Syllogism: } ((A \rightarrow B) \wedge (B \rightarrow C)) \Rightarrow (A \rightarrow C)$$

First-Order Logic – Complete Syntax and Semantics

Three building blocks (lecture slide 25):

- Objects: people, houses, numbers, grid squares, …

- Relations (Predicates): properties or relationships – unary ($\text{Red}(x)$), binary ($\text{Adjacent}(x,y)$), n-ary

- Functions: mappings that produce objects – $\text{fatherOf}(x)$, $\text{left}(x,y)$

Signature (lecture slide 29): the vocabulary $S = {R_1, \ldots, R_k, f_1, \ldots, f_\ell}$ – the set of relation and function symbols.

Terms (lecture slide 29):

- Every variable is a term: $x, y, z$

- Every constant is a term: $1, 2, \text{Alice}$ (a constant is a 0-ary function)

- If $f$ is a function of arity $r$ and $t_0, \ldots, t_{r-1}$ are terms, then $f(t_0, \ldots, t_{r-1})$ is a term

- A ground term has no variables (all constants/applied functions on constants)

Formulas (lecture slide 30):

- Atomic: $t_0 = t_1$ (equality) or $R(t_0, \ldots, t_{n-1})$ (predicate applied to terms)

- Compound: built from atomic formulas using $\neg, \wedge, \vee, \rightarrow, \leftrightarrow$ and quantifiers $\forall x, \exists x$

Free vs. Bound Variables (lecture slide 32):

- A variable $x$ is bound if it appears within $\forall x : \varphi$ or $\exists x : \varphi$

- A variable $x$ is free if it is not within any quantifier’s scope

- A sentence is a formula with NO free variables

Satisfaction Relation (lecture slide 33): $I \vDash \varphi$ means interpretation $I$ satisfies formula $\varphi$:

- $I \vDash \forall x : \varphi$ iff for ALL $a \in D$, $I[x/a] \vDash \varphi$

- $I \vDash \exists x : \varphi$ iff there is SOME $a \in D$ such that $I[x/a] \vDash \varphi$

Quantifier Negation Laws (De Morgan’s for Quantifiers)

$$\neg \forall x, \varphi(x) \equiv \exists x, \neg\varphi(x)$$ $$\neg \exists x, \varphi(x) \equiv \forall x, \neg\varphi(x)$$

Additional FOL equivalences (lecture slide 34): $$\neg\neg\exists x : \varphi(x) \equiv \forall x : \neg\varphi(x) \quad [\text{Double negation + quantifier swap}]$$ $$\exists x : (\varphi_1(x) \vee \varphi_2(x)) \equiv (\exists x : \varphi_1(x)) \vee (\exists x : \varphi_2(x))$$ $$\forall x : (\varphi_1(x) \wedge \varphi_2(x)) \equiv (\forall x : \varphi_1(x)) \wedge (\forall x : \varphi_2(x))$$ $$\neg\forall x : (\varphi_1(x) \rightarrow \varphi_2(x)) \equiv \exists x : (\varphi_1(x) \wedge \neg\varphi_2(x))$$

🔄 Mechanisms & Derivations – The Exam Algorithms

Algorithm 1: Modus Tollens with De Morgan’s (The Core Exam Pattern)

This is the algorithm you will execute in 100% of logic exam questions. Master it completely.

Input: A rule $P \rightarrow Q$ and an observation $\neg Q$

Steps:

- Identify the structure: What is $P$? What is $Q$? (P is often compound, e.g., $I \wedge F$ or $P \vee Q$)

- Apply Modus Tollens: From $P \rightarrow Q$ and $\neg Q$, conclude $\neg P$

- Simplify $\neg P$ using De Morgan’s Law:

- If $P = (A \wedge B)$: $\neg(A \wedge B) = \neg A \vee \neg B$ (“at least one is false”)

- If $P = (A \vee B)$: $\neg(A \vee B) = \neg A \wedge \neg B$ (“BOTH are false”)

- State the conclusion in natural language

Algorithm 2: Truth Table Verification (Required in S1 2026 Sample)

The 2026 sample test explicitly asks “Show your steps (Truth Table) clearly” for 3 marks. Here is the exact procedure:

Step 1: Write the truth table for $X \rightarrow E$ where $X = I \wedge F$ (1 mark):

| $X$ ($I \wedge F$) | $E$ | $X \rightarrow E$ |

|---|---|---|

| 0 | 0 | 1 |

| 0 | 1 | 1 |

| 1 | 0 | 0 $\leftarrow$ violates the rule |

| 1 | 1 | 1 |

Step 2: Since $\neg E$ (E = 0) and $X \rightarrow E$ must be true, look at rows where E = 0. Only row 1 satisfies both conditions. Therefore $X = I \wedge F = 0$ (1 mark).

Step 3: Write the truth table for $I \wedge F$ to determine what $I \wedge F = 0$ means (1 mark):

| $I$ | $F$ | $I \wedge F$ |

|---|---|---|

| 0 | 0 | 0 ✓ |

| 0 | 1 | 0 ✓ |

| 1 | 0 | 0 ✓ |

| 1 | 1 | 1 ✗ |

Conclusion: At least one of I, F must be 0. The person either didn’t have valid ID, or fingerprint didn’t match (or both).

Algorithm 3: FOL Translation

Input: An English sentence

Steps:

- Identify the domain: What set of objects are we talking about?

- Define predicates: What properties/relations are relevant?

- Identify the quantifier: “all”/“every” $\rightarrow$ $\forall$; “some”/“exists”/“not all” $\rightarrow$ involves $\exists$

- Construct the formula:

- “Every X that has property A also has property B” $\rightarrow$ $\forall x, (A(x) \rightarrow B(x))$

- “Not all X have property A” $\rightarrow$ $\neg\forall x, A(x)$ or equivalently $\exists x, \neg A(x)$

- “Some X has property A” $\rightarrow$ $\exists x, A(x)$

- Verify: Read the formula back in English to check

Algorithm 4: FOL Modus Tollens (S1 2025 Actual Test Pattern)

Input: A universal rule $\forall x, (P(x) \rightarrow Q(x))$ and a fact $\neg Q(a)$ for a specific object $a$

Steps:

- Universal Instantiation: From $\forall x, (P(x) \rightarrow Q(x))$, substitute $x = a$: $P(a) \rightarrow Q(a)$

- Apply Modus Tollens: From $P(a) \rightarrow Q(a)$ and $\neg Q(a)$, conclude $\neg P(a)$

- State conclusion: Object $a$ does not have property P

⚖️ Trade-offs & Comparisons

Propositional Logic vs First-Order Logic

| Aspect | Propositional Logic | First-Order Logic |

|---|---|---|

| Building blocks | Atomic propositions (P, Q, R) | Objects, predicates, functions, quantifiers |

| Expressiveness | LOW – can’t say “for all” or “there exists” | HIGH – quantifiers over objects |

| Decidability | Always decidable (finite truth table) | Semi-decidable (may not terminate) |

| Verbosity | HIGH for real-world domains (need one prop per fact) | LOW – one formula can express rules about all objects |

| Use in AI | Simple rule engines, circuit design, Wumpus World basics | Knowledge bases, expert systems, theorem proving |

| Example | $(I \wedge F) \rightarrow E$ | $\forall x, (\text{Student}(x) \rightarrow \text{HasExam}(x))$ |

Modus Ponens vs Modus Tollens vs Converse Error

| Modus Ponens | Modus Tollens | Converse Error (INVALID!) | |

|---|---|---|---|

| Given | $P \rightarrow Q$ and $P$ | $P \rightarrow Q$ and $\neg Q$ | $P \rightarrow Q$ and $Q$ |

| Conclude | $Q$ ✅ | $\neg P$ ✅ | $P$ ❌ WRONG |

| Direction | Forward reasoning | Backward reasoning | Fallacy |

| Example | Rain $\rightarrow$ Wet. Rain. $\therefore$ Wet. | Rain $\rightarrow$ Wet. Not wet. $\therefore$ Not rain. | Rain $\rightarrow$ Wet. Wet. $\therefore$ Rain?? (sprinkler!) |

| Exam status | Not directly tested | Tested EVERY exam | Tested as motivation (lecture slide 4-5) |

De Morgan’s: $\wedge$ vs $\vee$ Negation

| Original | Negated | Result | Intuition |

|---|---|---|---|

| $A \wedge B$ (both true) | $\neg(A \wedge B)$ | $\neg A \vee \neg B$ (at least one false) | Breaking an AND gives OR |

| $A \vee B$ (at least one true) | $\neg(A \vee B)$ | $\neg A \wedge \neg B$ (both false) | Breaking an OR gives AND |

Memory trick: negation “flips” the connective ($\wedge \leftrightarrow \vee$) and negates each operand.

$\forall$ with $\rightarrow$ vs $\exists$ with $\wedge$ (Critical FOL Pattern)

| Statement | Correct FOL | Common WRONG Version | Why wrong |

|---|---|---|---|

| “Every student is enrolled” | $\forall x, (\text{Student}(x) \rightarrow \text{Enrolled}(x))$ | $\forall x, (\text{Student}(x) \wedge \text{Enrolled}(x))$ | Claims everything in domain is both a student AND enrolled |

| “Some student is happy” | $\exists x, (\text{Student}(x) \wedge \text{Happy}(x))$ | $\exists x, (\text{Student}(x) \rightarrow \text{Happy}(x))$ | Vacuously true for any non-student object |

Rule of thumb: $\forall$ pairs with $\rightarrow$; $\exists$ pairs with $\wedge$.

🏗️ Design Question Framework

If asked to model a scenario using symbolic logic:

WHAT: Define the propositions/predicates and their English meanings

- List each atomic proposition or predicate with a clear one-line definition

- Specify the domain for FOL

WHY: Why use formal logic here?

- Precise and unambiguous (unlike natural language)

- Machine-verifiable (automated reasoning)

- Supports inference: derive new facts from existing rules

HOW: Write the rules as logical formulas

- Express each rule using connectives and quantifiers

- Show at least one inference step (Modus Ponens or Modus Tollens)

TRADE-OFF: Discuss limitations

- Propositional logic: verbose, can’t express “for all”

- FOL: more expressive but semi-decidable

- Both: can’t handle uncertainty (need fuzzy logic / LNN for soft values)

EXAMPLE: Demonstrate with a concrete instance

- Show a specific inference with your rules

📝 Exam Questions – Complete Collection with Model Answers

===== EXAM Q1: S1 2025 Sample Test Q1(a) – 1 mark =====

Question: In a secure facility, $(I \wedge F) \rightarrow E$. The person was not granted entry ($\neg E$). Deduce what must be true about I and F.

Model Answer:

Given: $(I \wedge F) \rightarrow E$ and $\neg E$.

By Modus Tollens: $\neg E \Rightarrow \neg(I \wedge F)$.

By De Morgan’s Law: $\neg(I \wedge F) \equiv \neg I \vee \neg F$.

Conclusion: The person either did not have a valid ID ($\neg I$) or the fingerprint did not match ($\neg F$), or both.

Marking note: 1 mark for correct application of Modus Tollens + De Morgan’s + stating conclusion.

===== EXAM Q2: S1 2025 Sample Test Q1(b)(i) – 1 mark =====

Question: A biologist claims “Not all birds in this region can fly.” Domain: all birds in the region. $\text{Fly}(x)$ = bird x can fly. Write in FOL.

Model Answer:

$$\neg \forall x, \text{Fly}(x)$$

Equivalently: $\exists x, \neg\text{Fly}(x)$

Marking note: Either form accepted for full mark.

===== EXAM Q3: S1 2025 Sample Test Q1(b)(ii) – 1 mark =====

Question: Provide a realistic example (one sentence) that would make the statement true.

Model Answer:

“There is a penguin in this region, and penguins cannot fly.”

Marking note: Any concrete example naming a flightless bird (penguin, kiwi, ostrich, emu) is acceptable.

===== EXAM Q4: S1 2025 Actual Test Q1(a) – 1 mark =====

Question: In a smart office, $(P \vee Q) \rightarrow R$. The alarm did not sound ($\neg R$). Deduce what must be true about P and Q.

Where: P = door is open, Q = motion sensor triggered, R = alarm sounds.

Model Answer:

Given: $(P \vee Q) \rightarrow R$ and $\neg R$.

By Modus Tollens: $\neg R \Rightarrow \neg(P \vee Q)$.

By De Morgan’s Law: $\neg(P \vee Q) \equiv \neg P \wedge \neg Q$.

Conclusion: The door was NOT open AND the motion sensor was NOT triggered. (Both must be false.)

Critical difference from the sample test: Here the premise uses $\vee$ (OR), so De Morgan’s produces $\wedge$ (AND). The conclusion is STRONGER: BOTH P and Q must be false (not just “at least one”).

| Premise Connective | After De Morgan’s | Conclusion Strength |

|---|---|---|

| $A \wedge B$ (AND) | $\neg A \vee \neg B$ | At least one is false |

| $A \vee B$ (OR) | $\neg A \wedge \neg B$ | BOTH are false |

===== EXAM Q5: S1 2025 Actual Test Q1(b) – 1 mark =====

Question: $\forall x, (\text{Cheat}(x) \rightarrow \text{Disqualified}(x))$. Alice is not disqualified. Did Alice cheat?

Model Answer:

From the universal rule: $\forall x, (\text{Cheat}(x) \rightarrow \text{Disqualified}(x))$

Instantiate for Alice: $\text{Cheat}(\text{Alice}) \rightarrow \text{Disqualified}(\text{Alice})$

Given: $\neg\text{Disqualified}(\text{Alice})$

By Modus Tollens: $\neg\text{Disqualified}(\text{Alice}) \Rightarrow \neg\text{Cheat}(\text{Alice})$

Conclusion: Alice did not cheat.

Key steps for marks: (1) Universal instantiation, (2) Modus Tollens, (3) Conclusion in English.

===== EXAM Q6: S1 2026 Sample Test Q1(a) – 3 marks =====

Question: SAME scenario as 2025 sample $(I \wedge F) \rightarrow E$, $\neg E$, but now explicitly requires truth table for 3 marks.

Model Answer:

Step 1 (1 mark): Let $X = I \wedge F$. Truth table for $X \rightarrow E$:

$X$ ($I \wedge F$) $E$ $X \rightarrow E$ 0 0 1 0 1 1 1 0 0 1 1 1 Step 2 (1 mark): Since $E = 0$ and $X \rightarrow E$ is true (given the rule holds), the only valid row is row 1 where $X = 0$. Therefore $I \wedge F = 0$.

Truth table for $I \wedge F$:

$I$ $F$ $I \wedge F$ 0 0 0 ✓ 0 1 0 ✓ 1 0 0 ✓ 1 1 1 ✗ Step 3 (1 mark): Since $I \wedge F = 0$, at least one of $I$ or $F$ must be 0.

Conclusion: The person either did not have a valid ID or the fingerprint did not match (or both).

===== EXAM Q7: S1 2026 Sample Test Q1(b) – 2 marks =====

Identical to S1 2025 Sample Q1(b). Same answers apply:

- (i) $\neg\forall x, \text{Fly}(x)$ [1 mark]

- (ii) “There is a penguin in this region, and penguins cannot fly.” [1 mark]

===== LECTURE MOTIVATION QUESTION (potential exam question) =====

Question (slide 4-5): “If it rains, I bring an umbrella” ($P \rightarrow Q$). You see the person with an umbrella ($Q$). Can you conclude it is raining ($P$)?

Answer: No. $Q \rightarrow P$ (converse) is NOT logically equivalent to $P \rightarrow Q$. Seeing $Q$ true does not let us conclude $P$. This is the fallacy of Affirming the Consequent.

To conclude $P$, you would need $Q \rightarrow P$ (the converse) as a separate rule, or equivalently $P \leftrightarrow Q$ (biconditional).

🔬 Additional Practice Problems (Exam-Style)

Practice 1: Combined Modus Tollens with Additional Information

Given: $(P \wedge A) \rightarrow C$; $\neg C$; $A$ is true.

Question: What can you conclude about P?

Click to see answer

Step 1: By Modus Tollens: $\neg C$ and $(P \wedge A) \rightarrow C$ gives $\neg(P \wedge A)$.

Step 2: $\neg(P \wedge A) = \neg P \vee \neg A$ (De Morgan’s).

Step 3: Since $A$ is TRUE, $\neg A$ is FALSE.

Step 4: Therefore $\neg P \vee \text{FALSE} = \neg P$ must be TRUE.

Conclusion: $P$ is false. The student did NOT pass the exam.

Practice 2: FOL Translation – University Scenario

Translate: “Every computer science student at Auckland takes at least one math course.”

Domain setup:

- $\text{CS}(x)$: x is a CS student

- $\text{Math}(y)$: y is a math course

- $\text{Takes}(x, y)$: student x takes course y

Click to see answer

$$\forall x, [\text{CS}(x) \rightarrow \exists y, (\text{Math}(y) \wedge \text{Takes}(x, y))]$$

Read back: “For all x, if x is a CS student, then there exists a y such that y is a math course and x takes y.”

Note the nested quantifiers: $\forall$ outside, $\exists$ inside. The $\exists y$ is within the scope of $\forall x$.

Practice 3: Identify the Fallacy

Given: $\forall x, (\text{Student}(x) \rightarrow \text{Enrolled}(x, \text{Uni}))$. David is enrolled at Uni. Conclusion: David is a student.

Click to see answer

This is INCORRECT. This is Affirming the Consequent.

The rule says: Student $\rightarrow$ Enrolled. Being enrolled does not mean being a student. David could be enrolled as staff, auditor, etc.

To conclude David is a student, you would need the converse: $\text{Enrolled}(x, \text{Uni}) \rightarrow \text{Student}(x)$, which is a different (and not given) rule.

Practice 4: FOL with Negated Quantifiers

Translate: “No robot in the warehouse is idle.”

Click to see answer

Option 1: $\neg\exists x, \text{Idle}(x)$ (“there does not exist an idle robot”)

Option 2 (equivalent): $\forall x, \neg\text{Idle}(x)$ (“every robot is not idle”)

These are equivalent by quantifier negation: $\neg\exists x, \varphi(x) \equiv \forall x, \neg\varphi(x)$

Practice 5: Truth Table for OR-based Implication

Given: $(A \vee B) \rightarrow C$, $\neg C$. Deduce what must be true.

Click to see answer

By Modus Tollens: $\neg(A \vee B)$.

By De Morgan’s: $\neg A \wedge \neg B$.

Both A and B must be false. (This is the same pattern as the S1 2025 actual test.)

Full truth table verification:

| $A$ | $B$ | $A \vee B$ | $C$ | $(A \vee B) \rightarrow C$ |

|---|---|---|---|---|